How the Google leak confirms the significance of author and publisher entities in SEO

Written on June 6, 2024 at 9:44 pm, by admin

The May 2024 Google leak has shed light on the growing importance of author and publisher entities in SEO.

This article explains how the Google leak confirms that the search engine can identify content creators and website owners. It also shows why this is a great opportunity for SEOs to optimize author and publisher entities in their strategies.

Expanding SEO: Beyond websites to publishers and authors

For over 30 years, SEO has focused on website and content-level optimization.

We can keep that. It is valuable and it works.

However, SEO needs to add two new layers of optimization:

- The website owner (publisher).

- The content creator (author).

This allows Google to assess each related entity and gives it confidence in the brand, so it can award each entity a kgmid (Knowledge Graph machine ID) and a place in Google’s Knowledge Graph, the cornerstone of search and generative AI.

Since 2015, I have optimized thousands of website entities, website owner entities and author entities to create Knowledge Panels and optimize brand SERPs (the search results for a brand name search).

Google identifies and assesses the credibility of the content, the entity behind the website and the content creators on the website.

How do I know? I have the data.

The Google leak does not list ranking factors or the machine learning elements that drive Google’s knowledge algorithms. Still, it reinforces what I see daily: Google’s algorithms successfully detect and evaluate the credibility of website owners and content creators.

And this is an enormous opportunity for SEO.

A new three-tiered approach to SEO

Tier 1: Optimizing website content with traditional SEO

Continue to focus on website-level optimization – technical SEO, links and content. This will drive traffic to the website and convert visitors.

The two tiers below provide a holistic SEO strategy that will thrive and survive in generative AI results and contribute to the wider business digital marketing objective of acquiring clients and making sales.

Tier 2: Optimizing the website owner (publisher)

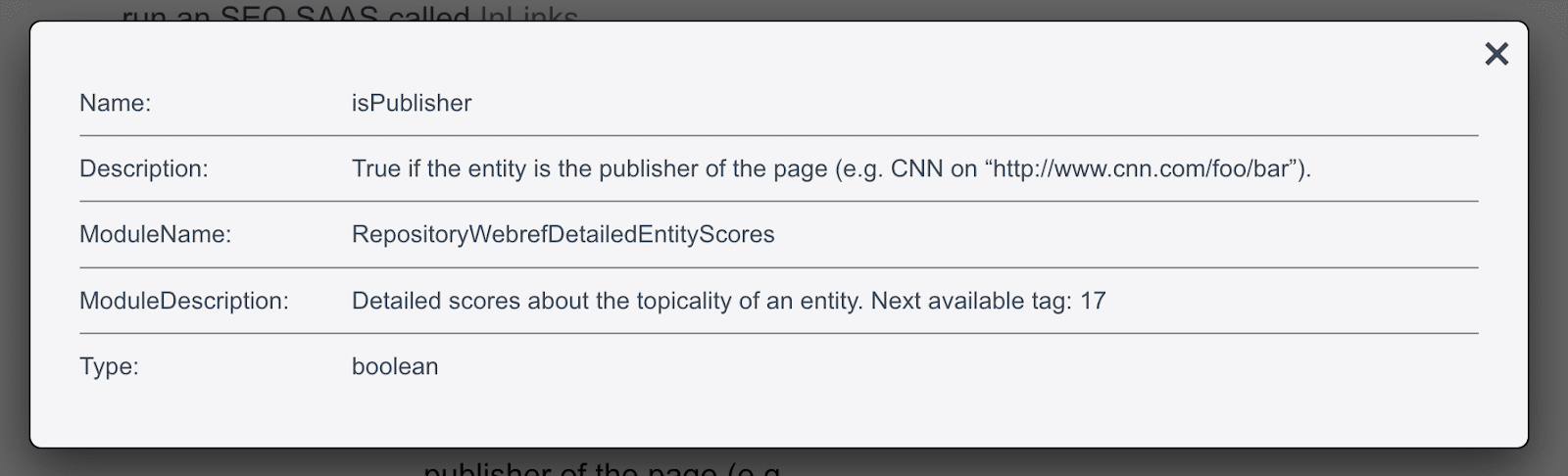

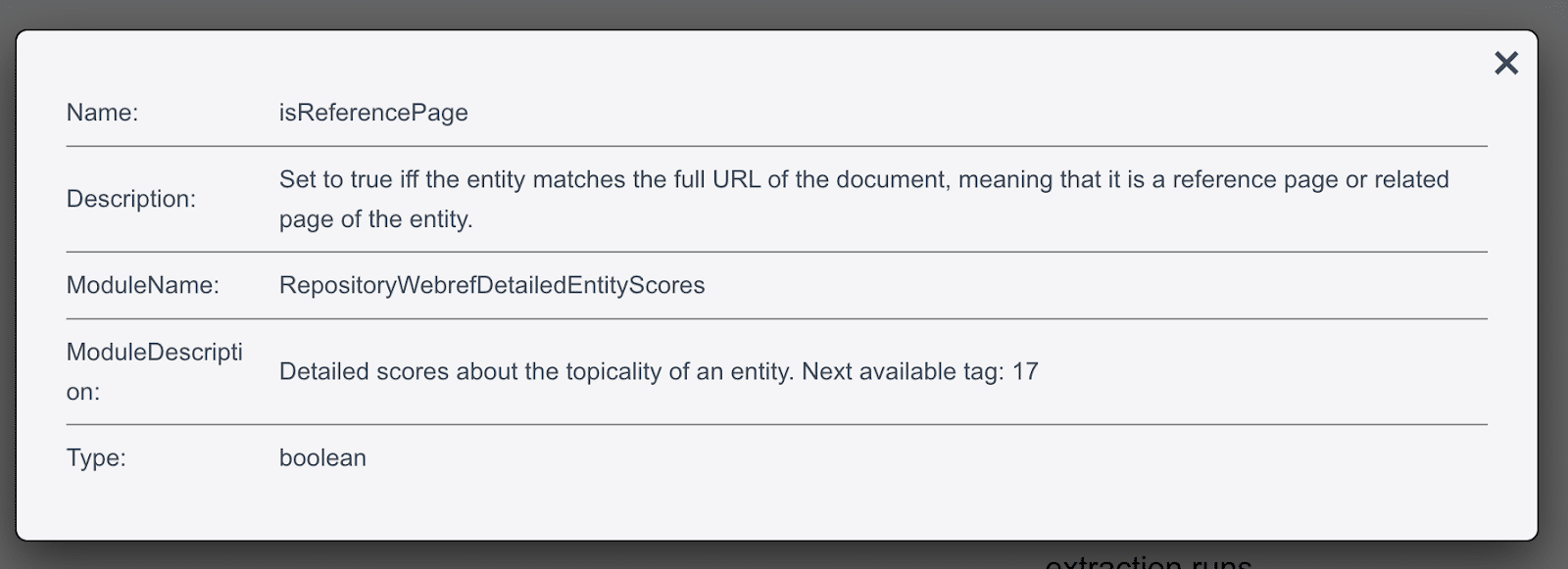

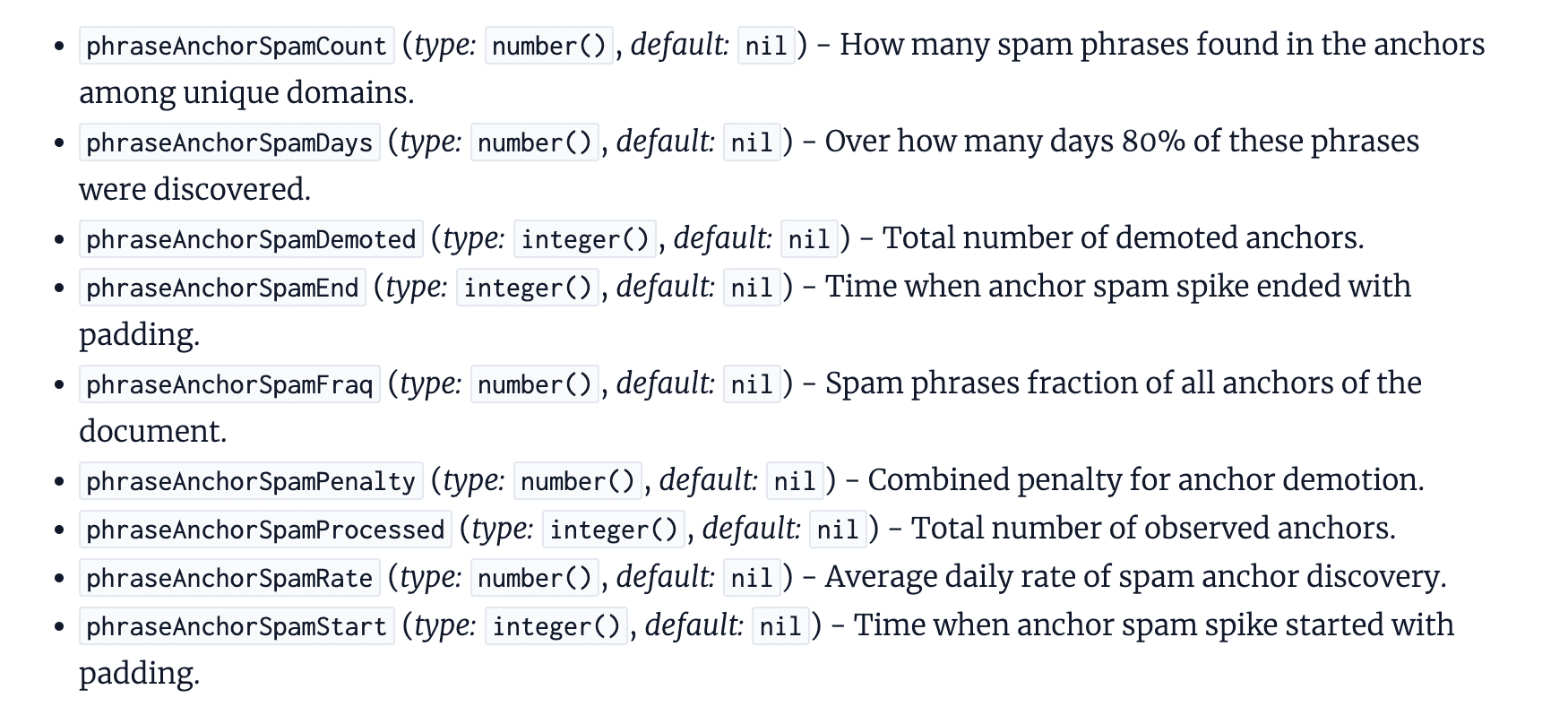

Google leak: isPublisher

The screenshots of the documents are thanks to Dixon Jones from InLinks, who provides a great resource for searching and displaying the documentation.

The isPublisher element in the leak corresponds directly to the changes made to the Search Quality Rater Guidelines between December 2022 and September 2023.

Mentions of “website” were replaced by “website owner” (a.k.a. publisher). There are now 20 mentions of “website owner.”

This website owner entity is the “guarantor” of the content, standing firmly behind it. Who is putting their reputation on the line by publishing this? Is that entity trustworthy?

Note: The publisher will often be an organization (including a corporation, local business or educational organization), but it can also be a person. In this article, I am assuming a corporation.

The isPublisher variable is boolean, so the outcome is binary: either the algorithms understand who the publisher is, or they don’t. If they don’t, Google loses confidence in the website and its content because it doesn’t know who has put their reputation on the line.

Optimizing for website owner/publisher entities

Step 1 of tier 2: Understandability

Understandability is the foundation of website owner optimization. Without this, the rest won’t work. You absolutely cannot skip this step.

Educate Google’s knowledge algorithms so that they understand the entity that published the content: who they are, what they offer and who they serve.

Focus on the clarity, consistency and accuracy of all information on the entity home and company profiles, creating an infinite loop of self-corroboration. The leak shows that Google identifies reference pages for entities (isReferencePage), a concept similar to the sameAs and subjectOf properties in Schema.org markup.
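Self-corroboration usually starts with structured data on the entity home. Below is a minimal sketch, assuming a hypothetical company “Example Corp” whose entity home is its About page (all names and URLs are placeholders). It generates Schema.org Organization markup that uses sameAs and subjectOf to point at corroborating pages. The leak only shows an isReferencePage attribute; whether, or how, this markup feeds it is not documented.

```python
import json

# Hypothetical entity home; every URL and name here is a placeholder.
ENTITY_HOME = "https://www.example.com/about/"

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    # A stable @id lets other markup (e.g. an Article's publisher) refer to this entity.
    "@id": ENTITY_HOME + "#organization",
    "name": "Example Corp",
    "url": "https://www.example.com/",
    "description": "Example Corp makes accounting software for small retailers.",
    # sameAs: profiles on other sites that describe this same entity.
    "sameAs": [
        "https://www.linkedin.com/company/example-corp",
        "https://www.crunchbase.com/organization/example-corp",
    ],
    # subjectOf: a creative work about the entity, such as a third-party profile.
    "subjectOf": {
        "@type": "NewsArticle",
        "url": "https://news.example.org/example-corp-profile",
    },
}

markup = json.dumps(organization, indent=2)
# Paste the printed script tag into the entity home page.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The same sameAs URLs should, in turn, link back to the entity home, which is what closes the self-corroboration loop.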

Your KPI in step 1 is obtaining a kgmid in Google’s Knowledge Graph for your website owner entity. Once you have a kgmid, your entity optimization has started.

A corporate entity in Google’s Knowledge Graph

But don’t stop there. Keep building confidence in Google’s understanding. Confidence is key.

Google is more likely to prioritize entities when it is confident it understands who they are, what they offer and which audience they serve.

Expand the footprint with references on relevant second- and third-party websites to build confidence in understanding.

Google associates reference pages from all across the web with the website owner entity through inbound links, explicit mentions and even implicit mentions, as the variables in the leak suggest.

Your KPIs for confidence in understanding will combine the items listed below.

- The confidence score in Google’s Knowledge Graph. (WordLift’s score of 197 in the screenshot above is solid.)

- The stability of a Knowledge Panel (when it 100% reliably triggers on a brand SERP).

- The entity home is displayed in the Knowledge Panel.

- The presence of related entities in the Knowledge Panel, such as a People Also Search For section.
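One way to track the first of these KPIs is Google’s public Knowledge Graph Search API, whose results include each entity’s @id (a kgmid prefixed with “kg:”) and a resultScore. Treating resultScore as the “confidence score” that third-party tools report is an assumption. The sketch below only builds the request URL (you would need your own API key to send it) and parses a made-up response whose ids and scores are invented.

```python
import json
from urllib.parse import urlencode

KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def build_query_url(name: str, api_key: str, limit: int = 5) -> str:
    """Build a Knowledge Graph Search API request URL for an entity name."""
    params = {"query": name, "key": api_key, "limit": limit}
    return f"{KG_ENDPOINT}?{urlencode(params)}"


def extract_entities(response: dict) -> list[tuple[str, str, float]]:
    """Return (kgmid, name, resultScore) tuples, highest score first."""
    rows = []
    for item in response.get("itemListElement", []):
        result = item.get("result", {})
        # The API prefixes ids with "kg:"; strip it to get the bare kgmid.
        kgmid = result.get("@id", "").removeprefix("kg:")
        rows.append((kgmid, result.get("name", ""), item.get("resultScore", 0.0)))
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Invented response in the API's shape; these ids and scores are not real.
sample = json.loads("""
{
  "itemListElement": [
    {"result": {"@id": "kg:/m/0example2", "name": "Example Corporation Ltd"}, "resultScore": 12.5},
    {"result": {"@id": "kg:/g/11example01", "name": "Example Corp"}, "resultScore": 150}
  ]
}
""")

print(build_query_url("Example Corp", "YOUR_API_KEY"))
for kgmid, name, score in extract_entities(sample):
    print(kgmid, name, score)
```

Running this query for your brand name over time gives a simple record of whether the kgmid stays the same and whether its score trends up.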

Step 2 of tier 2: Credibility

Google refers to credibility as E-E-A-T. At my company, we use an extended version: N-E-E-A-T-T (adding Notability and Transparency, both vital to entity optimization).

Demonstrating N-E-E-A-T-T credibility requires that you communicate the following things to Google.

- Notability: The company is a recognized market leader cited by multiple leading resources inside the industry (and, where possible, generalist resources such as major media sites, Wikipedia, etc.).

- Experience: The company has visible, historical and recognized involvement in the topic.

- Expertise: The company provides topical content that aligns with generally accepted industry facts across owned sites, social platforms and third-party sites. The content provides relevant solutions to common problems faced by the target audience.

- Authoritativeness: The company has mentions and links back to its entity home from multiple relevant authoritative websites. It has recent and historical relationships with market-leading companies and influential people in the industry.

- Trustworthiness: The company is regularly cited positively by leading industry resources and the target audience (clients and users) on forums and across the web.

- Transparency: The company provides clear, up-to-date and accurate information about itself and its products on its website and across the web. It interacts openly and clearly with users and clients web-wide.

Your work as an SEO is to use traditional SEO tactics to ensure that this information is discoverable for Google and provided in a format that Google can digest easily and analyze confidently.

Don’t focus only on the company’s website. You must look wider and work on second-party websites such as social media platforms, profile pages and review sites (see isReferencePage above).

Your KPIs for credibility will be a combination of the following things.

- The quality (information-richness) of a Knowledge Panel.

- The number, relevancy and confidence scores for related entities in the Knowledge Graph. WordLift has 345 entity associations in the example above (but be wary when too many are irrelevant).

- The quality and richness of the brand SERP.

- The quality and richness of SERPs for queries that include the company name.

Step 3 of tier 2: Deliverability

Deliverability is not directly SEO’s responsibility; it is part of the marketing, funnel and acquisition strategy.

It ensures your content is strategically placed across the right online platforms to reach your target audience: the right content on the right platforms for the right audience, across the market’s entire digital ecosystem.

Ideally, the company achieves omnipresence for its ideal client, showing up everywhere that client looks for solutions to their problems.

SEO plays an essential supporting role by “packaging” this content so Google can understand it.

Your KPIs for deliverability will be a combination of the following things.

- Brand search volume.

- Brand SERP click-through rate.

- Positive sentiment and accuracy of assistive engine descriptions (ChatGPT, Bing Copilot, Google Gemini, etc.)

- A presence in top- and middle-of-funnel results.

- Proto-measurements in search and assistive AI results.

Dig deeper: Modern SEO: Packaging your brand and marketing for Google

Tier 3: Optimizing the content creator (author)

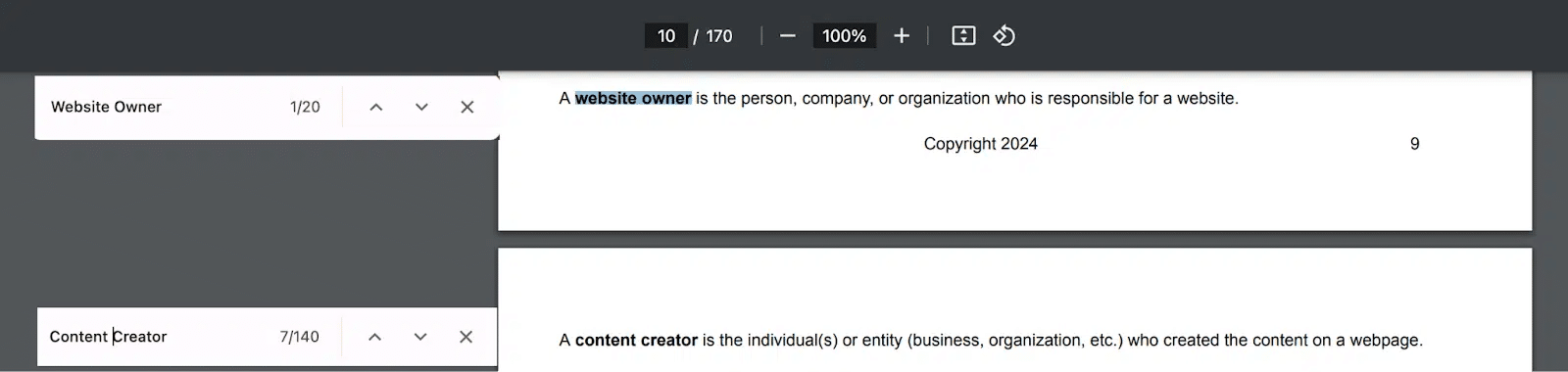

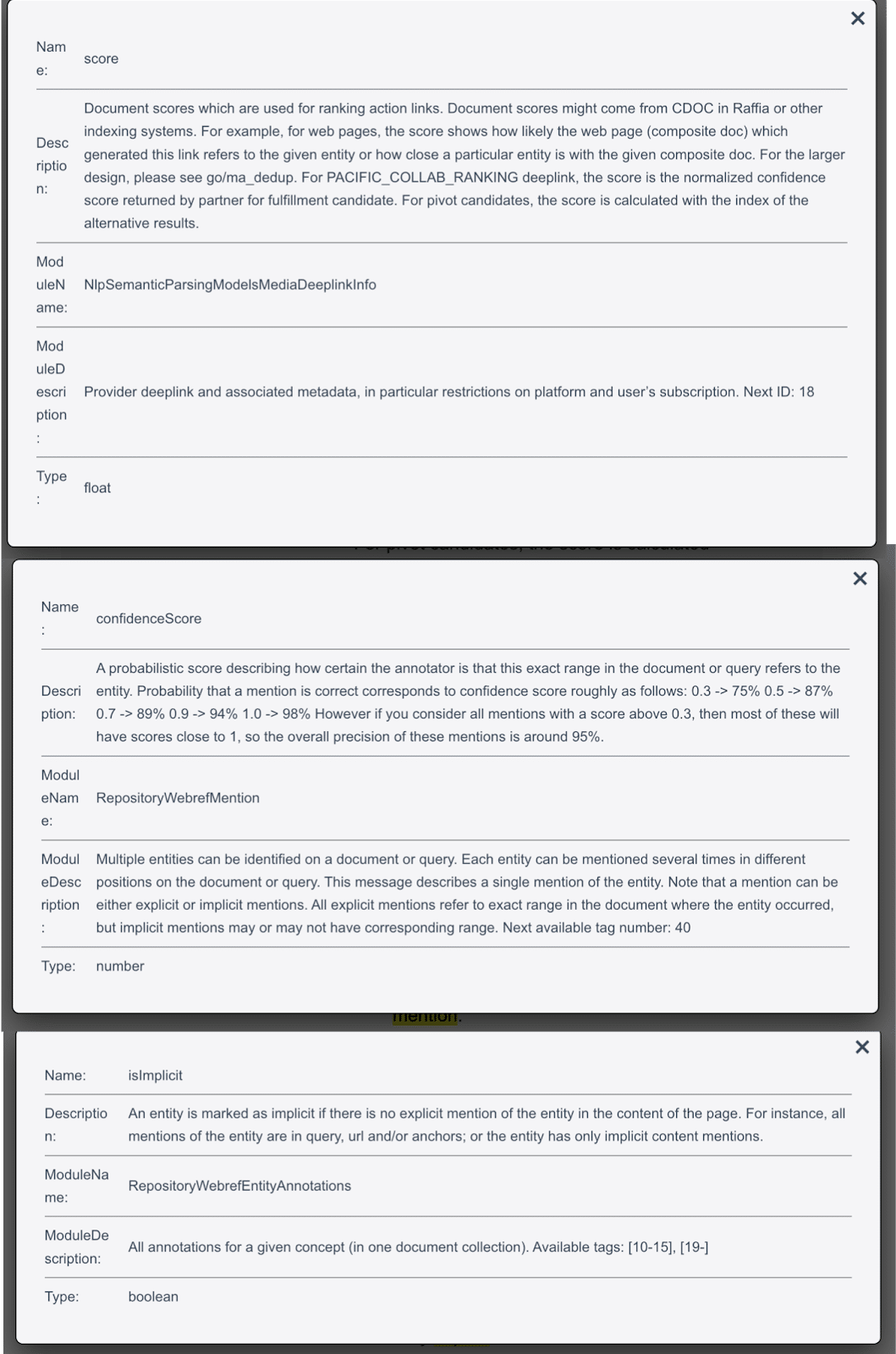

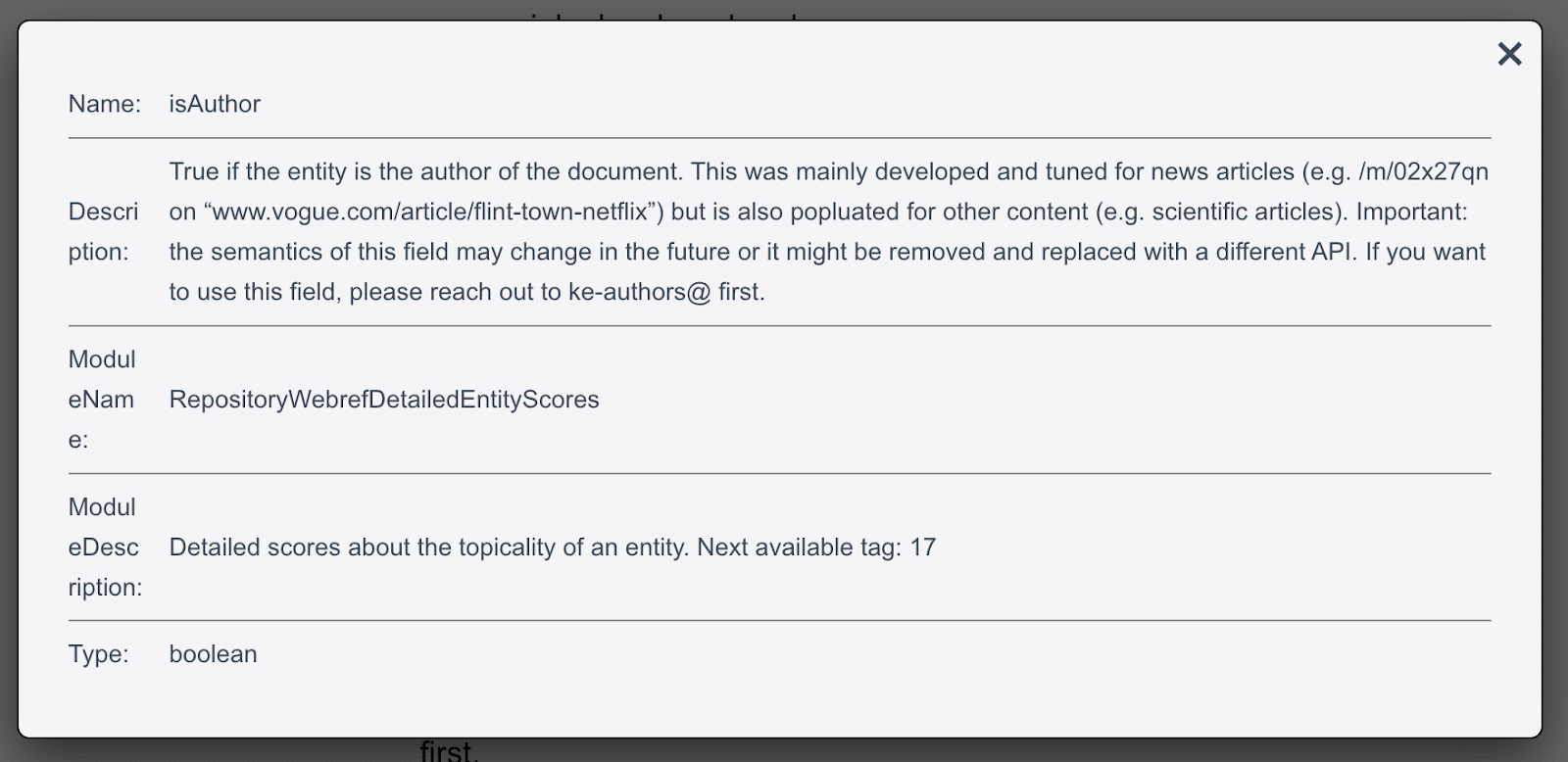

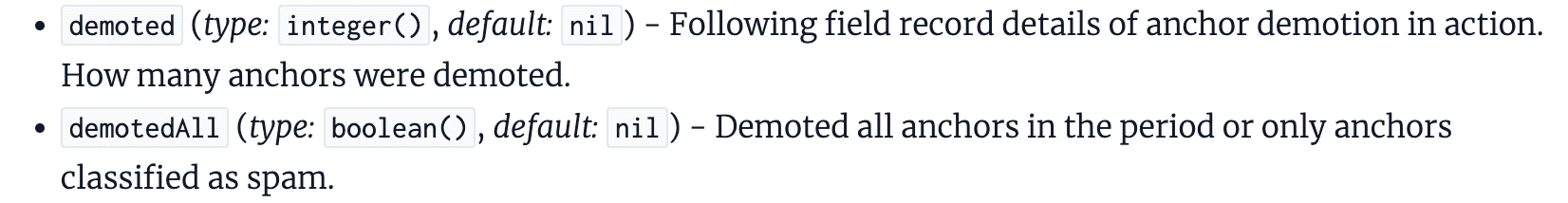

Google leak: isAuthor

Like isPublisher, isAuthor corresponds directly to the changes made to the Search Quality Rater Guidelines between December 2022 and September 2023, when mentions of “content creator” (author) increased sharply. The guidelines now mention “content creator” 140 times.

The content creator entity is responsible for the information in the content and stands behind it. Google wants to know who is creating the content and whether they are trustworthy.
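On the page itself, Schema.org Article markup is one documented way to state who created a piece of content and who published it. Below is a minimal sketch, assuming a hypothetical author “Jane Doe” and publisher “Example Corp” (all URLs are placeholders). There is no public evidence that this markup sets isAuthor or isPublisher directly; it simply makes both entities explicit and machine-readable.

```python
import json

SITE = "https://www.example.com"  # hypothetical site

article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "How to reconcile inventory at month end",
    "datePublished": "2024-06-06",
    # The content creator: the url points at the author's entity home.
    "author": {
        "@type": "Person",
        "@id": f"{SITE}/team/jane-doe/#person",
        "name": "Jane Doe",
        "url": f"{SITE}/team/jane-doe/",
    },
    # The website owner: the @id should match the one used in the
    # publisher's own Organization markup on its entity home, if any.
    "publisher": {
        "@type": "Organization",
        "@id": f"{SITE}/about/#organization",
        "name": "Example Corp",
    },
}

print(json.dumps(article, indent=2))
```

Reusing the same @id values across every article keeps the author and publisher references consistent site-wide.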

The isAuthor variable is also boolean, so the outcome is binary: either the algorithms understand who the content creator is, or they don’t. If they don’t, you aren’t even in the game for tier 3.

Optimizing for content creator/author entities

The three-step process I described above for a website owner/publisher works similarly for a personal entity and personal brand strategy, so I won’t repeat everything here; I’ll just highlight the differences.

Step 1 of tier 3: Understandability

Google’s knowledge algorithms have been focusing almost exclusively on person entities since the first Killer Whale Update in July 2023, so getting a place in the Knowledge Graph and triggering a Knowledge Panel is significantly easier for a person than for a company.

The process is the same. Create an infinite loop of self-corroboration.

- Identify an entity home.

- Make all references to the person clear, accurate and consistent across the web.

- Link from the entity home to each corroborative source, and from each source back to the entity home.
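The linking loop in these steps is easy to audit once you have crawl data. The sketch below assumes you already have a map of each page to the URLs it links to (from any crawler); the function name and all URLs are hypothetical. It reports which sources the entity home fails to link to and which sources fail to link back.

```python
def corroboration_gaps(entity_home: str, sources: list[str],
                       links: dict[str, set[str]]) -> dict[str, list[str]]:
    """Report where the entity home <-> source link loop is broken.

    `links` maps each crawled page URL to the set of URLs it links to.
    """
    home_links = links.get(entity_home, set())
    return {
        # Sources the entity home does not link out to.
        "missing_from_home": [s for s in sources if s not in home_links],
        # Sources that do not link back to the entity home.
        "missing_backlink": [s for s in sources
                             if entity_home not in links.get(s, set())],
    }


# Hypothetical entity home and corroborating profiles.
home = "https://janedoe.example/about"
profiles = [
    "https://www.linkedin.com/in/janedoe-example",
    "https://conference.example.org/speakers/jane-doe",
]
crawl = {
    home: {profiles[0]},
    profiles[0]: {home},
    profiles[1]: set(),  # speaker page with no link back yet
}
print(corroboration_gaps(home, profiles, crawl))
```

Here the speaker page shows up in both lists: the entity home doesn’t link to it, and it doesn’t link back.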

Your KPIs for confidence in understanding will be the same as for the website owner/publisher/corporation entity.

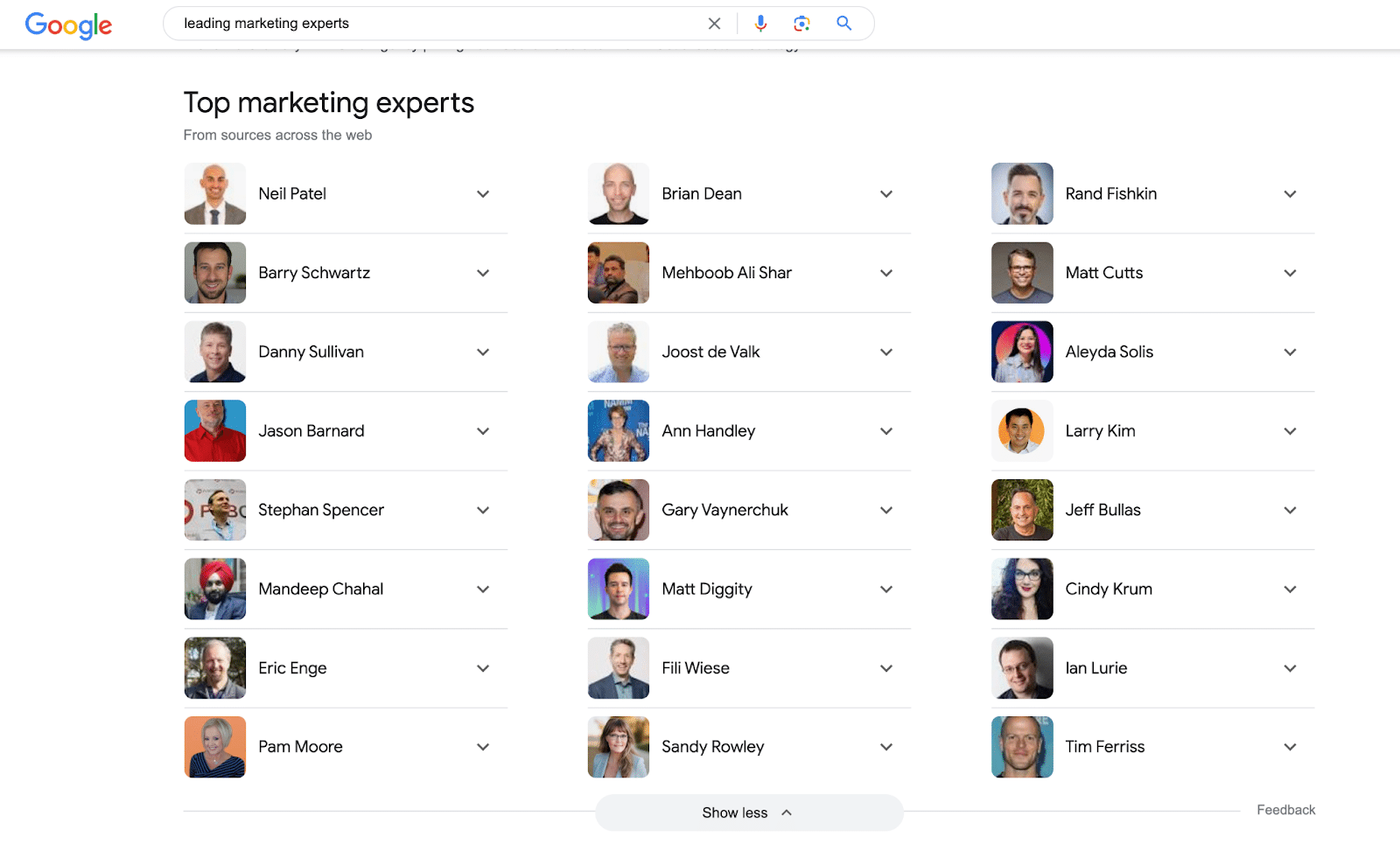

Step 2 of tier 3: Credibility

You need to be transparent to demonstrate to the algorithms that the content creator is an expert, has experience and is authoritative and trustworthy.

If you want to be at the top of the results, you must make that entity notable in its field. This is niche notability. Trusted and famous in a niche beats trusted every day of the week.

That is how you can get an author or person entity to the top of an entity list.

Your KPIs for confidence in credibility will be the same as for the website owner/corporation entity.

Step 3 of tier 3: Deliverability

Optimizing an author entity or a personal brand means maintaining a consistent presence wherever the audience may be online. The message and content are distributed across the digital landscape to engage with the target audience.

By aligning content with the platforms their ideal audience naturally uses, the author shows up at every stage of that audience’s online journey, offering insights, solutions or services related to its interests and needs.

Deliverability is not SEO’s direct remit. SEO leverages maximum value from digital content assets wherever they appear online by ensuring they are discoverable, digestible and attractive to Google.

Your KPIs for deliverability will be the same as for the website owner/corporation entity.

Incorporating author and publisher entity optimization into your SEO strategy

The May 2024 Google leak is a definitive signal for SEOs and business leaders to embrace an expanded approach to optimization.

Recognizing the importance of entities, specifically authors and publishers, means adapting your strategies to align with Google’s evolving ability to understand the world and evaluate the credibility and authority of the players.

The traditional focus on website and content optimization remains the essential foundation, but adding entity optimization at both the publisher and author levels is vital.

The three-tiered strategy, with the publisher and author entities each optimized for understandability, credibility and deliverability, ensures that you are not just optimizing for search engines but establishing a clear identity for all related entities within the relevant market’s digital ecosystem.

As you progress with your SEO efforts, remember that it’s no longer just about climbing SERP rankings; it’s about building trust with users and search engines through insightful entity optimization.

This holistic approach will future-proof your SEO and provide greater visibility, user engagement, and, ultimately, sales and revenue for your company or clients.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Category seo news | Tags:


Google sued by publishers over alleged pirate textbook promotion

Written on June 5, 2024 at 6:44 pm, by admin

Major educational publishers — Cengage, Macmillan Learning, McGraw Hill and Elsevier — have filed a lawsuit against Google, accusing it of promoting pirated copies of their textbooks.

Why it matters. This case could reshape how tech giants handle copyright infringement and impact the $8.3 billion U.S. textbook market.

Why we care. Advertisers will care about this lawsuit because it strikes at the heart of ad integrity and fair competition. If the allegations are true (that Google promotes pirated textbooks while restricting ads for legitimate ones), the tech giant may not be providing a level playing field or ensuring brand safety.

Details.

- Filed in the U.S. District Court, Southern District of New York

- Google accused of ignoring thousands of infringement notices

- Pirated e-books allegedly featured at the top of search results

- Publishers claim Google restricts ads for licensed e-books

By the numbers. Pirated textbooks are often sold at artificially low prices, undercutting legitimate sellers.

What they’re saying. “Google has become a thieves’ den for textbook pirates,” Matt Oppenheim, the publishers’ attorney, told Reuters.

- Google hasn’t commented on the lawsuit.

What’s next. The case (No. 1:24-cv-04274) seeks unspecified monetary damages.


Google Ads inviting some advertisers to join Advisors Community

Written on June 5, 2024 at 6:44 pm, by admin

Google Ads is inviting select customers to join its Advisors Community, offering a rare chance to directly influence its products and services.

Why it matters. This move signals Google’s push for more customer-centric product development, potentially shaping the future of digital advertising.

Why we care. Advertisers have seen many Google product updates that seriously lacked effectiveness, and many have asked to be part of the discussion before an update launches. This may be the answer to their long-running concerns.

Details.

- Members provide brief monthly feedback (max 4 surveys, ~5 mins each)

- Opportunity to impact unreleased products

- Quarterly updates on how feedback is used

How to join. If you received the invitation email, fill out a quick, confidential questionnaire via third-party provider Alida.

Between the lines. By involving customers early, Google aims to better align its tools with user needs, possibly to maintain its dominance in the competitive ad tech space.

Reaction. I first saw this on Google Ads consultant Boris Beceric’s LinkedIn profile. When asked to comment, Beceric said:

- “I welcome the opportunity to have a more direct way of giving feedback to Google. I know they are listening (e.g. they gave us more control & reporting for Performance Max), but sometimes it is just so frustrating to be seeing things in the accounts that are opaque for no good reason. Criticizing on social media is not really going to move the needle in advertiser’s favor, so I think we need to take these opportunities when they are presented to us.”

What to watch. How much Google actually incorporates user feedback and whether this improves Google Ads products.

The email. Here’s a screenshot of the email Beceric shared on LinkedIn:


Google must face $17 billion UK ad tech lawsuit

Written on June 5, 2024 at 6:44 pm, by admin

A UK court has ruled that Google must face a £13.6 billion ($17 billion) lawsuit alleging it wields too much power over the online advertising market.

Why we care. The case could have far-reaching implications for the digital ad industry. Will this lead to advertisers spending less on Google?

Driving the news. The Competition Appeal Tribunal in London rejected Google’s attempt to dismiss the case, allowing it to proceed to trial.

The lawsuit, brought by Ad Tech Collective Action LLP, claims Google’s anticompetitive practices have cost UK online publishers money.

It alleges Google engages in “self-preferencing,” promoting its own products over rivals.

Google’s response. The tech giant calls the lawsuit “speculative and opportunistic,” vowing to “oppose it vigorously and on the facts.”

Context. This is just one of many regulatory challenges Google faces:

- Multiple probes, including the U.S. vs. Google antitrust trial

- Billions in fines from the EU for anticompetitive behavior

What’s next? No trial date is set yet. The case has already taken 18 months to reach this stage.


OpenAI’s growing list of partnerships

Written on June 5, 2024 at 6:44 pm, by admin

OpenAI has announced 30 significant deals with tech and media brands to date, including three in the past week with Vox Media, the Atlantic and the World Association of Newspapers and News Publishers.

Now there’s an easy way to keep track of them all: the OpenAI Partnerships List from Originality.AI.

Why we care. These partnerships will undoubtedly lead to greater discoverability in OpenAI products – think: featured content and citations (links) in ChatGPT. As we’ve seen from the Google-Reddit deal, brands with partnerships tend to get favorable placement, which is good news for those with such deals but bad news if you’re competing against them.

The content deals. Brands that sign on with OpenAI will be discoverable in OpenAI’s products, including ChatGPT. OpenAI will also use the content from these brands to train its systems.

The 30 deals. So far, OpenAI has partnered with:

- American Journalism Project

- AP (Associated Press)

- Arizona State University

- The Atlantic

- Atlassian

- Axel Springer

- Bain & Company

- BuzzFeed

- Consensus

- Dotdash Meredith

- Figure

- Financial Times

- G42

- GitHub

- Icelandic Government

- Le Monde

- Microsoft

- Neo Accelerator

- News Corp

- Opera Press

- Prisa Media

- Salesforce

- Sanofi & Formation Bio

- Shutterstock

- Stack Overflow

- Stripe

- Upwork

- Vox Media

- World Association of Newspapers and News Publishers (WAN-IFRA)

ChatGPT Search. While rumors of a ChatGPT Search product have quieted for now, we have already seen ChatGPT feature links more prominently in its answers. And we know OpenAI CEO Sam Altman is interested in creating a search product that is very different from Google.

- These deals could have even greater implications later because these brands will have an unfair advantage should ChatGPT Search become a viable Google alternative.


Perplexity Pages showing in Google AI Overviews, featured snippets

Written on June 4, 2024 at 3:43 pm, by admin

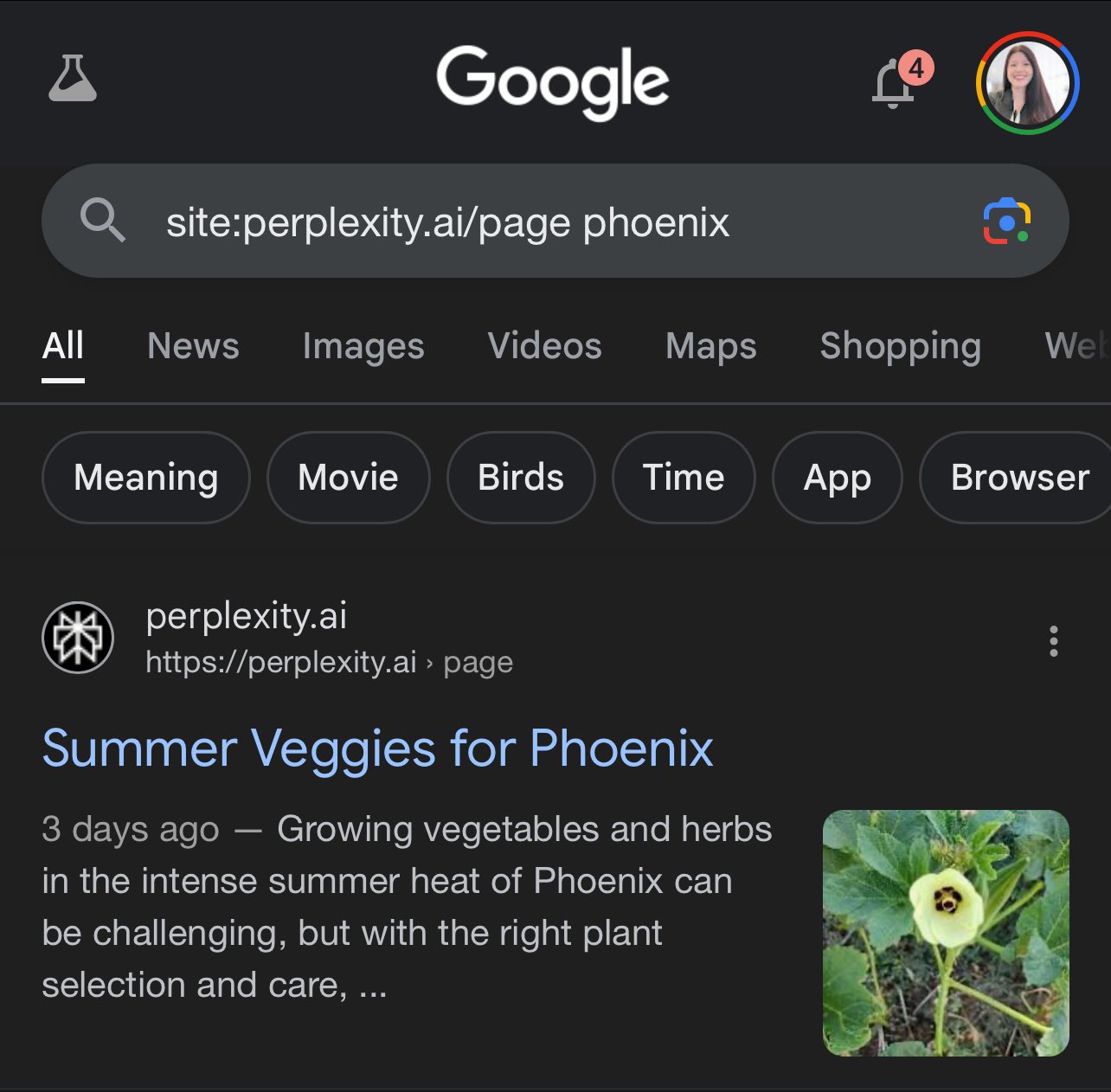

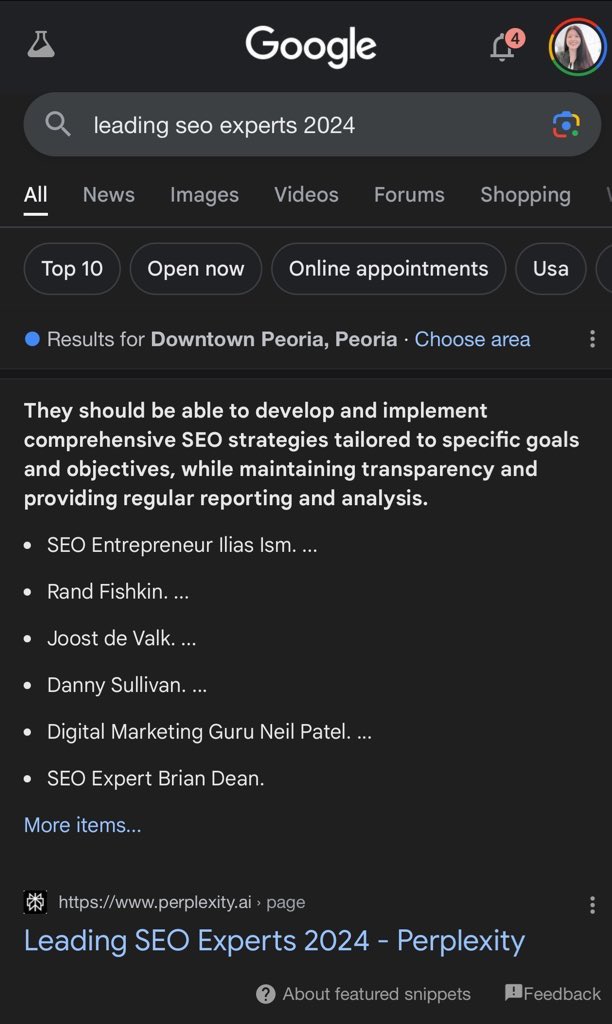

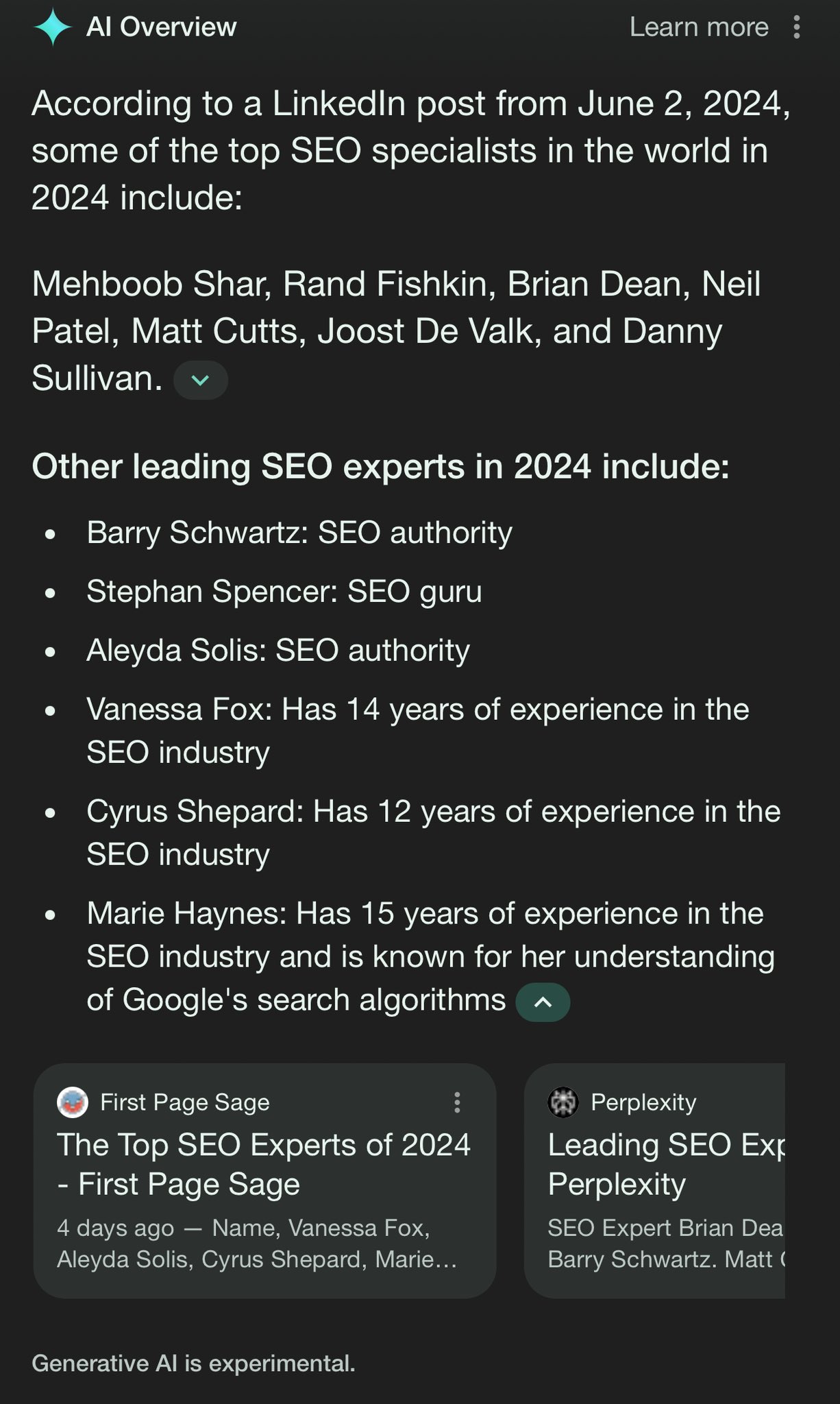

AI-powered search engine Perplexity introduced a new product – Perplexity Pages – to select free and paid users on May 30. Content from these Pages has started appearing in Google’s AI Overviews and featured snippets.

What are Perplexity Pages. Perplexity describes Pages as a way to share your knowledge with the world via in-depth articles, detailed reports or informative guides. You can even select the audience type (beginner, advanced, anyone) when having AI generate your content, and you can add sections, videos, images and more.

As TechCrunch explained it further:

- “All these pages are publishable and also searchable through Google. You can share the link to these pages with other users. They can ask follow-up questions on the topic as well. What’s more, users can also turn their existing conversation threads into pages with the click of a button.”

Pages in Google. These pages are being indexed by Google and included as citations in AI Overviews and appearing in featured snippets.

Here are examples showing this, shared on X by Kristi Hines:

Spam? This definitely seems like a case of one search engine spamming another search engine. Or, as Ryan Jones put it on X, “An AI overview of an AI overview in search results pages in my search results page.”

Aside from that absurdity, Perplexity Pages are ripe for abuse by those who will probably try to use them to hack their way into AI Overviews.

A big question is how Google will handle this new AI-generated content in its Search results long-term. This tactic could be short-lived, as Glenn Gabe pointed out in an X thread. From the thread:

- Shared searches used to be indexed in Google, but the March 2024 core update hammered these pages.

- This could be comparable to LinkedIn Collaborative Articles, which surged briefly before a huge drop.

Perplexity’s announcement. Introducing Perplexity Pages


Google Search fixes issues with Site names not appearing for internal pages

Written on June 4, 2024 at 3:43 pm, by admin

Google has fixed an issue where some internal pages would not show the proper Site name in the Google Search results. This has been an issue since at least December 2023 and is now resolved.

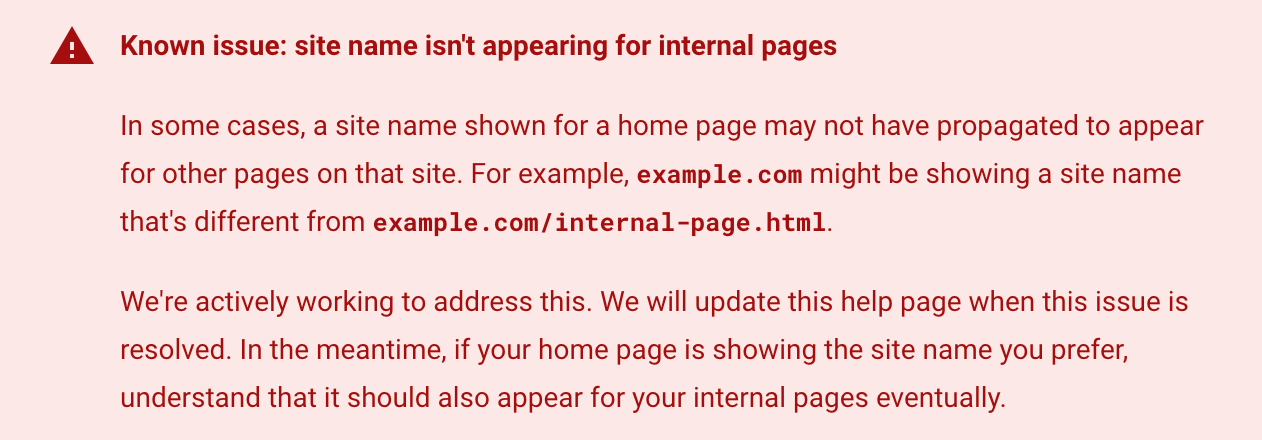

What changed. Google updated its site names documentation today to remove the “known issue” section that read:

In some cases, a site name shown for a home page may not have propagated to appear for other pages on that site. For example, example.com might be showing a site name that’s different from example.com/internal-page.html.

We’re actively working to address this. We will update this help page when this issue is resolved. In the meantime, if your home page is showing the site name you prefer, understand that it should also appear for your internal pages eventually.

Here is a screenshot of that section:

Still seeing the issue. You may still see this issue on some sites because it can take time for Google to reprocess all of the pages on a specific site. Google wrote in the updated documentation, “Not seeing your preferred site name for internal pages? If your home page is already showing your preferred site name, remember to also allow time for Google to recrawl and process your internal pages.”

Site names may still have other lingering issues, as we covered previously.

Site names timeline. Here is the timeline Google posted of the evolution of site names since they launched in October 2022:

- October 2022: Site names for the domain level were introduced for mobile search results for English, French, German and Japanese.

- April 2023 (I have this as March): Site names were added for desktop for the same set of languages.

- May 2023: Site names are supported on the subdomain level for the same set of languages and on mobile search results only.

Controlling site names. Back in October 2022, Google explained that Google Search uses a number of ways to identify the site name for a search result. If you want, you can use structured data on your home page to tell Google what your site name should be. Google has specific documentation on site name structured data.

Why we care. If some of your internal pages still don’t show the correct site name in Google Search, you may want to get Google to recrawl and reprocess them. Requesting indexing through the URL Inspection tool in Google Search Console may help trigger that process.
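Google’s site name documentation describes WebSite structured data on the home page, with a name property and optional alternateName and url properties. Below is a minimal sketch that generates that markup for a hypothetical site; the name, abbreviation and URL are placeholders.

```python
import json

website = {
    "@context": "https://schema.org",
    "@type": "WebSite",
    # Preferred site name, as you want it to appear in Google Search.
    "name": "Example Company",
    # Optional alternative, such as a common abbreviation.
    "alternateName": "EC",
    # The home page URL of the site.
    "url": "https://example.com/",
}

# This markup belongs on the home page.
print('<script type="application/ld+json">')
print(json.dumps(website, indent=2))
print("</script>")
```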

Otherwise, Google will update the pages over time, as it naturally reprocesses the pages.


Google’s documentation leak: 12 big takeaways for link builders and digital PRs

Written on June 4, 2024 at 3:43 pm, by admin

Lots of insights and opinions have already been shared about last week’s leak of Google’s Content Warehouse API documentation, including the fantastic write-ups from:

- Rand Fishkin

- Mike King (on the iPullRank site and here on Search Engine Land)

- Andrew Ansley

But what can link builders and digital PRs learn from the documents?

Since news of the leak broke, Liv Day, Digitaloft’s SEO Lead, and I have spent a lot of time investigating what the documentation tells us about links.

We went into our analysis of the documents trying to gain insights around a few key questions:

- Do links still matter?

- Are some links more likely to contribute to SEO success than others?

- How does Google define link spam?

To be clear, the leaked documentation doesn’t contain confirmed ranking factors. It contains information on more than 2,500 modules and over 14,000 attributes.

We don’t know how these are weighted, which are used in production and which could exist for experimental purposes.

But that doesn’t mean the insights we gain from these aren’t useful. So long as we treat any findings as things Google could be rewarding or demoting, rather than things it definitely is, we can use them as the basis of our own tests and come to our own conclusions about what is or isn’t a ranking factor.

Below are the things we found in the documents that link builders and digital PRs should pay close attention to. They’re based on my own interpretation of the documentation, alongside my 15 years of experience as an SEO.

1. Google is probably ignoring links that don’t come from a relevant source

Relevancy has been the hottest topic in digital PR for a long time, and something that’s never been easy to measure. After all, what does relevancy really mean?

Does Google ignore links that don’t come from within relevant content?

The leaked documents definitely suggest that this is the case.

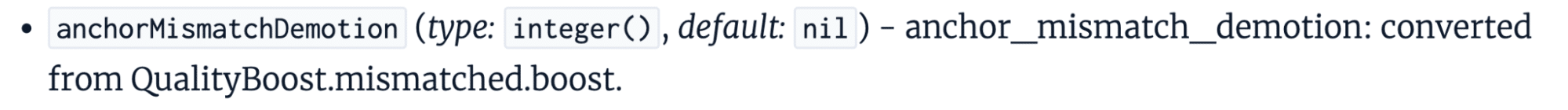

We see a clear anchorMismatchDemotion referenced in the CompressedQualitySignals module:

While we have little extra context, what we can infer from this is that there is the ability to demote (ignore) links when there is a mismatch. We can assume this to mean a mismatch between the source and target pages, or the source page and target domain.

What could the mismatch be, other than relevancy?

That seems especially likely given that the same module also contains an attribute called topicEmbeddingsVersionedData.

Topic embeddings are commonly used in natural language processing (NLP) to represent the semantic meaning of the topics within a document, which in the context of this documentation means a webpage.

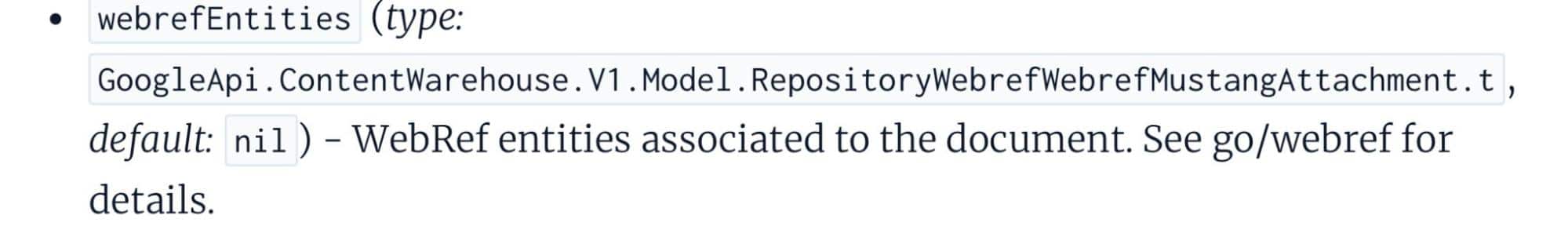

We also see a webrefEntities attribute referenced in the PerDocData module.

What’s this? It’s the entities associated with a document.

We can’t be sure exactly how Google is measuring relevancy, but we can be pretty certain that the anchorMismatchDemotion involves ignoring links that don’t come from relevant sources.

The takeaway?

Relevancy should be the biggest focus when earning links, prioritized over pretty much any other metric or measure.
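Google’s actual method for detecting an anchor mismatch is unknown, but a common way to compare topic embeddings is cosine similarity. The sketch below is a generic illustration, not Google’s implementation: it flags a source page as topically mismatched with a target page when their embeddings fall below a threshold. The three-dimensional vectors and the 0.5 threshold are invented for the example; real embeddings typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


MISMATCH_THRESHOLD = 0.5  # hypothetical cutoff, chosen for illustration


def is_topical_mismatch(source_vec: list[float], target_vec: list[float],
                        threshold: float = MISMATCH_THRESHOLD) -> bool:
    """True when the source and target topics look unrelated."""
    return cosine_similarity(source_vec, target_vec) < threshold


# Toy topic embeddings (invented values).
target_page = [0.9, 0.1, 0.0]       # e.g. a page about running shoes
relevant_source = [0.8, 0.2, 0.1]   # e.g. a marathon training article
unrelated_source = [0.0, 0.1, 0.95] # e.g. a mortgage rates article

print(is_topical_mismatch(relevant_source, target_page))   # False
print(is_topical_mismatch(unrelated_source, target_page))  # True
```

The point of the sketch is the shape of the check, not the numbers: relevance becomes a measurable distance between what the linking page is about and what the linked page is about.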

2. Locally relevant links (from the same country) are probably more valuable than ones from other countries

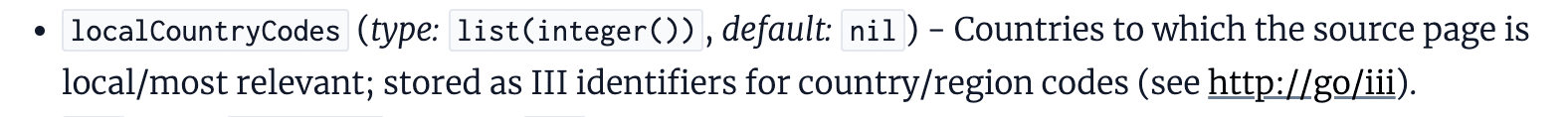

The AnchorsAnchorSource module, which gives us an insight into what Google stores about the source page of links, suggests that local relevance could contribute to the link’s value.

Within this document is an attribute called localCountryCodes, which stores the countries to which the page is local and/or the most relevant.

It’s long been debated in digital PR whether links coming from sites in other countries and languages are valuable. This gives us some indication as to the answer.

First and foremost, you should prioritize earning links from sites that are locally relevant. And if we think about why Google might weigh these links stronger, it makes total sense.

Locally relevant links (don’t confuse this with local publications that often secure links and coverage from digital PR; here we’re talking about country-level) are more likely to increase brand awareness, result in sales and be more accurate endorsements.

However, I don’t believe links from other locales are harmful. Rather, links where the country-level relevancy matches are likely weighted more strongly.
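For link prospecting, you can apply the same idea as a simple audit: tag each prospective source with the countries it primarily serves, then separate country-level matches from everything else. This is a sketch under stated assumptions: the LinkSource type is hypothetical, country codes are assumed to be ISO 3166-1 alpha-2, and the leak does not say how localCountryCodes is actually used in weighting.

```python
from dataclasses import dataclass, field


@dataclass
class LinkSource:
    url: str
    # Hypothetical stand-in for the leaked localCountryCodes attribute:
    # countries the source page is local to or most relevant for.
    local_country_codes: set[str] = field(default_factory=set)


def split_by_country(sources: list[LinkSource],
                     target_country: str) -> tuple[list[str], list[str]]:
    """Separate sources that match the target country from the rest."""
    matched = [s.url for s in sources if target_country in s.local_country_codes]
    other = [s.url for s in sources if target_country not in s.local_country_codes]
    return matched, other


prospects = [
    LinkSource("https://news.example.co.uk/story", {"GB"}),
    LinkSource("https://blog.example.de/artikel", {"DE", "AT"}),
    LinkSource("https://magazine.example.com/feature", {"US", "GB"}),
]
matched, other = split_by_country(prospects, "GB")
print("Country match:", matched)
print("Other locales:", other)
```

Links in the second list aren’t discarded; in line with the view above, they are simply lower priority.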

3. Google has a sitewide authority score, despite claiming they don’t calculate an authority measure like DA or DR

Maybe the biggest surprise to most SEOs reading the documentation is that Google has a “site authority” score, despite stating time and time again that they have no measure that’s like Moz’s Domain Authority (DA) or Ahrefs’ Domain Rating (DR).

In 2020, Google’s John Mueller stated:

- “Just to be clear, Google doesn’t use Domain Authority *at all* when it comes to Search crawling, indexing, or ranking.”

But later that year, he did hint at a sitewide measure, saying about Domain Authority:

- “I don’t know if I’d call it authority like that, but we do have some metrics that are more on a site level, some metrics that are more on a page level, and some of those site-wide level metrics might kind of map into similar things.”

Clear as day, in the leaked documents, we see a siteAuthority score.

To caveat this, though, we don’t know that this is even remotely in line with DA or DR. That is also likely why Google has answered questions about this topic the way it has.

Moz’s DA and Ahrefs’ DR are link-based scores based on the quality and quantity of links.

I’m doubtful that Google’s siteAuthority is solely link-based, though, given that a purely link-based score would be closer to PageRank. I’d be more inclined to suggest that this is some form of calculated score based on page-level quality scores, including click data and other NavBoost signals.

The likelihood is that, despite having a similar naming convention, this doesn’t align with DA and DR, especially given that we see this referenced in the CompressedQualitySignals module, not a link-specific one.

4. Links from within newer pages are probably more valuable than those on older ones

One interesting finding is that links from newer pages look to be weighted more strongly than those coming from older content, in some cases.

We see a reference to sourceType in the context of anchors (links), where the quality of a link’s source page is recorded in relation to the page’s index tier.

What stands out here, though, is the reference to newly published content (freshdocs) being a special case and considered to be the same as “high quality” links.

We can clearly see that the source type of a link can be used as an importance indicator, which suggests that this relates to how links are weighted.

What we must consider, though, is that a link can be defined as “high quality” without coming from a fresh page; fresh pages are simply treated as equivalent in quality.

To me, this backs up the importance of consistently earning links and explains why SEOs continue to recommend that link building (in whatever form, that’s not what we’re discussing here) needs consistent resources allocated. It needs to be an “always-on” activity.

5. The more Google trusts a site’s homepage, the more valuable links from that site probably are

We see a reference within the documentation (again, in the AnchorsAnchorSource module) to an attribute called homePageInfo, which suggests that Google could be tagging link sources as not trusted, partially trusted or fully trusted.

What the documentation does make clear is that this attribute applies when the source page is a website’s homepage, with a not_homepage value assigned to all other pages.

So, what could this mean?

It suggests that Google could be using some definition of “trust” of a website’s homepage within the algorithms. How? We’re not sure.

My interpretation: internal pages are likely to inherit the homepage’s trustworthiness.

To be clear: we don’t know how Google defines whether a page is fully trusted, not trusted or partially trusted.

But it would make sense that internal pages inherit a homepage’s trustworthiness and that this is used, to some degree, in the weighting of links and that links from fully trusted sites are more valuable than those from not trusted ones.
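To make that inheritance idea concrete, here is a minimal Python sketch of my interpretation, not Google’s code. The trust levels mirror those described in the documentation, but the `HomePageTrust` enum, the hostname-based lookup, the default for unknown sites and every multiplier in `ILLUSTRATIVE_WEIGHTS` are hypothetical assumptions; the leak gives no weights and doesn’t say how trust is assigned.

```python
from __future__ import annotations

from enum import Enum
from urllib.parse import urlparse


class HomePageTrust(Enum):
    # Levels named after those described in the leak; the numeric values are arbitrary
    NOT_HOMEPAGE = 0  # tag for non-homepage sources; this sketch resolves those to their homepage instead
    NOT_TRUSTED = 1
    PARTIALLY_TRUSTED = 2
    FULLY_TRUSTED = 3


# Hypothetical multipliers for illustration only; the documentation gives no values
ILLUSTRATIVE_WEIGHTS = {
    HomePageTrust.NOT_TRUSTED: 0.0,
    HomePageTrust.PARTIALLY_TRUSTED: 0.5,
    HomePageTrust.FULLY_TRUSTED: 1.0,
}


def inherited_trust(page_url: str, homepage_trust: dict[str, HomePageTrust]) -> HomePageTrust:
    """Assume an internal page inherits the trust level of its site's homepage."""
    host = urlparse(page_url).netloc
    # Assumption: sites with no recorded homepage trust are treated as not trusted
    return homepage_trust.get(host, HomePageTrust.NOT_TRUSTED)


def link_weight(page_url: str, homepage_trust: dict[str, HomePageTrust], base_weight: float = 1.0) -> float:
    """Scale a link's base weight by the hypothetical multiplier for its source's inherited trust."""
    level = inherited_trust(page_url, homepage_trust)
    # Any level without a multiplier (e.g. NOT_HOMEPAGE stored by mistake) contributes nothing
    return base_weight * ILLUSTRATIVE_WEIGHTS.get(level, 0.0)


trust = {"example.com": HomePageTrust.FULLY_TRUSTED, "spam.test": HomePageTrust.NOT_TRUSTED}
print(link_weight("https://example.com/blog/post", trust))
```

Here a link from a deep page on example.com takes on the homepage’s full trust, while one from spam.test is zeroed out. Real weighting, if it works this way at all, would be far less binary.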

6. Google specifically tags links that come from high-quality news sites

Interestingly, we’ve discovered that Google is storing additional information about a link when it is identified as coming from a “newsy, high quality” site.

Does this mean that links from news sites (for example, The New York Times, The Guardian or the BBC) are more valuable than those from other types of site?

We don’t know for sure.

But when looking at this (alongside the fact that these types of sites are typically the most authoritative and trusted publications online, as well as those that would historically have had a toolbar PageRank of 9 or 10), it does make you think.

What’s for sure, though, is that leveraging digital PR as a tactic to earn links from news publications is incredibly valuable. This finding only reinforces that.

7. Links coming from seed sites, or from sites linked to from these, are probably the most valuable links you could earn

Seed sites and link distance ranking is a topic that doesn’t get talked about anywhere near as often as it should, in my opinion.

It’s nothing new, though. In fact, it’s something that the late Bill Slawski wrote about in 2010, 2015 and 2018.

The leaked Google documentation suggests that PageRank in its original form has long been deprecated and replaced by PageRank-NearestSeeds, as the documentation defines this as the production PageRank value to be used. This is perhaps one of the points on which the documentation is clearest.

If you’re unfamiliar with seed sites, the good news is that it isn’t a massively complex concept to understand.

Slawski’s articles on this topic are probably the best reference point for this:

“The patent provides 2 examples [of seed sites]: The Google Directory (It was still around when the patent was first filed) and the New York Times. We are also told: ‘Seed sets need to be reliable, diverse enough to cover a wide range of fields of public interests & well connected to other sites. In addition, they should have large numbers of useful outgoing links to facilitate identifying other useful & high-quality pages, acting as ‘hubs’ on the web.’

“Under the PageRank patent, ranking scores are given to pages based upon how far away they might be from those seed sets and based upon other features of those pages.” – Bill Slawski, PageRank Update (2018)
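The “how far away” part of that idea can be illustrated with a multi-source breadth-first search over a link graph. This is a deliberately simplified Python sketch: it counts unweighted link hops only, whereas the patent Slawski describes also factors in other features of pages, and the leak doesn’t reveal how PageRank-NearestSeeds is actually computed. The `seed_distances` function, the link graph and the seed set are all hypothetical.

```python
from __future__ import annotations

from collections import deque


def seed_distances(links: dict[str, list[str]], seeds: set[str]) -> dict[str, int]:
    """Return the fewest link hops from any seed page to each reachable page.

    Pages that cannot be reached from a seed are omitted.
    """
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            # In breadth-first order, the first visit is the shortest hop count
            if target not in distance:
                distance[target] = distance[page] + 1
                queue.append(target)
    return distance


# Hypothetical link graph: each page maps to the pages it links out to
links = {
    "seed.example": ["news.example", "blog.example"],
    "news.example": ["shop.example"],
    "blog.example": ["shop.example"],
    "shop.example": [],
    "orphan.example": ["shop.example"],
}
print(seed_distances(links, {"seed.example"}))
```

In this toy graph, orphan.example links out to shop.example but no seed path reaches it, so it gets no distance at all. Under this simplified model, a link from a page one hop from a seed sits much closer to the trusted core of the web than a link from a page several hops away.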

8. Google is probably using ‘trusted sources’ to calculate whether a link is spammy

When looking at the IndexingDocjoinerAnchorSpamInfo module, one that we can assume relates to how spammy links are processed, we see references to “trusted sources.”

It looks like Google can calculate the probability of link spam based on the number of trusted sources linking to a page.

We don’t know what constitutes a “trusted source,” but looked at holistically alongside our other findings, it’s plausible that this is based on the homepage trust described above.

Can links from trusted sources effectively dilute spammy links?

It’s definitely possible.

9. Google is probably identifying negative SEO attacks and ignoring these links by measuring link velocity

The SEO community has long been divided over whether negative SEO attacks are a real problem. Google is adamant they’re able to identify such attacks, while plenty of SEOs have claimed their sites were negatively impacted by them.

The documentation gives us some insight into how Google attempts to identify such attacks, including attributes that consider:

- The timeframe over which spammy links have been picked up.

- The average daily rate of spam discovered.

- When a spike started.

It’s possible that this also considers links intended to manipulate Google’s ranking systems, but the reference to “the anchor spam spike” suggests that this is the mechanism for identifying significant volumes of spammy links, a common feature of negative SEO attacks.

There are likely other factors at play in determining how links picked up during a spike are ignored, but we can at least start to piece together the puzzle of how Google is trying to prevent such attacks from having a negative impact on sites.
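To show how the three kinds of attributes listed above could fit together, here is a minimal Python sketch that takes hypothetical daily counts of newly discovered spammy links and reports the timeframe covered, the average daily rate and the day a spike started. The trailing seven-day baseline, the 3× multiplier and all of the names are my own assumptions for illustration; the documentation names the attributes but not how they’re calculated.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class SpamSpikeReport:
    timeframe_days: int              # number of days of data observed
    avg_daily_rate: float            # mean spammy links discovered per day
    spike_start_day: Optional[int]   # index of the first day flagged as a spike, if any


def detect_spam_spike(daily_counts: list[int], baseline_days: int = 7, multiplier: float = 3.0) -> SpamSpikeReport:
    """Flag the first day whose count exceeds `multiplier` times the trailing average.

    All thresholds are hypothetical; nothing here reflects Google's real values.
    """
    if baseline_days < 1:
        raise ValueError("baseline_days must be at least 1")
    if not daily_counts:
        return SpamSpikeReport(0, 0.0, None)
    avg_rate = sum(daily_counts) / len(daily_counts)
    spike_start = None
    for day in range(baseline_days, len(daily_counts)):
        baseline = sum(daily_counts[day - baseline_days:day]) / baseline_days
        # Floor the baseline at 1 so a handful of links on a previously quiet site isn't flagged
        if daily_counts[day] > multiplier * max(baseline, 1.0):
            spike_start = day
            break
    return SpamSpikeReport(len(daily_counts), avg_rate, spike_start)


# A week of background noise followed by a sudden burst of spammy links
report = detect_spam_spike([1, 0, 2, 1, 1, 0, 1, 40, 55, 60])
print(report)
```

In this sketch, links discovered on or after `spike_start_day` would be the natural candidates to ignore, in line with the idea that links picked up during a spike are discounted rather than counted against the target site.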

10. Link-based penalties or adjustments can likely apply either to some or all of the links pointing to a page

It seems that Google has the ability to apply link spam penalties or ignore links on a link-by-link or all-links basis.

This could mean that, given one or more unconfirmed signals, Google can define whether to ignore all links pointing to a page or just some of them.

Does this mean that, in cases of excessive link spam pointing to a page, Google can opt to ignore all links, including those that would generally be considered high quality?

We can’t be sure. But if this is the case, it could mean that spammy links are not the only ones ignored when they are detected.

Could this negate the impact of all links to a page? It’s definitely a possibility.

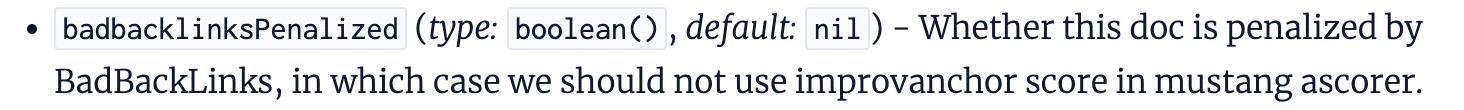

11. Toxic links are a thing, despite Google saying they aren’t

Just last month, Mueller stated (again) that toxic links are a made-up concept:

- “The concept of toxic links is made up by SEO tools so that you pay them regularly.”

In the documentation, though, we see a reference to “BadBackLinks.”

The information given here suggests that a page can be penalized for having “bad” backlinks.

While we don’t know what form this takes or how close this is to the toxic link scores given by SEO tools, we’ve got plenty of evidence to suggest that there is at least a boolean (true or false) measure of whether a page has bad links pointing to it.

My guess is that this works in conjunction with the link spam demotions I talked about above, but we don’t know for sure.

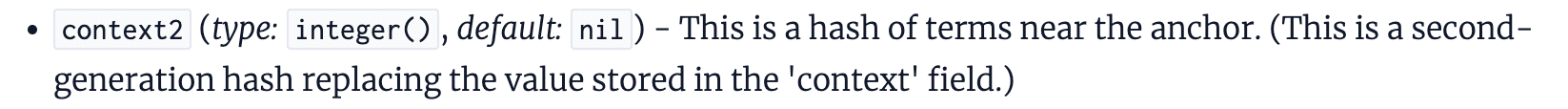

12. The content surrounding a link gives context alongside the anchor text

SEOs have long leveraged the anchor text of links as a way to give contextual signals of the target page, and Google’s Search Central documentation on link best practices confirms that “this text tells people and Google something about the page you’re linking to.”

But last week’s leaked documents indicate that it’s not just anchor text that’s used to understand the context of a link. The content surrounding the link is likely also used.

The documentation references context2, fullLeftContext, and fullRightContext, which are the terms near the link.

This suggests that more than a link’s anchor text is used to determine its relevancy. On one hand, the surrounding terms could simply be used to remove ambiguity; on the other, they could be contributing to the weighting.

This feeds into the general consensus that links from within relevant content are weighted far more strongly than those within content that’s not.
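As a rough illustration of what “terms near the link” could mean in practice, this Python sketch pulls a fixed number of words from either side of an anchor’s text within a passage. The `anchor_context` function, its simple word tokenizer and its five-word default window are hypothetical; the documentation names fullLeftContext and fullRightContext but doesn’t say how much text they capture or how it’s tokenized.

```python
from __future__ import annotations

import re


def anchor_context(text: str, anchor: str, window: int = 5) -> tuple[list[str], list[str]]:
    """Return up to `window` words immediately left and right of the first occurrence of `anchor`."""
    start = text.find(anchor)
    if start == -1:
        raise ValueError(f"anchor text {anchor!r} not found")
    # Simple word tokenizer; punctuation is dropped
    left_words = re.findall(r"\w+", text[:start])
    right_words = re.findall(r"\w+", text[start + len(anchor):])
    # Slice with max() so a window of 0 returns no words rather than the whole list
    left = left_words[max(0, len(left_words) - window):]
    right = right_words[:max(0, window)]
    return left, right


passage = "Our guide to baking sourdough bread at home covers starters and flour choices."
print(anchor_context(passage, "sourdough bread", window=3))
```

Two links with identical anchor text, say “click here,” would yield very different context words depending on the paragraph they sit in, which is one way surrounding content could help disambiguate or weight a link.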

Key learnings & takeaways for link builders and digital PRs

Do links still matter?

I’d certainly say so.

There’s an awful lot of evidence here to suggest that links are still significant ranking signals (despite us not knowing what is and isn’t a ranking signal from this leak), but also that not all links are treated equally.

Links that Google rewards or does not ignore are more likely to positively influence organic visibility and rankings.

Maybe the biggest takeaway from the documentation is that relevancy matters a lot. It is likely that Google ignores links that don’t come from relevant pages, making this a priority measure of success for link builders and digital PRs alike.

But beyond this, we’ve gained a deeper understanding of how Google potentially values links and the things that could be weighted more strongly than others.

Should these findings change the way you approach link building or digital PR?

That depends on the tactics you’re using.

If you’re still using outdated tactics to earn lower-quality links, then I’d say yes.

But if your link acquisition tactics are based on earning links with PR tactics from high-quality press publications, the main thing is to make sure you’re pitching relevant stories, rather than assuming that any link from a high authority publication will be rewarded.

For many of us, not much will change. But it’s concrete confirmation that the tactics we’re relying on are the best fit, and it explains why we see PR-earned links having such a positive impact on organic search success.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Category seo news | Tags:

Cut through inbox clutter with personalized, high-impact email by Edna Chavira

Written on June 4, 2024 at 3:43 pm, by admin

It’s harder than ever to capture your audience’s attention in an increasingly crowded inbox. With email volumes rising and click-through rates declining, creating personalized, engaging content that resonates with your audience is essential.

That’s where the power of CDP-ESP integration comes in. By leveraging the combined strength of your customer data platform (CDP) and email service provider (ESP), you can create targeted, high-impact emails that drive results.

MarTech’s upcoming webinar, “Turn Your Customer Insights into Personalized, High-Impact Email,” will show you how integrating your CDP and ESP can help you overcome these challenges and take your email marketing to the next level. Register now!

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Category seo news | Tags:

Instagram tests unskippable video ads in main feed

Written on June 3, 2024 at 12:42 pm, by admin

Instagram is experimenting with a new ad format that prevents users from scrolling until they view a video ad in their main feed.

Why it matters. The move could significantly boost ad exposure for brands but risks alienating users who find forced viewing intrusive.

How it works.

- New in-feed ads display with a timer.

- Users can’t scroll past until the timer runs down.

- Essentially “unskippable,” like some YouTube ads.

Why we care. On one hand, this feature is a great opportunity for advertisers to get their ads in front of an audience that is used to seeing ads. On the other, could it come at the risk of losing users who find their scrolling experience significantly disrupted?

The big picture. With half of users’ feeds now AI-recommended content from unfollowed profiles, Instagram sees an opportunity to blend in more ads without seeming overly disruptive.

Ad blockers. YouTube’s unskippable ads are often cited as a top reason people use ad blockers, suggesting forced viewing is deeply unpopular.

Between the lines. Instagram’s shift to Reels-heavy, algorithm-driven feeds may be paving the way for more aggressive ad strategies.

What they’re saying. A Meta spokesperson told TechCrunch:

- “We’re always testing formats that can drive value for advertisers. As we test and learn, we will provide updates should this test result in any formal product changes.”

The other side. Users are not thrilled. Photographer Dan Levy shared an example, sparking backlash in the comments.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Category seo news | Tags: