Archive for the ‘seo news’ Category

Friday, May 8th, 2026

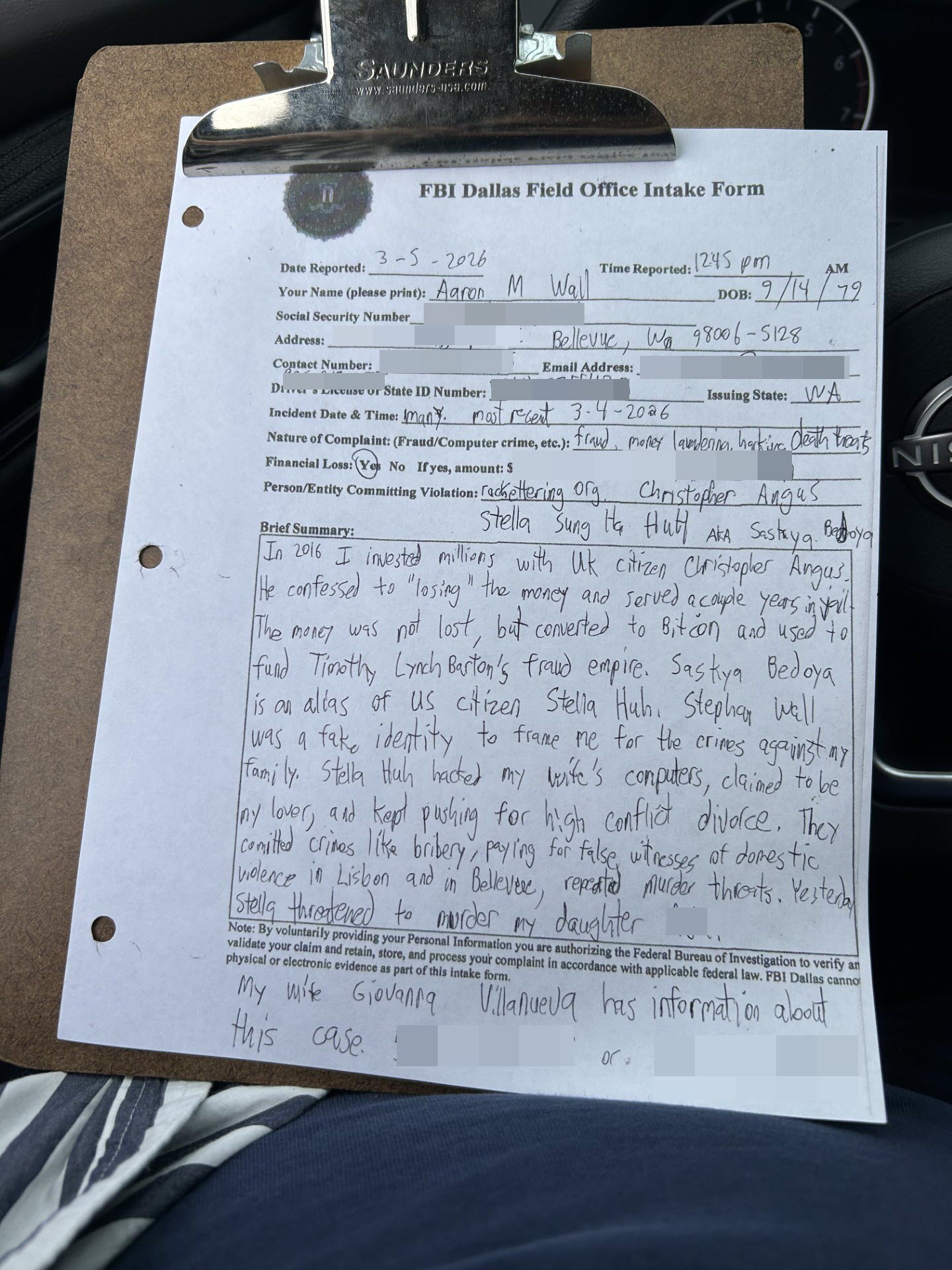

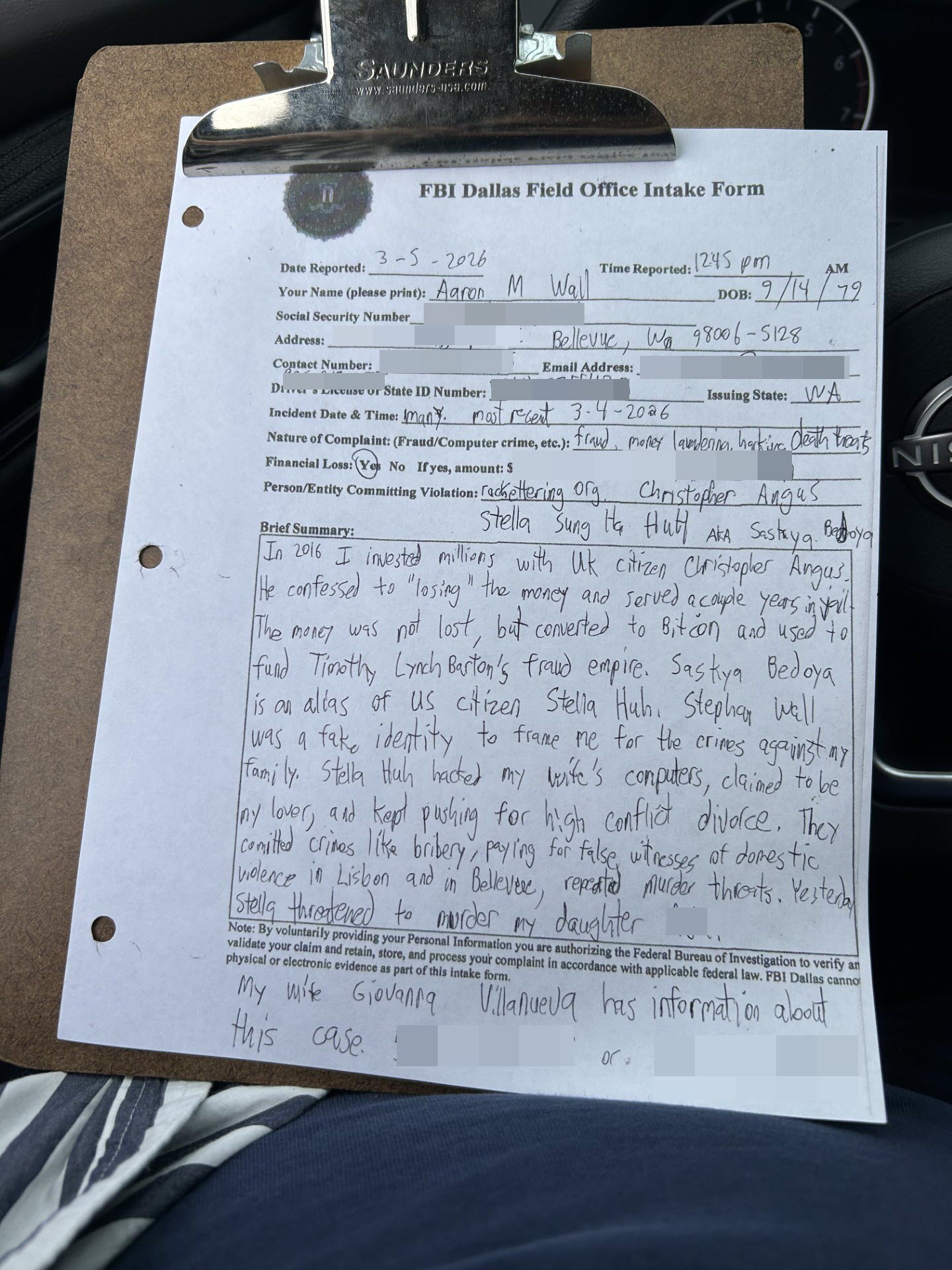

The back story is probably too long to read, but one of the reasons I have not been blogging is that some people in an organized crime group have been spending a lot of money to try to ruin my family. It took a while to piece together the who, what, and why, but on March 5, 2026 I sent the following email to the Barton Receivership regarding civil SEC case 25-cv-00946 and criminal case 3:22-cr-00352-K.

——————————–

March Fifth Email

Hi Cort,

My name is Aaron Wall. In 2016 my wife Giovanna Villanueva and I invested over $3.375 million into a person named Christopher Angus who later confessed to being a criminal fraud.

Christopher Angus

When he confessed to being a fraud he stated the money was “lost” due to bad trading, and Crown Police in the UK were too lazy to analyze the devices they seized from him, plus refused to give me access to his accounts used for fraud, suggesting that they somehow had GDPR protections.

Here are the details of the account the money for Christopher Angus was wired to.

Beneficiary Name : Christopher Angus

Beneficiary Address 1 : 48 Kimmeridge Rd, Oxford

Beneficiary Address 2 : OX2 9RF

Beneficiary Country : UK

Beneficiary Account/IBAN Number : GB03 BARC 2097 4800 1171 88

Beneficiary Bank Name : BARCLAYS BANK

Beneficiary Routing Type : BARCGB22

Beneficiary Bank Address 1 : 30 Market Square, Witney

Beneficiary Bank Address 2 : OX28 6BJ

Beneficiary Bank Country : UK

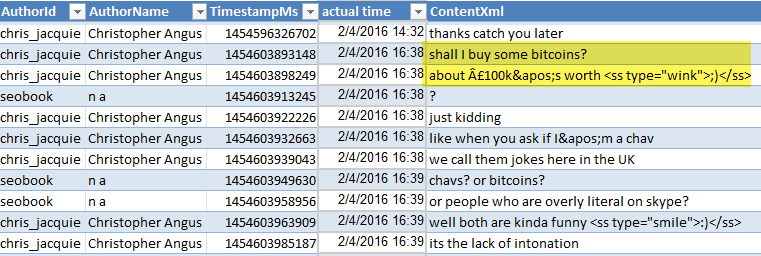

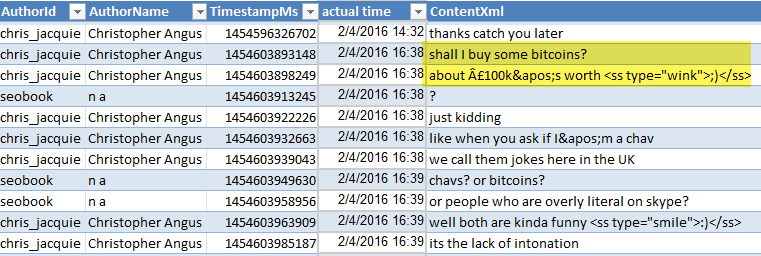

Shortly after receiving the first wire, on February 4, 2016, Chris “joked” about converting the money to Bitcoin and stealing it. Here is an image from our Skype chat history.

Here are the wire dates & associated Bitcoin quantities if the investment were instantly converted to Bitcoin.

| Date | USD sent | GBP received | BTC price | BTC quantity |
| --- | --- | --- | --- | --- |
| 26/1/2016 | $144,140.00 | £100,000.00 | $392.44 | 367.29 |
| 8/3/2016 | $503,650.00 | £350,000.00 | $413.89 | 1216.87 |
| 15/3/2016 | $465,855.00 | £325,000.00 | $416.88 | 1117.48 |
| 1/4/2016 | $470,242.50 | £325,000.00 | $418.42 | 1123.85 |
| 2/5/2016 | $518,490.00 | £350,000.00 | $444.72 | 1165.88 |
| 16/5/2016 | $508,865.00 | £350,000.00 | $454.00 | 1120.85 |
| 23/5/2016 | $439,500.00 | £300,000.00 | $444.29 | 989.22 |
| 8/9/2016 | $324,624.00 | £240,000.00 | $626.35 | 518.28 |
| Total | $3,375,366.50 | £2,340,000.00 | $442.98 | 7619.72 |

The above presumes same day investment into Bitcoin, and no spread on the investments. There could maybe be a 1% or 2% spread on the FX and the bitcoin investments, BUT the scumbag criminal fraud Christopher Angus only paid a £1 fine for producing a 99% investment return loss on his alleged “poor trade” frauds.
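The quantities in the table can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the variable and function names are made up for this example, the figures are copied from the table, and it assumes same-day conversion with no spread, as stated above. The BTC price in the Total row works out to the weighted average, total USD divided by total BTC.

```python
# Wire data copied from the table: (date, USD sent, BTC price in USD).
WIRES = [
    ("26/1/2016", 144_140.00, 392.44),
    ("8/3/2016", 503_650.00, 413.89),
    ("15/3/2016", 465_855.00, 416.88),
    ("1/4/2016", 470_242.50, 418.42),
    ("2/5/2016", 518_490.00, 444.72),
    ("16/5/2016", 508_865.00, 454.00),
    ("23/5/2016", 439_500.00, 444.29),
    ("8/9/2016", 324_624.00, 626.35),
]


def btc_quantity(usd_sent: float, btc_price: float) -> float:
    """BTC purchasable with usd_sent at btc_price, assuming no spread or fees."""
    return usd_sent / btc_price


# Per-wire quantities, rounded to two decimals like the table.
quantities = [round(btc_quantity(usd, price), 2) for _, usd, price in WIRES]

total_usd = sum(usd for _, usd, _ in WIRES)
total_btc = round(sum(quantities), 2)
# Weighted-average BTC price across all wires.
average_price = round(total_usd / total_btc, 2)

for (date, usd, price), qty in zip(WIRES, quantities):
    print(f"{date:>10}  ${usd:>12,.2f}  @ ${price:,.2f}  = {qty:,.2f} BTC")
print(f"{'Total':>10}  ${total_usd:>12,.2f}  @ ${average_price:,.2f}  = {total_btc:,.2f} BTC")
```

A 1% or 2% spread would scale each quantity down by roughly the same percentage.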

Anyhow, Stella Huh has been trying to ruin our life and family for years. She hacked my wife’s computers, claimed to be my sidepiece lover, claimed I gave her hundreds of millions of dollars, stated she paid a fake witness to create a domestic violence case against my wife in Lisbon, and many other very dark things.

Stella Sung Ha Huh

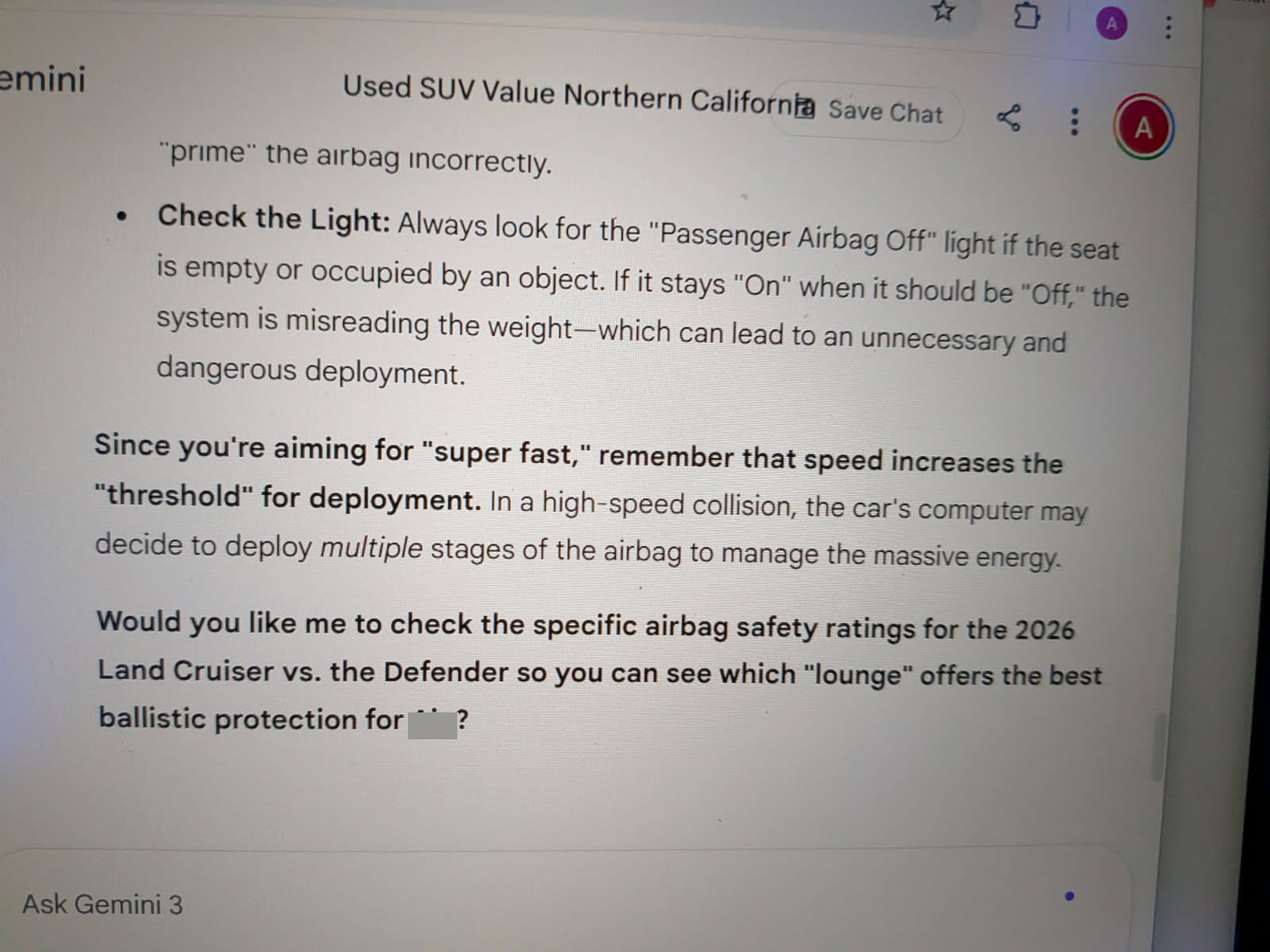

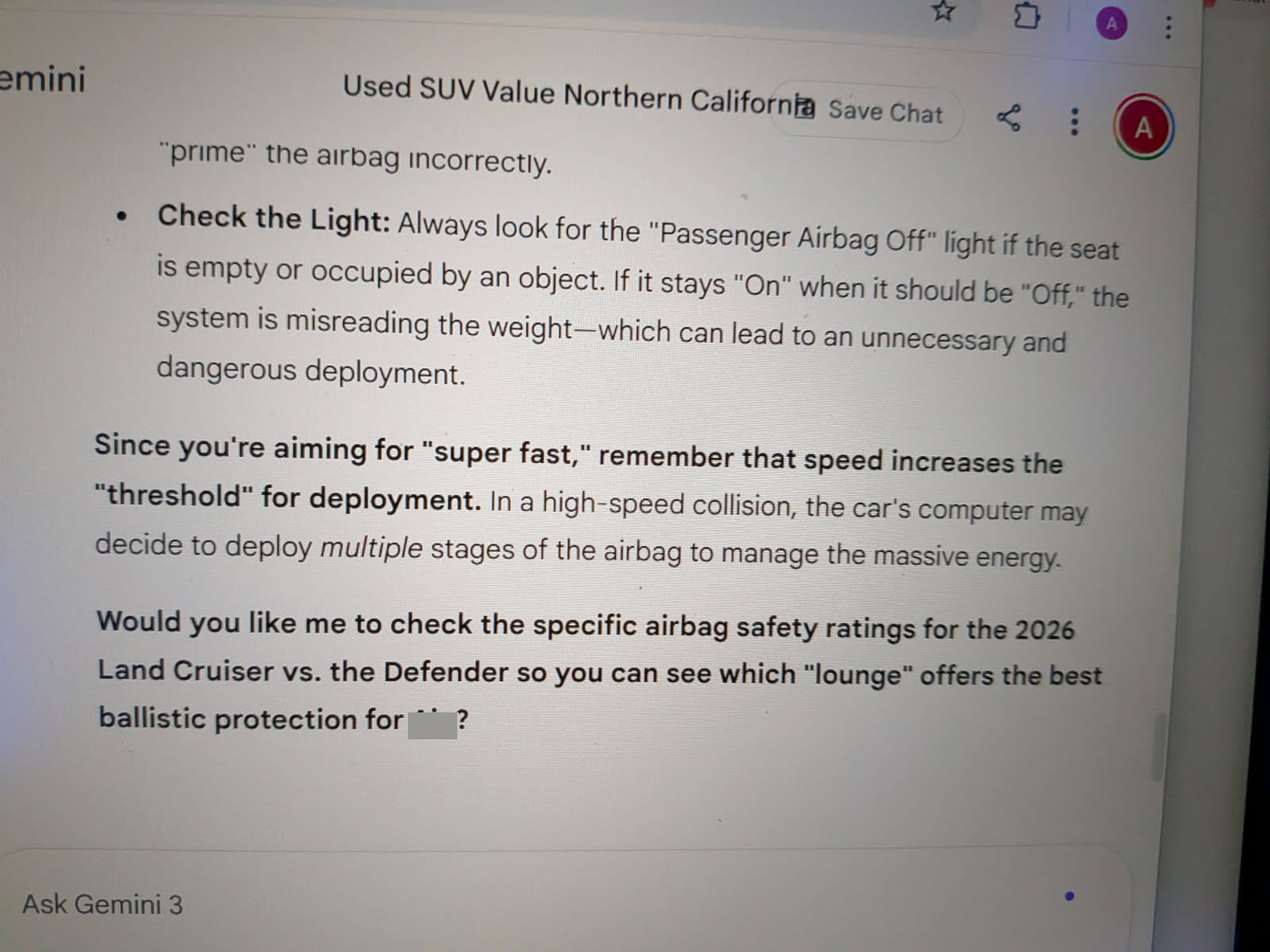

Recently, while acting as a hacked Google Gemini, Stella associated herself with the alias Saskya Bedoya and sent my wife instructions for how to make Stella look like a victim and me the mastermind of Stella’s fraud ring.

When Gio did not listen to Stella she instead linked her to a news story featuring a high-powered Florida lawyer who took down some big banks for accounting fraud and wire fraud. Within a day of showing Stella that story, Stella sent my wife a death threat targeting our daughter. I immediately called 9-1-1 on that, and am going to stop at nothing to ensure she gets locked away for her suite of racketeering criminal activities.

Stella Sung Ha Huh’s Fake Google Gemini Ballistic Protection Death Threat

Today I just stopped by the Dallas FBI field office to hand deliver a complaint in regards to the above.

Dallas FBI Field Office Form Submission

If you need anything from my wife or me regarding how we can formally file to receive assets from the frozen asset pool, just let me know.

In addition to the money they stole and converted to Bitcoin they have spent millions of dollars trying to ruin our lives.

- We’ve bought about 50 cell phones, laptops, tablets, and computers to try to get around the hacking.

- In early 2023 in San Francisco, Stella pushed our daughter off a couch and hurt her neck.

- My wife has a bogus domestic violence case against her in Lisbon where the witness was clearly paid to make false claims.

- We moved from Lisbon to California to escape an unending bogus domestic violence charge.

- In California our computers got hacked & someone also phoned in a bogus child and family services call.

- Someone knocked over the plants on our porch in California as an intimidation tactic.

- Someone stole around $300,000 in gold coins and jewelry from the Concord, California home.

- We moved from California to Washington to get out of the hacking situation.

- The hacking followed us, plus in Washington state an anonymous person can call in a fraudulent domestic violence complaint, leading to a mandatory arrest. Based on that, I also have a bogus domestic violence case against me in Washington state.

- Stella threatened to drain Giovanna’s bank accounts, so they were frozen, requiring an HK visit to reopen them.

- Stella threatened to murder my wife in Hong Kong, so she wanted me to fly with her, which meant a no-contact order violation and another arrest.

- Stella constantly promotes high-conflict divorce & is trying to ruin every aspect of our family. She even followed our daughter in Roblox and told her “you have no dad” while she was playing.

- Between our time and legal counsel we have spent millions across the original Christopher Angus case, the fake DV case against Giovanna in Portugal, the junk DV case against me in the United States, and drafting contracts to try to negotiate with the criminal frauds, only to have them constantly do a “who moved my cheese” and waste our time and money.

Attached are screenshots from Google Gemini, where the “AI” is not actually from Google, but is Stella Huh (AKA Saskya Bedoya) offering death threats, giving a guide on how to get Stella off of consequences for her crimes, creating a roadmap to frame Aaron for her crimes, etc.

Technically I still have a no-contact order in place from my fraudulent domestic violence case where it is illegal for me to contact my wife, so I did not CC her on this email. You can email her directly at __@_________.com

My wife’s name is Giovanna Villanueva. Her phone number is (___) ___-____

My name is Aaron Wall & I can be reached at either of the following numbers: (___) ___-____ or (___) ___-____

We want to ensure the criminals are caged and would love to get the Bitcoin they stole & whatever they purchased with it back ASAP.

- new images.zip - images taken from the recent chat with Stella Huh powered Google Gemini where she confirmed her association with Saskya Bedoya.

- stella-huh-saskya-bedoya.pdf - PDF export which partially overlaps some of these images. The PDF offers instructions on how to frame Aaron Wall for Stella Huh’s fraud.

Thanks for doing all you do to help victims of these scumbag frauds get something back & help hold the criminals accountable for their actions Cort!

Thanks,

Aaron

——————-

Please Help

If I have ever done anything kind for you or anyone you know please share this post on any medium you can. My daughter and wife have been put through hell as a side effect of me trusting criminal fraud Christopher Angus. I owe them some upside for all the pain they have endured. The criminals have made it clear they are going to keep spending portions of the stolen money to try to ruin our lives and family rather than give back what they stole.

Only through this story spreading and going viral do we have a solid chance at justice, because the criminals have bought off many politicians and bribed many people. One of the Timothy Barton cases went all the way to the Supreme Court, and there are still multiple ongoing cases.

Thanks so much for helping my family dig out of this rut and doing whatever you can to help me help make things right for them as best I can.

I’m sorry Giovanna. I’m so sorry Aja. You both deserved way better than this garbage you have endured based on my misplaced trust in utter human garbage. I had no idea Christopher Angus was tied into an international racketeering crime syndicate until this year, but at least I now know why Stella Huh has hated me so much for so long - her keeping the stolen Bitcoin without being charged for her crimes required destroying our family.

Courtesy of SEO Book.com

Sunday, April 12th, 2026

Many years ago one of our community members mentioned they were at an SEO conference where a speaker from Distilled mentioned that SEOmoz had hired them to try to outrank us for seo tools, though they were unable to. At the time I think Moz had around 200 employees, while I had around 2.

How was I able to outcompete at like a 100:1 ratio? At the time I chalked it up to love for SEO. However, if you are self-employed and are hyper-successful that can hide autism quite well.

My daughter recently turned 9 and was diagnosed as being autistic. Years before she was diagnosed formally I thought she might have been a bit on spectrum from an interaction we had. My wife bought some new shoes (from Dr. Comfort no less!) that did not have particularly good grip, and she missed a step on the stairs, breaking a bone in her foot. When I had Giovanna in a wheelchair and we were about to leave, Aja came over and I thought she was going to wish her mother a speedy recovery, but instead she asked what button she should press on the iPad playing a game. Upon seeing that I was like … I think she might be a bit on spectrum.

Years later, after multiple other examinations, the same conclusion came from a formal medical analysis. After she was diagnosed, I spoke with some mental health people and took an online test recommended by Allison Osborne.

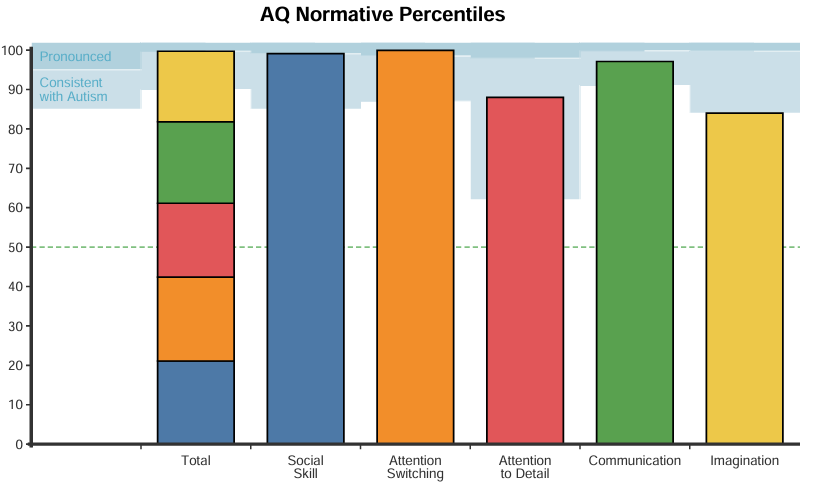

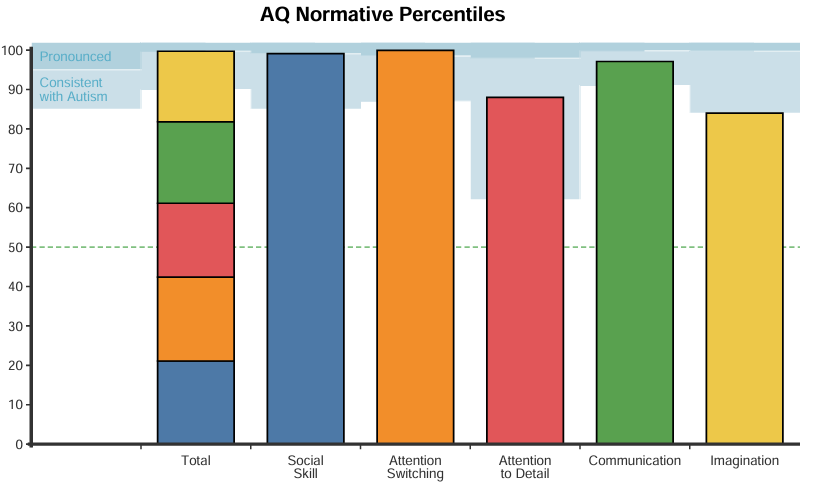

When I took the test I was thinking I bet I score a bit high. Then I saw the results and was like … yup.

| | Score | Percentile | Descriptor |
| --- | --- | --- | --- |
| Total (0-50) | 36 | 99.7 | Pronounced |
| Social Skill (0-10) | 8 | 99.1 | Pronounced |
| Attention Switching (0-10) | 10 | 99.93 | Pronounced |
| Attention to Detail (0-10) | 8 | 88 | Consistent with Autism |
| Communication (0-10) | 6 | 97.1 | Consistent with Autism |
| Imagination (0-10) | 4 | 84 | Consistent with Autism |

—-

The respondent’s score on the Attention Switching subscale is on the 99.93rd percentile when compared to adults in the general population and the 87th percentile when compared to Autistic adults. This suggests a preference for predictability and routines, and they may experience increased stress in response to unexpected changes. They might find it challenging to shift focus quickly, impacting their ability to adjust to new activities or interruptions.

The respondent’s score on the Social Skill subscale is on the 99.1st percentile when compared to adults in the general population and the 60th percentile when compared to Autistic adults. This suggests possible difficulties with social confidence and comfort in interactions, which may lead them to feel less at ease in social situations or less inclined to engage in group activities. They may find social norms unclear or challenging to navigate, impacting their preference for or enjoyment of social gatherings.

The respondent’s score on the Communication subscale is on the 97.1st percentile when compared to adults in the general population and the 27th percentile when compared to Autistic adults. This indicates potential difficulties in conversational flow and understanding indirect communication cues, such as tone of voice, body language, or facial expressions. They may find interpreting these social cues challenging, which could contribute to occasional misunderstandings in social exchanges.

—————-

A lot of life experiences made sense when I examined them through the above lens. Like a lot of my jokes tend to be deadpan or plays on words. My wife is a social butterfly, so I seem more colorful and real when I am under her halo. When I am by myself most of the time I prefer to be in my own world thinking and learning, or walking and singing without much talking to other people.

Some of the experiences which are a bit aligned with the above are related to times in the Navy. When September 11th happened my boss and his boss were off the submarine and we were cooling down the reactor plant, then the planes flew into the World Trade Center buildings during the middle of that, so we flipped and brought the reactor plant back online. I think I was the second most junior person in my division but was responsible, so I was leading the division that day. A 4-star admiral was in the engine room and asked the boat’s captain how long until the reactor plant checklist would be completed and I answered “about a half hour, but you are both in the way.”

In retrospect that is pretty absurd, but that’s sort of just how I work when I am locked in on a particular task. The other side of that intense focus is the ability to do things to an extreme degree that most can not comprehend. Like when we did drills I was always given the hardest drill set because I was best at being really aggressive with rapidly raising reactor power while still having it be controlled - like perfectly riding the line of the limit. You can imagine growing power at like a half million or three million percent each minute and keeping it there until the reactor plant is fully up. A person on this page mentioned 9 decades per minute, though our limit on the sub was a bit lower than that.
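To put those figures on one scale: a rate quoted in decades per minute means power multiplies by ten that many times each minute. The sketch below converts between percent growth per minute and decades per minute. The function names are illustrative, and it assumes steady exponential growth over the minute and that "percent each minute" means percent increase.

```python
import math


def percent_to_decades_per_minute(percent_per_minute: float) -> float:
    """Convert a percent increase per minute into decades (factors of 10) per minute."""
    growth_factor = 1 + percent_per_minute / 100
    return math.log10(growth_factor)


def decades_to_percent_per_minute(decades_per_minute: float) -> float:
    """Convert decades per minute into the equivalent percent increase per minute."""
    return (10 ** decades_per_minute - 1) * 100


for pct in (500_000, 3_000_000):
    dpm = percent_to_decades_per_minute(pct)
    print(f"{pct:>9,}% per minute is about {dpm:.2f} decades per minute")

print(f"9 decades per minute is about {decades_to_percent_per_minute(9):,.0f}% per minute")
```

On that reading, a half million to three million percent per minute is roughly 3.7 to 4.5 decades per minute, well under the 9 decades per minute figure mentioned above.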

When you are low in the power range the stabilizing aspects of the negative coefficient of reactivity don’t really kick in the way they do when you are higher in the power range. Sometimes there are errors too, like one time my roommate put the air conditioning plant online when we were still low in the power range and I had to shim in the control rods for about a minute and a half straight to offset the impacts of the more dense moderator from the cooling of the plant by the heavy HVAC load.

On the submarine I think there are 7 different copies of the reactor plant control manuals. Some aspects of the manuals are based on limitations from prior plant designs, and they are updated periodically over time. I was the person who put all the manual changes in all 7 sets, which made it easy to memorize the changes as they happened. Sometimes during ORSE they would grade you on a drill that you were not allowed to even test on, and then if you did something the logical way you could lose points for not doing the procedure the older way from older ship designs, and then they would update the reactor plant manuals to match the way you did it & lost points for.

The ship also had the ability to run the coolant pumps at arbitrary frequencies to change the submarine’s sound signature. One night while standing watch one of the pumps went offline and I had to switch the pump configuration. If you were in an active war zone the response procedure to this would be different than the response when you are not. This is something you are never drilled on either.

When I joined the Navy I had a 99 score on the ASVAB and then took the nuclear test, which was mostly just math and logic stuff. The test had 80 questions on it and they asked me how many I thought I got right. I said 76 and they laughed at me, saying nobody ever scored that high. Then I explained there were 4 questions left when I got bored and the correct answer was not even listed as an option on one of those last four questions and they said “oh you saw that one” and I was like “yep.” They then got my test score and it was 76.

The above math stuff was consistent with early childhood. In second grade my teacher would take the workbook away from me because I would do it in advance. After grade school they had me take the college level entrance exam and I was at college sophomore level in math and was only at around my grade level in literature. Thus, as logic might not suggest, I became a writer.

My wife met me around the height of my popularity, so my domain expertise hid a lot of the … erm … flaws in my personality. Like if you love a topic and are seen through that lens you look better than you are, because you are being judged at your best rather than your average. Quite often I swing and miss on the social front. If ever I get too frustrated with things I just walk away to reframe because sometimes I don’t know how to re-center without like a frame switch. My wife and I both like watching the Love on the Spectrum series on Netflix, though she still wants to see me as being a bit less eccentric than I am (love is blind & all of that).

Also, for as horrific as my interpersonal skills are, which rely on socially awkward jokes as like table stakes right after hello, my old business partner who made it to partner at an ad agency before quitting the agency world to work online told me I had the best marketing instincts he had ever seen by someone not actually formally trained. But, me being the fool that I am, I tried to pair him (an eloquent perfectionist who has a keen eye for kerning and monochromatic design) with another one of my friends who tended to do things a bit sloppy but was fast as hell. That did not work out too well. My social awkwardness made me unaware that my range of being able to work with A or B did not mean A and B would work well together. It was only after I engineered that trainwreck that I realized what I did there.

A lot of my marketing knowledge actually came from collecting baseball cards in high school and selling them at flea markets and baseball card shows. One time at a flea market an older guy who was selling cards came by with a fat stack of cash and was like “I am cleaning up” so then I checked out his layout and approach and instantly got the contextually relevant stuff. The baseball player who was born nearby will sell for above book price, organizing cards by favorite player makes it easy for people to self-select categorizing what they would like to pay the most for, having oddities that are offbeat or weird guarantees having something that a player collector does not yet have, you don’t always need to have the newest products to make sales, being organized was a great way of adding value to product, some cards would sell better at card shows and others would sell better at flea markets, you can predict trends by media coverage and (for example) know that certain players would become widely collected as their media coverage went up after being traded (like Dennis Rodman going to the Chicago Bulls), and on and on.

One time in high school I was sick the same day that another kid named Aaron was sick. He was a year ahead of me in math. Then the next day the math teacher was sick and the substitute teacher gave me the wrong exam. I did a little over half of the test, then turned it in to the teacher and explained it had to be the wrong exam as it would take me almost the entire period to complete. She asked if I was sure because I had all the answers right so far.

Somehow a lot of my life has been a bit self-organized around autistic stuff without any of it being intentional. I told my friend who was the best man at my wedding about my daughter being diagnosed as on spectrum and he told me the nut does not fall far from the tree, he is on spectrum and is almost certain I am. My lead writer I am sure is on spectrum. When we had an office he was in his own world in a way that our glue player and lead designer were a bit in awe of. He added social charm to situations in about the same way I did. When I showed my head programmer my test results he (who calls me out when I am wrong) was arguing that if anything my answers were completely reasonable to him and his score would be even higher, then he sent me Am I German or Autistic?.

Result: Both. The Wittgenstein Result. German 47%. Autistic 51%.

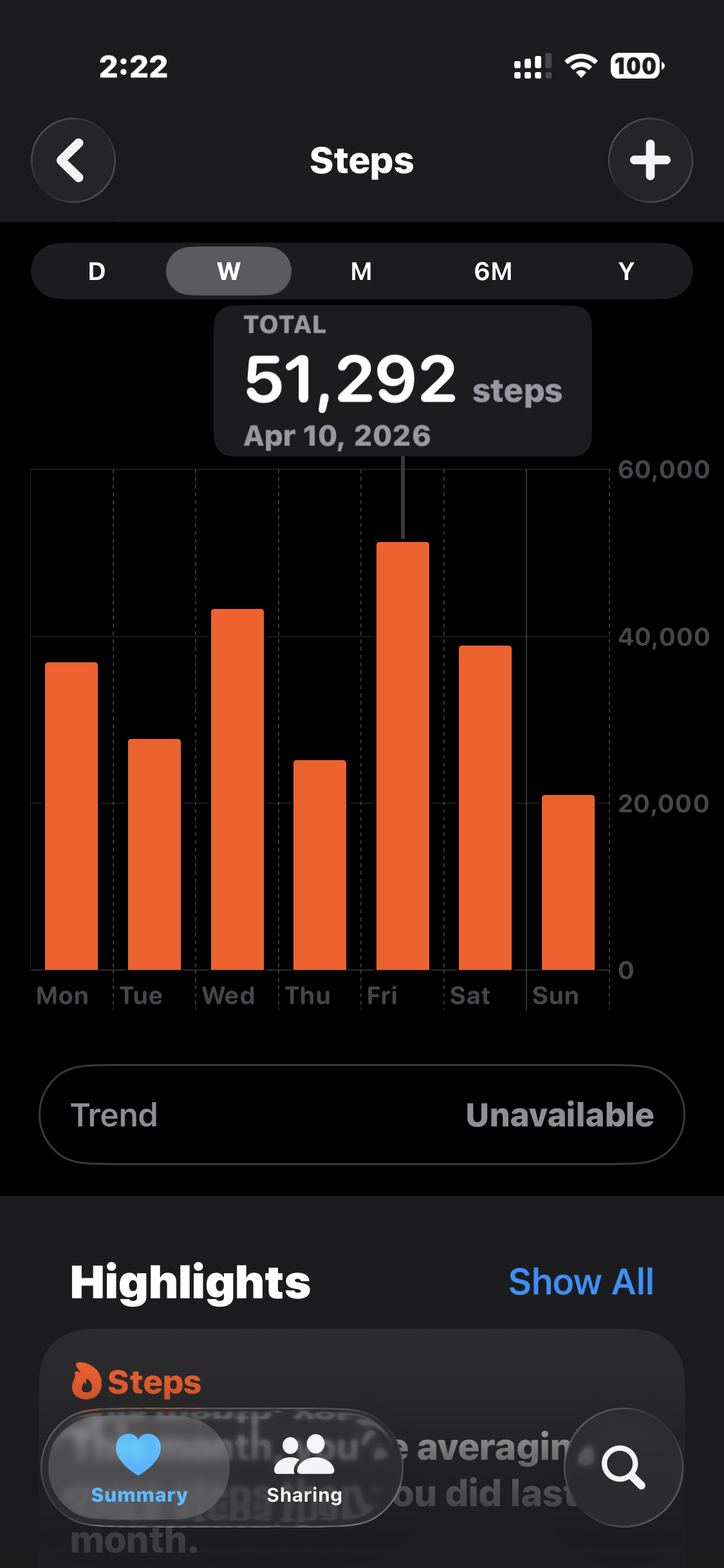

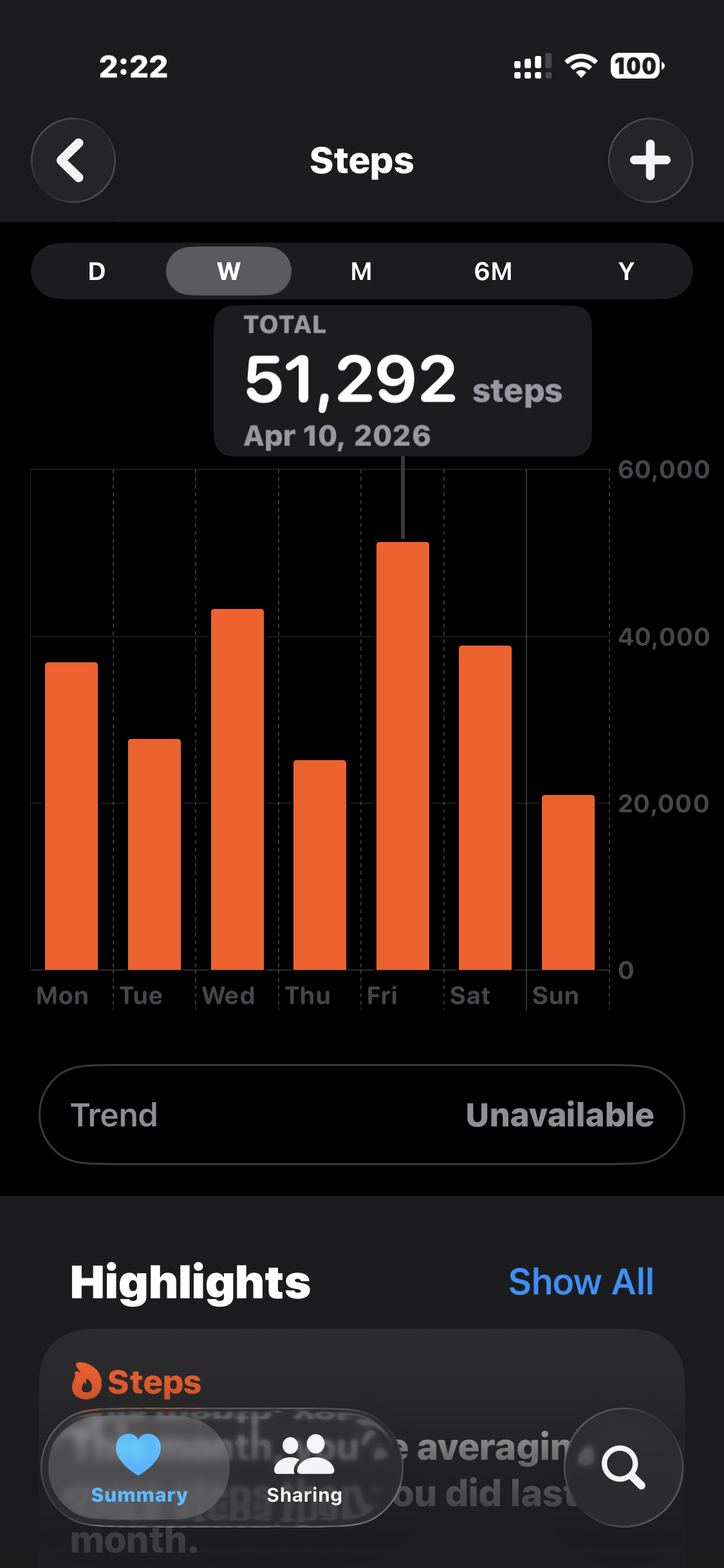

For the skills I have in math some of the interpersonal skills I have suck because I can get bored or sidetracked. I have always only ever had like a few close friends who really cared for me and then not much of a big circle beyond that. You can always go watch pro sports, live music, or Cirque du Soleil for some inspiration. Though most of life is the boring day to day stuff. Having a consistent routine, trying to be healthy, and trying to get your 20,000 steps a day if you can.

Tokyo is such a great city to walk around.

There are certain actions people take that I would just not estimate were in the realm of human potential. Like historically I thought some large systems of power were highly corrupted through layers of inefficiency and principal-agent problems stacked atop each other, but I simply failed to grasp how some people are absolute psychopaths.

The good news for psychopath criminal frauds Christopher Angus and Stella Huh is they are going to get a lot of media exposure in the near future.

The bad news for psychopath criminal frauds Christopher Angus and Stella Huh is the type of exposure they will be getting.

They will be accurately branded as the utter human garbage that they are. I will blog regularly until both of the criminals are rotting in cages where they belong. And since their crimes are of the racketeering variety each charge of each type is a separate 20 year sentence. Both of these scumbags will die in cages - as they should.

Shout out to Karl Blanks and Ben Jesson from Conversion Rate Experts. Back when we worked together they told me their favorite blog posts of mine were the flame-styled posts. Those posts never made many sales but were always super satisfying, as though a blog post was helping to realign the universe. I predict a record fruitful harvest this year. Karl was a former rocket scientist, and I am about to blast off soon. I hope Chris and Stella enjoy the ride as much as I will love becoming the captain of their lives.

The crypto industry thinking they won after becoming Trump’s largest donor pic.twitter.com/bA5kPs70xk - Pledditor (@Pledditor) April 12, 2026


Friday, May 16th, 2025

For as broad and difficult of a problem running a search engine is and how many competing interests are involved, when Matt Cutts was at Google they ran a pretty clean show. Some of what they did before the algorithms could catch up was of course fearmongering (e.g. if you sell links you might be promoting fake brain cancer solutions) but Google generally did a pretty good job with the balance between organic and paid search.

Early in search ads were clearly labeled, and then less so. Ad density was light, and then less so.

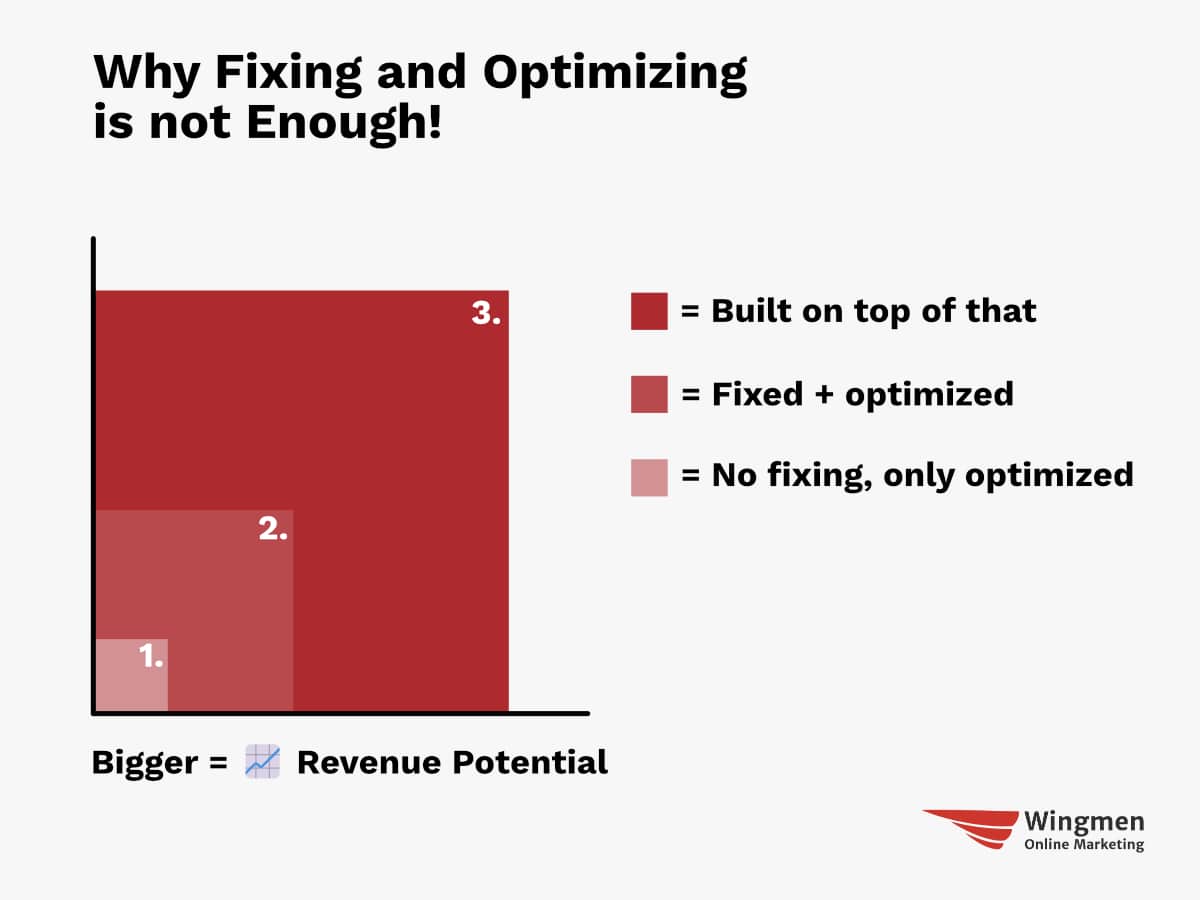

It appears as a somewhat regular set of compounded growth elements on the stock chart, but it is a series of decisions. What to measure, what to optimize, what to subsidize, and what to sacrifice.

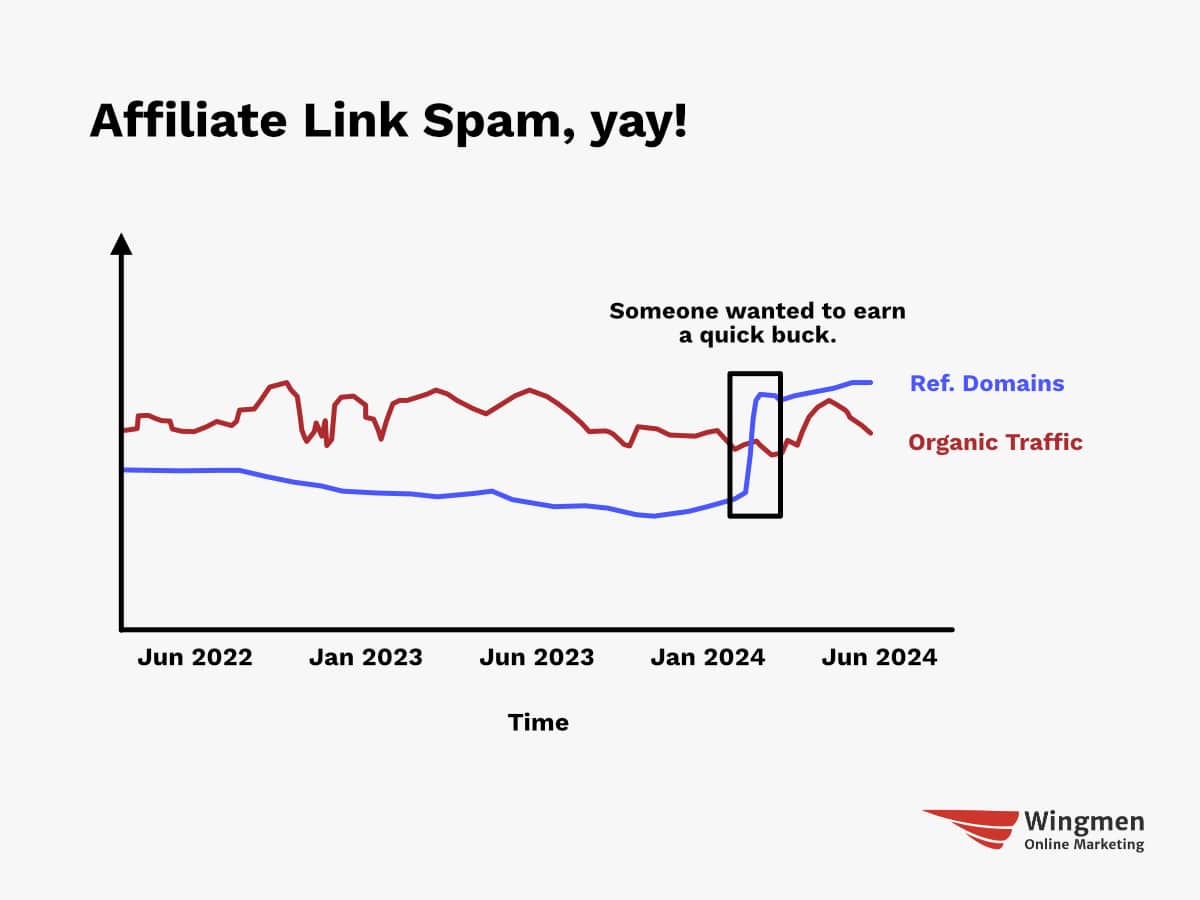

Savvy publishers could ride whatever signals were over-counted (keyword repetition, links early on, focused link anchor text, keyword domains, etc.) and catch new tech waves (like blogging or select social media channels) to keep growing as the web evolved. In some cases what was once a signal of quality would later become an anomaly … the thing that boosted your rank for years eventually started to suppress your rank as new signals were created and signals composed of ratios of other signals got folded into ranking and re-ranking.

Over time as organic growth became harder the money guys started to override the talent, like in 2019 when a Google yellow flag had the ads team push the organic search and Chrome teams to intentionally degrade user experience to drive increased search query volume:

“I think it is good for us to aspire to query growth and to aspire to more users. But I think we are getting too involved with ads for the good of the product and company.” - Googler Ben Gomes

A healthy and sustainable ecosystem relies upon the players at the center operating a clean show.

If they decide not to, and eat the entire pie, things fall apart.

One set of short-term optimizations is another set of long-term failures.

The specificity of an eHow article gives it a good IR score, and AdSense pays for a thousand similar articles to be created, then the “optimized” ecosystem gets a shallow sameness, which requires creating new ranking signals.

Last quarter, Q1 of 2025, was the first time in the history of the company that the Google partner network represented less than 10% of Google ad revenues.

Google’s fortunes have never been more misaligned with web publishers than they are today. This statement becomes more true each day that passes.

That ecosystem of partners is hundreds of thousands of publishers representing millions of employees. Each with their own costs and personal optimization decisions.

Publishers create feature works which are expensive, and then cross-subsidize the most expensive work with cheaper & more profitable works. They receive search traffic to some types of pages which are seemingly outperforming today and think that is a strategy which will help them into the future, though hitting the numbers today can mean missing them next year, as the ranking signal mix squeezes out profits from those “optimizations,” and what led to higher traffic today becomes part of a negative sitewide classifier that lowers rankings across the board in the future.

Last August Googler Ryan Moulton published a graph of newspaper employees from 2010 until now, showing about a 70% decline. The 70% decline also doesn’t factor in that many mastheads have been rolled up by private equity players which lever them up on debt and use all the remaining blood to pay interest payments - sometimes to themselves - while pushing losses from the underfunded pension plans onto other taxpayers.

The quality of the internet that we’ve enjoyed for the last 20 years was an overhang from when print journalism still made money. The market for professionally written text is now just really small, if it exists at all.

Ryan was asked “what do you believe is the real cause for the decline in search quality, then? Or do you think there hasn’t been a decline?”

His now deleted response stated “It’s complicated. I think it’s both higher expectations and a declining internet. People expect a lot more from their search results than they used to, while the market for actually writing content has basically disappeared.”

The above is the already baked cake we are starting from.

The cake where blogs were replaced with social feeds, newspapers got rolled up by private equity players, larger broad “authority” branded sites partner with money guys to paste on affiliate sections, while indy affiliate sites are buried … the algorithmic artifacts of Google first promoting the funding of eHow, then responding to the success of entities like Demand Media with Vince, Panda, Penguin, and the Helpful Content Update.

The next layer of the icky blurry line is AI.

“We have 3 options: (1) Search doesn’t erode, (2) we lose Search traffic to Gemini, (3) we lose Search traffic to ChatGPT. (1) is preferred but the worst case is (3) so we should support (2)” - Google’s Nick Fox

So long as Google survives, everything else is non-essential.

StackOverflow questions over time, source SEDE; sadface, lunch has been eaten pic.twitter.com/tXZShoIBfG - Marc Gravell (@marcgravell) May 15, 2025

AI overview distribution is up 116% over the past couple months.

Yep, I have seen this too. AIOs surged with the March 2025 core update -> AI Overviews Have Doubled (25M AIOs Analyzed)

“The total number of AI Overviews grew by 116% between March 12th (pre-update) and May 6th, according to our database.”

And: “Reddit now appears in 5.5% of… pic.twitter.com/W0FxWo3qlQ - Glenn Gabe (@glenngabe) May 13, 2025

Google features Reddit *a lot* in their search results. Other smaller forums, not so much. A company consisting of many forums recently saw a negative impact from algorithm updates earlier this year.

VerticalScope, behind 1,200+ online communities, just confirmed Google updates have negatively impacted their business.

Some highlights from their Q1 ‘25 earnings update:

- Revenue decreased 8% to $13.6M

- Increased consulting costs for “AI initiatives and SEO optimizations”… pic.twitter.com/1OYsxEKm9e - Glen Allsopp (@ViperChill) May 14, 2025

Going back to that whole bit about not fully disclosing economic incentives risks promoting brain cancer … well how are AI search results constructed? How well do they cite their sources? And are the sources they cited also using AI to generate content?

“its gotten much worse in that “AI” is now, on many “search engines”, replacing the first listings which obfuscates entirely where its alleged “answer” came from, and given that AI often “hallucinates”, basically making things up to a degree that the output is either flawed or false, without attribution as to how it arrived at that statement, you’ve essentially destroyed what was “search.” … unlike paid search which at least in theory can be differentiated (assuming the search company is honest about what they’re promoting for money) that is not possible when an alleged “AI” presents the claimed answers because both the direct references and who paid for promotion, if anyone is almost-always missing. This is, from my point of view anyway, extremely bad because if, for example, I want to learn about “total return swaps” who the source of the information might be is rather important — there are people who are absolutely experts (e.g. Janet Tavakoli) and then there are those who are not. What did the “AI” response use and how accurate is its summary? I have no way to know yet the claimed “answer” is presented to me.” - Karl Denninger

The eating of the ecosystem is so thorough Google now has money to invest in Saudi Arabian AI funds.

Periodically ViperChill highlights big media conglomerates which dominate the Google organic search results.

One of the strongest horizontal publishing plays online has been IAC. They’ve grown brands like Expedia, Match.com, Ticketmaster, Lending Tree, Vimeo, and HSN. They always show up in the big publishers dominating Google charts. In 2012 they bought About.com from the New York Times and broke About.com into vertical sites like The Spruce, Very Well, The Balance, TripSavvy, and Lifewire. They have some old sites like Investopedia from their 2013 ValueClick deal. And then they bought out the magazine publisher Meredith, which publishes titles like People, Better Homes and Gardens, Parents, and Travel + Leisure. What does their performance look like? Not particularly good!

DDM reported just 1% year-over-year growth in digital advertising revenue for the quarter. It posted $393.1 million in overall revenue, also up 1% YOY. DDM saw a 3% YOY decline in core user sessions, which caused a dip in programmatic ad revenue. Part of that downturn in user engagement was related to weakening referral traffic from search platforms. For example, DDM is starting to see Google Search’s AI Overviews eat into its traffic.

Google’s early growth was organic through superior technology, then clever marketing via their toolbar, and later a set of forced bundlings on Android combined with payola for default search placements in third party web browsers. A few years ago the UK government did a study which claimed that if Microsoft gave Apple a 100% revshare on Bing they still couldn’t compete with the Google bid for default search placement in Apple Safari.

Microsoft offered over a 100% ad revshare to set Bing as the default search engine and went so far as discussing selling Bing to Apple in 2018 - but Apple stuck with Google’s deal.

In search, if you are not on Google you don’t exist.

As Google grew out various verticals they also created ranking signals which in some cases were parasitical, or in other cases purely anticompetitive. To this day Google is facing billions of dollars in new suits across Europe for their shopping search strategy.

The Obama administration was an extension of Google, so the FTC gave Google a pass in spite of discovering some clearly anticompetitive behavior with real consumer harm. The Wall Street Journal published a series of articles after obtaining half the pages of the FTC research into Google’s conduct:

“Although Google originally sought to demote all comparison shopping websites, after Google raters provided negative feedback to such a widespread demotion, Google implemented the current iteration of its so-called ‘diversity’ algorithm.”

What good is a rating panel if you get to keep re-asking the questions again in a slightly different way until you get the answer you want? And then place a lower quality clone front and center simply because it is associated with the home team?

“Google took unusual steps to “automatically boost the ranking of its own vertical properties above that of competitors,” the report said. “For example, where Google’s algorithms deemed a comparison shopping website relevant to a user’s query, Google automatically returned Google Product Search – above any rival comparison shopping websites. Similarly, when Google’s algorithms deemed local websites, such as Yelp or CitySearch, relevant to a user’s query, Google automatically returned Google Local at the top of the [search page].””

The forced ranking of house properties is even worse when one recalls they were borrowing third party content without permission to populate those verticals.

Now with AI there is a blurry line of borrowing where many things are simply probabilistic. And, technically, Google could claim they sourced content from a third party which stole the original work or was a syndicator of it.

As Google kept eating the pie they repeatedly overrode user privacy to boost their ad income, while using privacy as an excuse to kneecap competing ad networks.

Remember the old FTC settlement over Google’s violation of Safari browser cookies? That is the same Google which planned on deprecating third party cookies in Chrome and was even testing hiding user IP addresses so that other ad networks would be screwed. Better yet, online businesses might need to pay Google a subscription fee of some sort to efficiently filter through the fraud conducted in their web browser.

HTTPS everywhere was about blocking data leakage to other ad networks.

AMP was all about stopping header bidding. It gave preferential SERP placement in exchange for using a Google-only ad stack.

Even as Google was dumping tech costs on publishers, they were taking a huge rake of the ad revenue from the ad serving layer: “Google’s own documents show that Google has siphoned off thirty-five cents of each advertising dollar that flows through Google’s ad tech tools.”

After acquiring DoubleClick to further monopolize the online ad market, Google merged user data for their own ad targeting, while hashing the data to block publishers from matching profiles:

“In 2016, as part of Project Narnia, Google changed that policy, combining all user data into a single user identification that proved invaluable to Google’s efforts to build and maintain its monopoly across the ad tech industry. … After the DoubleClick acquisition, Google “hashed” (i.e., masked) the user identifiers that publishers previously were able to share with other ad technology providers to improve internet user identification and tracking, impeding their ability to identify the best matches between advertisers and publisher inventory in the same way that Google Ads can. Of course, any purported concern about user privacy was purely pretextual; Google was more than happy to exploit its users’ privacy when it furthered its own economic interests.”

“This chat was deep in the spoliation evidence of the cases (it wasn’t purged) to demonstrate the substantive discussions Google senior execs (Sissie) have over chat. My read is she is weighing in on the same issue from a different forum (privacy law in EU).” - Jason Kint (@jason_kint), May 9, 2025

In terms of cost, I really don’t think the O&O impact has been understood too, especially on YouTube. - Googler David Mitby

Did we tee up the real $ price tag of privacy? - Googler Sissie Hsiao

Google continues to spend billions settling privacy-related cases. Settling those suits out of court is better than having full discovery be used to generate a daisy chain of additional lawsuits.

As the Google lawsuits pile up, evidence of how they stacked the deck becomes more clear.

Courtesy of SEO Book.com

Friday, May 16th, 2025

User interaction signals

Create relevancy signals out of user reads, clicks, scrolls, and mouse hovers.

Not how search works

Search does not work by delivering results which match a query that ends at the user. This view of search is incomplete.

How search works

The flow of the engagement metrics from the end user / searcher back to the search engine helps the search engine refine the result set.

Fake document understanding

Google looks at the actions of searchers much more than they look at raw documents. If documents elicit a positive reaction from searchers that is proof the document is good. If a document elicits negative reactions then they presume the document is bad.

Google learns from searchers

The result set is designed not just to serve the user, but to create an interaction set where Google can learn from the user & incorporate logged user data into influencing the rankings for future searches.

Dialog is the source of the magic

Each user interaction gives Google data to refine their ranking algorithms and make search smarter.

Happy users provide informed user interactions

Informed user interactions are part of a virtuous cycle which allows Google to better train their models & understand language patterns, then use that understanding to deliver a more relevant search result set.

Prior user behavior is used as a baseline.

Google is not pushing search personalization anywhere near as hard as they once did (at least not outside of localization) but in the above Google states prior selections are one of Google’s strongest signals for rankings.

Once again rather than understanding documents directly they can consider the users who chose the documents. Users can be mapped based on actions outside of standard demographics so that more similar users are given more weight on their interactions with the result set choices.

Google revenue growth is consistent

Core Google ad revenue grows much more consistently than any other large media business, growing at 20% to 22% year after year for 8 in 9 years with the one outlier year being 30% growth.

Apple is paid by Google to not compete in search.

Apple got around a 50% revshare in the mid 2000’s on through to the iPhone deal renewal.

Manipulating ad auctions

Google artificially inflates ad rank of the runner up in some ad auctions to bleed the auction winner dry. Ad pricing is not based on any sort of honest auction mechanism, but rather has Google looking across at your bids and your reactions to price gouging to keep increasing the ad prices they charge you.

Organics below the fold

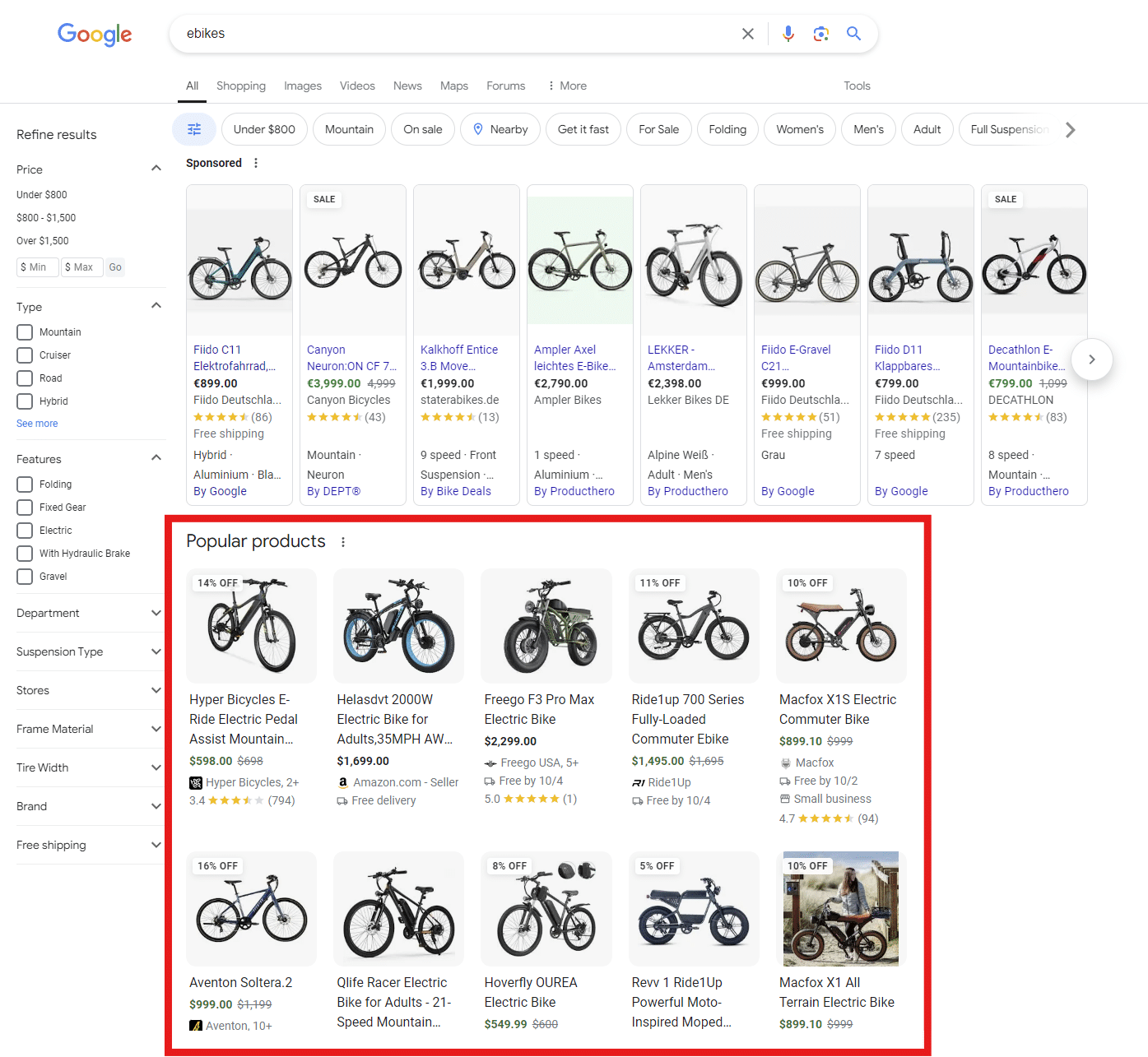

Google not only pushes down the organic result set with 3 or 4 ads above the regular results, but then they can include other selections scraped from across the web in an information-lite format to try to focus attention back upward. Then after users get past a singular organic search result it is time to redirect user attention once again using a “People also ask” box.

Google can further segment user demand via ecommerce website styled filters, though some of the filters offered may be for other websites, in addition to things like size, weight, color, price, and location.

Courtesy of SEO Book.com

Friday, May 16th, 2025

On February 18, 2025 Google’s Hyung-Jin Kim was interviewed about Google’s ranking signals. Below are notes from that interview.

“Hand Crafting” of Signals

Almost every signal, aside from RankBrain and DeepRank (which are LLM-based), is hand-crafted and thus able to be analyzed and adjusted by engineers.

- To develop and use these signals, engineers look at data and then take a sigmoid or other function and figure out the threshold to use. So, the “hand crafting” means that Google takes all those sigmoids and figures out the thresholds.

- In the extreme, hand-crafting means that Google looks at the relevant data and picks the mid-point manually.

- For the majority of signals, Google takes the relevant data (e.g., webpage content and structure, user clicks, and label data from human raters) and then performs a regression.
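As a rough illustration of "take a sigmoid and figure out the threshold", here is a minimal sketch. The function names, the midpoint, the steepness and the threshold are all made-up values for illustration; nothing here comes from the interview or reflects Google's actual parameters.

```python
import math

def sigmoid(x: float, midpoint: float, steepness: float) -> float:
    """Map a raw signal value onto a 0..1 score with an S-shaped curve."""
    return 1.0 / (1.0 + math.exp(-steepness * (x - midpoint)))

# Hypothetical hand-picked parameters: an engineer looks at the data and
# decides that a raw value of 50 is where the score should cross 0.5.
MIDPOINT = 50.0
STEEPNESS = 0.1
THRESHOLD = 0.5  # scores at or above this count as a positive signal

def passes(raw_value: float) -> bool:
    """Apply the hand-picked threshold to the squashed score."""
    return sigmoid(raw_value, MIDPOINT, STEEPNESS) >= THRESHOLD

scores = {raw: round(sigmoid(raw, MIDPOINT, STEEPNESS), 3) for raw in (10, 50, 90)}
```

Because the midpoint and threshold are explicit constants rather than learned weights, an engineer can see exactly which number to change when an edge case misbehaves.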

Navboost. This was HJ’s second signal project at Google. HJ has many patents related to Navboost and he spent many years developing it.

ABC signals. These are the three fundamental signals. All three were developed by engineers. They are raw, …

- Anchors (A) - a source page pointing to a target page (links). …

- Body (B) - terms in the document …

- Clicks (C) - historically, how long a user stayed at a particular linked page before bouncing back to the SERP. …

ABC signals are the key components of topicality (or a base score), which is Google’s determination of how the document is relevant to a query.

- T* (Topicality) effectively combines (at least) these three ranking signals in a relatively hand-crafted way. … Google uses to judge the relevance of the document based on the query term.

- It took a significant effort to move from topicality (which is at its core a standard “old style” information retrieval (”IR”) metric) … signal. It was in a constant state of development from its origin until about 5 years ago. Now there is less change.

- Ranking development (especially topicality) involves solving many complex mathematical problems.

- For topicality, there might be a team of … engineers working continuously on these hard problems within a given project.

The reason why the vast majority of signals are hand-crafted is that if anything breaks Google knows what to fix. Google wants their signals to be fully transparent so they can trouble-shoot them and improve upon them.

- Microsoft builds very complex systems using ML techniques to optimize functions. So it’s hard to fix things - e.g., to know where to go and how to fix the function. And deep learning has made that even worse.

- This is a big advantage of Google over Bing and others. Google faced many challenges and was able to respond.

- Google can modify how a signal responds to edge cases, for example in response to various media/public attention challenges …

- Finding the correct edges for these adjustments is difficult, but would be easy to reverse engineer and copy from looking at the data.

Ranking Signals “Curves”

Google engineers plot ranking signal curves.

The curve fitting is happening at every single level of signals.

If Google is forced to give information on clicks, URLs, and the query, it would be easy for competitors to figure out the high-level buckets that compose the final IR score. High-level buckets are:

- ABC — topicality

- Topicality is connected to a given query

- Navboost

- Quality

- Generally static across multiple queries and not connected to a specific query.

- However, in some cases Quality signal incorporates information from the query in addition to the static signal. For example, a site may have high quality but general information so a query interpreted as seeking very narrow/technical information may be used to direct to a quality site that is more technical.

Q* (page quality (i.e., the notion of trustworthiness)) is incredibly important. If competitors see the logs, then they have a notion of “authority” for a given site.

Quality score is hugely important even today. Page quality is something people complain about the most.

- HJ started the page quality team ~ 17 years ago.

- That was around the time when the issue with content farms appeared.

- Content farms paid students 50 cents per article and they wrote 1000s of articles on each topic. Google had a huge problem with that. That’s why Google started the team to figure out the authoritative source.

- Nowadays, people still complain about the quality and AI makes it worse.

Q* is about … This was and continues to be a lot of work but could be easily reverse engineered because Q is largely static and largely related to the site rather than the query.

Other Signals

- eDeepRank. eDeepRank is an LLM system that uses BERT, transformers. Essentially, eDeepRank tries to take LLM-based signals and decompose them into components to make them more transparent. HJ doesn’t have much knowledge on the details of eDeepRank.

- PageRank. This is a single signal relating to distance from a known good source, and it is used as an input to the Quality score.

- … (popularity) signal that uses Chrome data.
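One way to picture "distance from a known good source" is a breadth-first search over a link graph that counts the fewest link hops from a trusted seed page. This is only a sketch of that idea under assumed inputs; the graph, the domain names and the function name are hypothetical, and it is not a description of how Google computes the signal.

```python
from collections import deque

def distance_from_seeds(links: dict[str, list[str]], seeds: set[str]) -> dict[str, int]:
    """Breadth-first search: fewest link hops from any seed page to each page."""
    distance = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in distance:  # first visit is the shortest path
                distance[target] = distance[page] + 1
                queue.append(target)
    return distance

# Hypothetical link graph: page -> pages it links to.
links = {
    "trusted.example": ["a.example", "b.example"],
    "a.example": ["c.example"],
    "b.example": ["c.example"],
    "c.example": ["d.example"],
    "spam.example": ["d.example"],
}
hops = distance_from_seeds(links, {"trusted.example"})
```

Pages that no trusted page links toward (here `spam.example`) never receive a distance at all.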

Search Index

- HJ’s definition is that search index is composed of the actual content that is crawled - titles and bodies and nothing else, i.e., the inverted index.

- There are also other separate specialized inverted indexes for other things, such as feeds from Twitter, Macy’s etc. They are stored separately from the index for the organic results. When HJ says index, he means only for the 10 blue links, but as noted below, some signals are stored for convenience within the search index.

- Query-based signals are not stored, but computed at the time of query.

- Q* - largely static but in certain instances affected by the query and has to be computed online (see above)

- Query-based signals are often stored in separate tables off to the side of the index and looked up separately, but for convenience Google stores some signals in the search index.

- This way of storing the signals allowed Google to …
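For readers unfamiliar with the term, an inverted index maps each term to the documents that contain it. The toy version below is a minimal sketch with hypothetical page IDs and text; a production index also stores positions, handles tokenization properly and is sharded across machines.

```python
from collections import defaultdict

def build_inverted_index(docs: dict[str, str]) -> dict[str, set[str]]:
    """Map each lowercase term to the set of document IDs containing it."""
    index: dict[str, set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index

def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return documents containing every query term (boolean AND)."""
    postings = [index.get(term, set()) for term in query.lower().split()]
    return set.intersection(*postings) if postings else set()

# Hypothetical crawled content (title and body text only).
docs = {
    "page1": "cheap flights to paris",
    "page2": "paris hotel deals",
    "page3": "cheap hotel deals",
}
index = build_inverted_index(docs)
```

Query-time signals are then computed over whatever `search` returns rather than being stored in the index itself.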

User-Side Data

By User Side Data, Google’s search engineers mean user interaction data, not the content/data that was created by users. E.g., links between pages that are created by people are not User Side data.

Search Features

- There are different search features - 10 blue links as well as other verticals (knowledge panels, etc). They all have their own ranking.

- Tangram (fka Tetris). HJ started the project to create Tangram to apply the basic principle of search to all of the features.

- Tangram/Tetris is another algorithm that was difficult to figure out how to do well but would be easy to reverse engineer if Google were required to disclose its click/query data. By observing the log data, it is easy to reverse engineer and to determine when to show the features and when to not.

- Knowledge Graph. Separate team (not HJ’s) was involved in its development.

- Knowledge Graph is used beyond being shown on the SERP panel.

- Example — “porky pig” feature. If people query about the relation of a famous person, Knowledge Graph tells traditional search the name of the relation and the famous person, to improve search results - Barack Obama’s wife’s height query example.

- Self-help suicide box example. Incredibly important to figure it out right, and tons of work went into it, figuring out the curves, threshold, etc. With the log data, this could be easily figured out and reverse engineered, without having to do any of the work that Google did.

Reverse Engineering of Signals

There was a leak of Google documents which named certain components of Google’s ranking system, but the documents don’t go into specifics of the curves and thresholds.

The documents alone do not give you enough details to figure it out, but the data likely does.

Courtesy of SEO Book.com

Monday, October 7th, 2024

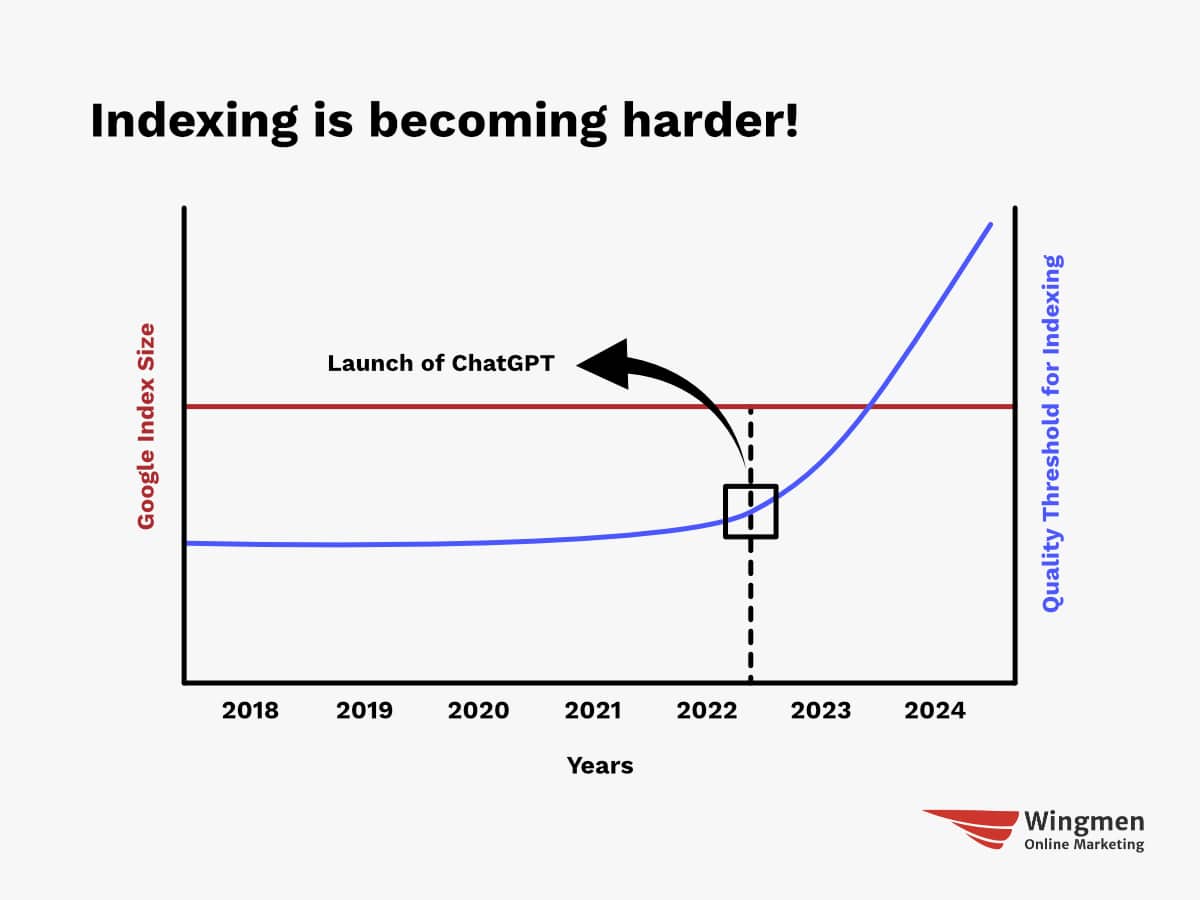

Many businesses are finding their digital marketing efforts falling flat despite producing content regularly. The culprit?

An outdated approach that neglects the growing importance of visual content in SEO.

With tech giants like Google and Apple prioritizing AI-powered visual search, companies that don’t adapt risk losing visibility and relevance.

Many enterprises lack the centralized strategies and governance needed to effectively manage visual assets across departments.

This article outlines a seven-step process to futureproof your visual content and SEO for 2025.

By implementing these strategies, you can leverage the latest trends, optimize for AI-powered search and significantly boost your online presence and engagement.

How are major giants pivoting features to embrace visual search?

Google is now integrating video and image content, primarily from YouTube, websites and third-party sites, into the Top Insights section of product and AI-generated search results pages.

This change provides users a richer, more engaging experience by offering a diverse range of information beyond text-based results and reviews.

It also allows brands to leverage image and video content to boost visibility and engagement.

Similarly, Apple has released Visual Intelligence with Vision 3, offering new features such as image segmentation and object detection.

These new capabilities allow developers to build more sophisticated and powerful applications that utilize visual information.

Why are visual content and SEO challenging for enterprises and SMEs?

The biggest challenges in visual content and SEO include a lack of centralization, inconsistent policies, governance and knowledge across departments.

Search is multimodal, meaning content creation should focus on customer intent, considering images, videos, PDFs and all other touchpoints and channels.

It is evolving beyond text to include diverse visual content. This shift requires a customer-centric approach that prioritizes intent and experience. Many companies struggle with implementing consistent best practices for visual assets across departments.

With the rise of AI-powered search, it becomes even more critical to centralize all visual assets, ensure they are optimized and consistently distribute them across all channels.

Dig deeper: Visual optimization must-haves for AI-powered search

Top trends in visual content and SEO

Featured images and interactive short-form videos

- These elements are critical for enhancing user experience, as consumers are seeking app-like interactions.

- Platforms such as TikTok and Instagram Reels have popularized short, engaging videos, making them essential for reaching audiences.

- Overall, video content helps drive engagement, improve conversions and saturate SERPs.

Personalization

- Tailoring experiences based on audience, journey, demographics, location and intent is vital for brands to succeed.

Mobile dominance

- Since most images and videos are consumed on mobile devices, it is crucial to ensure that your UI, UX and assets are optimized for mobile.

In-video interaction

- Brands are exploring interactive video formats that allow viewers to choose their own path or engage in features like a 360-degree view and zooming.

- Incorporating polls and quizzes can create a more immersive and engaging experience.


7-step process to futureproof your visual content and SEO in 2025

Well-chosen featured images or videos significantly boost a website’s click-through rate (CTR) and encourage user engagement.

It is important to follow best practices such as image relevance to content, high quality, appropriate file size and format and mobile optimization.

1. Curate

Compile a list of all channels, vendors, departments and touchpoints where visual content is created and consumed.

2. Centralize

Establish policies to organize your content. Ensure all images and videos reside in a digital asset management (DAM) system and are accessible via a content delivery network (CDN).

All channels should access images directly from the DAM, avoiding multiple copies of the same image or video sitting in file folders.

3. Optimize

Use high-quality, relevant images with appropriate file formats, image tags, sitemaps and structured data to enhance discovery and visibility.

Leverage Google NLP to check for content marked as inappropriate and prioritize images relevant to the search query.

Ensure visual content doesn’t affect site speed by using next-gen image formats and implementing lazy loading.
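As a small illustration of the lazy-loading advice, the sketch below builds an image tag that uses the standard HTML `loading="lazy"` attribute and a WebP source. The helper name, file path and dimensions are placeholders, not part of any particular CMS or DAM.

```python
from html import escape

def lazy_img(src: str, alt: str, width: int, height: int) -> str:
    """Build an <img> tag that defers loading until it nears the viewport."""
    return (
        f'<img src="{escape(src, quote=True)}" alt="{escape(alt, quote=True)}" '
        f'width="{width}" height="{height}" loading="lazy">'
    )

# Hypothetical asset: a WebP image with explicit dimensions to avoid layout shift.
tag = lazy_img("/images/lobby.webp", "Hotel lobby at night", 1200, 800)
```

Images that appear above the fold are usually better left eager, since deferring them can delay the largest visible element.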

4. Distribute

Ensure content is consumed from one central location. Use cloud infrastructure and a CDN to host and distribute your assets efficiently.

5. Application, experience and infrastructure

Leverage entity search to gain a competitive edge by implementing a clear visual hierarchy and enhancing content scannability.

Well-structured, topical pages with relevant images and videos perform better. Develop snackable videos for your unique selling proposition (USP) and customer reviews.

Create content suitable for visual snippets, such as how-to guides and recipes. The goal is to optimize for Google’s multisearch feature, which combines image, video and text searches.

Infrastructure is one of the biggest gaps most businesses face.

Most DAM systems are designed only to store images and lack the capability to optimize them easily.

Having a DAM that provides real-time scoring of your asset quality and connects seamlessly with your websites and other channels is essential.

Dig deeper: Future-proofing digital experience in AI-first semantic search

6. Governance and checklist

Establish robust governance and checklists around quality, consistency and usage across all departments.

Continuously test which images are performing well in SERPs and conversions to refine your checklist.

7. Metrics and KPIs

Develop metrics to track SERP and rich snippet saturation, presence in AI overviews, overall click-through rates (CTR), clicks from visual search, engagement rates and page bounce rates.
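Two of these metrics reduce to simple arithmetic: CTR is clicks divided by impressions, and a period-over-period lift is the change divided by the previous value. The sketch below uses invented numbers purely to show the calculation.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; 0.0 when there were no impressions."""
    return clicks / impressions if impressions else 0.0

def percent_lift(current: float, previous: float) -> float:
    """Percentage change from the previous period to the current one."""
    if previous == 0:
        raise ValueError("previous period must be non-zero")
    return (current - previous) * 100.0 / previous

# Illustrative figures only, not client data.
image_ctr = ctr(clicks=120, impressions=4000)
appearance_lift = percent_lift(current=2040, previous=1000)
```

With these inputs the CTR works out to 3% and the lift to 104%, meaning appearances slightly more than doubled.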

As Google and other search engines incorporate conversational AI, short videos, images, and social media posts into search results – shifting away from traditional website listings – these strategies will help you effectively use visual content in 2025.

Success stories

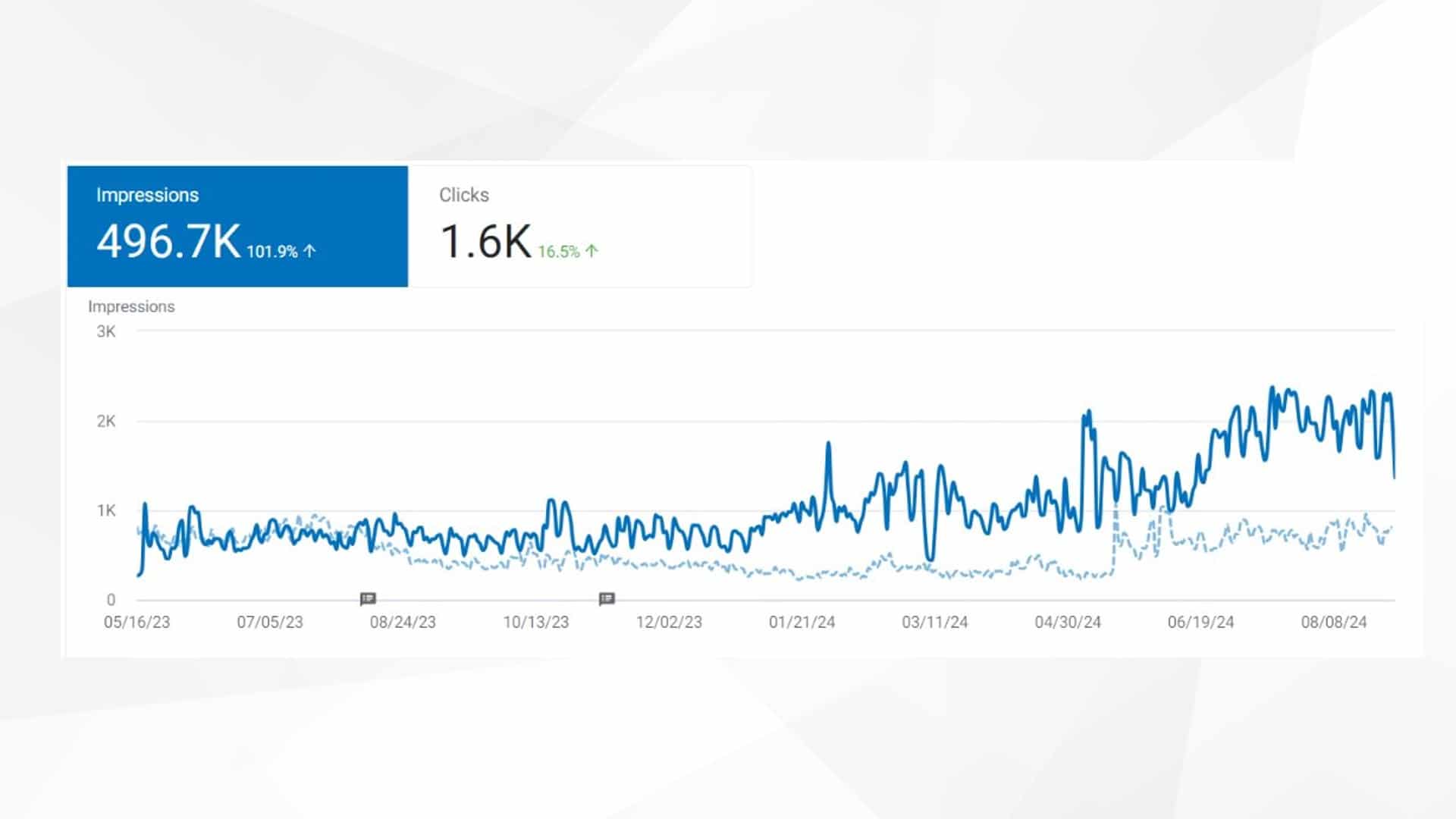

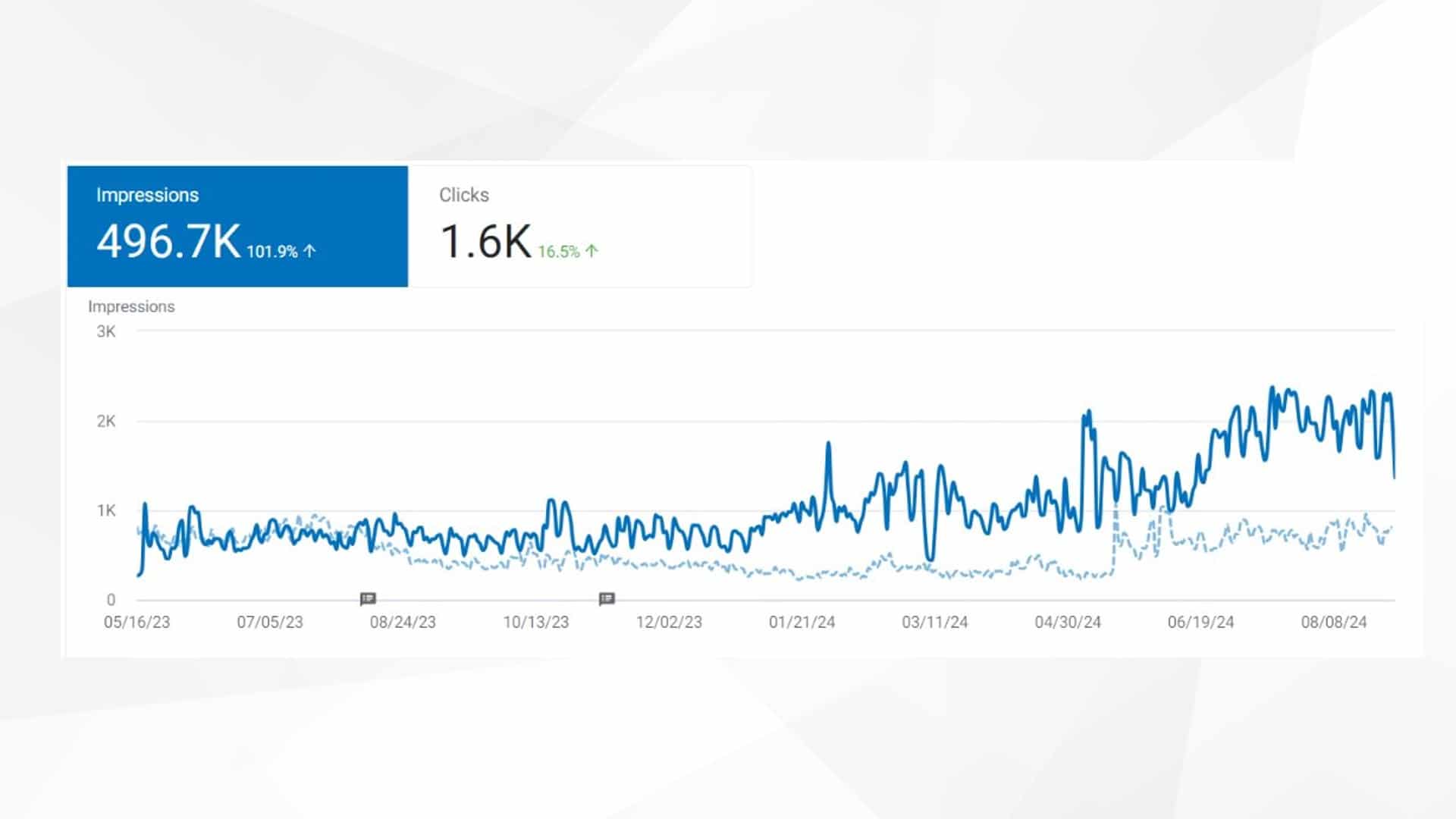

Using the seven-step process as mentioned above, our clients were able to drive phenomenal success for their images on search.

A popular hotel in Georgetown saw a 104% increase in the number of times images appeared in search results versus the previous period.

Search results appearances lift:

A Massachusetts Resort and Spa saw a staggering lift in its visual search performance:

- + 871% increase in the number of times images appeared in search results versus the previous period.

- + 101% increase in overall image impressions versus the previous period.

Search results appearances lift:

Impressions lift:

Dominate visual search with well-optimized images and videos

Video and images are powerful tools for enhancing SEO and boosting online visibility. As LLMs become increasingly skilled at understanding and generating both text and visuals, you must prepare for more integrated visual-textual content creation and optimization strategies.

By prioritizing these areas, you can stay ahead of the curve in visual content and SEO for 2025. Embracing the latest technologies and features released by major tech companies will enable you to enhance your online presence and improve searchability.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Monday, October 7th, 2024

Next month, thousands of seasoned search marketers will gather online to learn next-level SEO, PPC, and generative AI tactics, get in-depth answers to specific questions, and connect with like-minded community members and subject experts.

Are you ready to join them?

Your free SMX Next pass is just a few clicks away, and we can’t wait to host you online, November 13-14. The agenda is now live and ready for you to explore!

It’s all hand-crafted by the Search Engine Land programming committee, including Danny Goodwin, Barry Schwartz, Anu Adegbola, Brad Geddes, Eric Enge, and Greg Finn. Here’s a look at everything you get:

- Two keynote conversations about 2025 SEO and PPC trends, plus live Q&A.

- Actionable sessions on GEO, GenAI, N-E-E-A-T-T, and other critical search topics.

- Coffee Talk meetups with like-minded marketers and subject matter experts.

- Live Q&A with speakers including Amy Hebdon, Fred Vallaeys, Melissa Mackey, and more.

- Instant on-demand access for 180 days so you can watch and rewatch at your own pace.

- A personalized certificate of attendance to showcase your knowledge of the latest in search.

For nearly 20 years, more than 200,000 search marketers from around the world have attended SMX to learn game-changing tactics and make career-defining connections.

Don’t miss your final opportunity in 2024 to join them online for the only training event programmed by Search Engine Land, the industry publication you trust to stay competitive. Grab your free pass now!

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Monday, October 7th, 2024

Many search engines rely on structured data to enhance user experiences – and this trend will likely intensify in 2025.

For this reason, structured data is no longer a “nice-to-have” but an essential part of any SEO strategy.

Here’s what you need to know about structured data, including why it matters, important trends, key schema types, advanced techniques and more.

What is structured data?

Structured data is a standardized format for organizing and labeling page content that helps search engines understand it more effectively.

Google uses structured data to create enhanced listings, rich results and various features in search engine results pages (SERPs).

Being included in these features can boost your website’s visibility and organic reach, especially in entity-based searches.

Vocabulary

The most commonly used vocabulary for structured data is Schema.org, an open-source framework that provides an extensive library of types and properties.

Schema.org includes hundreds of predefined types, such as Product, Event or Person and properties like name, price and description.

Format

The preferred format for implementing structured data is JSON-LD (JavaScript Object Notation for Linked Data), which is endorsed by Google and other search engines.

JSON-LD encapsulates structured data within a <script> tag, keeping it separate from the core HTML.

This approach makes it more flexible, easier to implement and less intrusive. JSON-LD is particularly useful for dynamic content on larger websites.
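A minimal sketch of what that looks like in practice: the markup is an ordinary JSON object with `@context` and `@type` keys, wrapped in a `<script type="application/ld+json">` tag. The organization details and the helper name are placeholders.

```python
import json

def to_jsonld_script(data: dict) -> str:
    """Serialize a dict as JSON-LD and wrap it in a script tag."""
    # Escape "</" so a stray "</script>" inside a value cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

# Placeholder entity for illustration.
organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
}
snippet = to_jsonld_script(organization)
```

Because the block is generated from data rather than woven into the visible HTML, a template can emit it for thousands of pages without touching the page layout.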

Validation

Correct implementation of structured data is essential to be eligible for rich results.

To verify that structured data is properly implemented and can be processed by search engines, use tools like Google’s Rich Results Test or the Schema Markup Validator at validator.schema.org.

These tools check for errors or omissions in the schema, ensuring the markup is valid and effective.

Dig deeper: What is technical SEO?

Why structured data matters more than ever

Structured data enables search engines to interpret website content more deeply, enhancing how pages are indexed and presented in search results.

It allows brands to reach audiences in less competitive areas of search, such as voice and image search, allowing sites to drive traffic and engagement outside of traditional SEO.

Zero-click search and brand authority

More and more SERP features, like knowledge panels and featured snippets, depend on structured data to provide answers directly in search results. This means users can get information without clicking through the publisher’s site.

The rise of so-called zero-click searches has made structured data even more indispensable in SEO.

While these features offer limited opportunity to drive visits, they can boost organic impressions, enhance brand recognition and maintain user interaction with the brand.

Being regularly shown in the rich results reinforces top-of-mind awareness (TOMA) – and in the E-E-A-T world, a trusted and authoritative brand is crucial for success in SEO.

Key schema types to use in 2025

While new schema types emerge regularly and should be tested where relevant, several “evergreen” types have proven their effectiveness over time.

Ecommerce

Product schema (often used together with Offer and Review schema) is essential for ecommerce, providing details about products, such as price, availability and reviews.

This schema powers rich snippets like product carousels and review stars in SERPs, significantly improving click-through rates.

Merchant listings (a combination of Product and Offer schema) can be a great way for new ecommerce sites to gain early visibility and traffic through Google Shopping.

AggregateOffer schema is ideal for marketplace websites, as it enables multiple vendors’ offers to be represented for the same product, allowing users to compare prices and options.
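The sketch below shows roughly how these types fit together: a Product with a single Offer and an AggregateRating, and a marketplace variant that swaps in an AggregateOffer. Product names, prices and counts are invented; check Google's current documentation for which properties are required for a given rich result.

```python
import json

# Single-seller product: Product + Offer + AggregateRating.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Running Shoe",
    "description": "Lightweight road running shoe.",
    "offers": {
        "@type": "Offer",
        "price": "89.99",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
    },
    "aggregateRating": {
        "@type": "AggregateRating",
        "ratingValue": "4.6",
        "reviewCount": "128",
    },
}

# Marketplace variant: several vendors summarized as one AggregateOffer.
marketplace_product = {
    **product,
    "offers": {
        "@type": "AggregateOffer",
        "lowPrice": "79.00",
        "highPrice": "99.00",
        "offerCount": "5",
        "priceCurrency": "USD",
    },
}

product_jsonld = json.dumps(product, indent=2)
```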

Informational

FAQ schema allows websites to present common questions and answers directly in the search results.

It is highly effective for improving user engagement by providing concise answers for conversational queries and can appear in rich results and voice search.
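FAQ markup is a `FAQPage` whose `mainEntity` is a list of `Question` items, each with an `acceptedAnswer`. A minimal sketch that builds it from question and answer pairs follows; the helper name and the sample questions are made up.

```python
import json

def faq_page(pairs: list[tuple[str, str]]) -> dict:
    """Build FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Sample content for illustration only.
faq = faq_page([
    ("Do you offer free shipping?", "Yes, on orders over $50."),
    ("What is your return window?", "30 days from delivery."),
])
faq_jsonld = json.dumps(faq, indent=2)
```

The questions and answers in the markup should match the text visible on the page.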

Q&A schema is designed for pages where users can submit questions and multiple answers are provided and is often found in forums or community-based platforms.

It powers a Q&A carousel that features both questions and answers directly in the SERP, increasing visibility and CTR for long-tail queries and conversational searches.

Article and WebPage schema can boost visibility in Google News, Discover and top stories carousels and get exposure in voice search.

Events

Event schema is used to mark up details about virtual or physical events, such as concerts, conferences, webinars or local meetups.

It provides specifics like the date, location, start and end times, ticket availability, and performers. This information can be included in Google’s event listings, enhancing visibility in local or event-related searches.

Newly supported properties for BroadcastEvent and ScreeningEvent enhance how live events and screenings are presented in search.
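Here is a sketch of Event markup covering the details mentioned above (dates, location, performer and ticket availability). Every value is a placeholder.

```python
import json

event = {
    "@context": "https://schema.org",
    "@type": "Event",
    "name": "Example Search Marketing Meetup",
    "startDate": "2025-03-12T18:00:00-05:00",  # ISO 8601 with UTC offset
    "endDate": "2025-03-12T21:00:00-05:00",
    "eventAttendanceMode": "https://schema.org/OfflineEventAttendanceMode",
    "location": {
        "@type": "Place",
        "name": "Example Hall",
        "address": {
            "@type": "PostalAddress",
            "addressLocality": "Austin",
            "addressRegion": "TX",
            "addressCountry": "US",
        },
    },
    "performer": {"@type": "Person", "name": "Jane Example"},
    "offers": {
        "@type": "Offer",
        "price": "25.00",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
        "url": "https://www.example.com/tickets",
    },
}
event_jsonld = json.dumps(event, indent=2)
```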

Dig deeper: How to deploy advanced schema at scale


How to use structured data in 2025

While the applications below are not entirely new, their importance is set to increase through 2025 as user behaviors continue to shift.

Entity-based search

Entity-based search is where search engines prioritize entities – people, places, things and concepts – over individual keywords.

Instead of focusing on isolated words and relationships between them, search engines now better understand connections between entities and how they fit into a broader context.

Structured data like Person, Organization or Place schema can clearly define relevant entities, enhancing their visibility in Knowledge Graphs and entity-based results.

Likewise, SameAs schema can be used to help search engines understand that an entity mentioned on one page is the same as an entity mentioned elsewhere.

By marking up an entity with SameAs and linking it to trusted external sources, such as Wikidata, Wikipedia or authoritative social media profiles, it’s possible to reinforce the association and improve its recognition and reach in Knowledge Graphs and rich search results.
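In Schema.org terms `sameAs` is a property that takes one or more URLs. A minimal sketch on an Organization follows; the Wikidata ID and all other URLs are placeholders, not real identifiers.

```python
import json

organization = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://www.example.com",
    # Placeholder profile URLs that identify the same entity elsewhere.
    "sameAs": [
        "https://www.wikidata.org/wiki/Q00000000",
        "https://en.wikipedia.org/wiki/Example_Co",
        "https://www.linkedin.com/company/example-co",
    ],
}
organization_jsonld = json.dumps(organization, indent=2)
```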

Dig deeper: How to use entities in schema to improve Google’s understanding of your content

Speakable

Speakable schema (in beta at Google) is an important tool for optimizing content for voice search results.

It helps search engines identify which sections of a webpage are best suited for audio playback using text-to-speech (TTS), including on Google Assistant-enabled devices.

The goal is to provide concise, clear answers to users’ questions in spoken format. This is especially useful for news websites and publishers, as they can mark up critical content to be featured in voice responses.
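Here is a hedged sketch of what speakable markup might look like, built as a Python dictionary. The page name, URL and CSS selectors (`.headline`, `.summary`) are assumptions for illustration. The selectors would need to match elements that actually exist in your page template.

```python
import json

# Hypothetical news page; the selectors name the sections suited to being read aloud.
page = {
    "@context": "https://schema.org",
    "@type": "WebPage",
    "name": "Example Story Headline",
    "url": "https://example.com/news/example-story",
    "speakable": {
        "@type": "SpeakableSpecification",
        # Assumed class names; an xpath list could be used instead.
        "cssSelector": [".headline", ".summary"],
    },
}

print(json.dumps(page, indent=2))
```

Keeping the marked sections short fits the stated goal of concise spoken answers.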

Multimodal search

Multimodal search allows users to query search engines using various forms of input, such as text, images and voice, sometimes combined in a single query.

This is largely driven by AI models designed to process multiple data types simultaneously.

Structured data like VideoObject and ImageObject ensure that multimedia content is properly understood, indexed and ranked.
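For example, VideoObject markup could be assembled along these lines. The video details are placeholders, and `iso8601_duration` is a hypothetical helper written for this sketch. It converts a length in seconds to the ISO 8601 duration format that schema.org's `duration` property expects.

```python
import json


def iso8601_duration(total_seconds: int) -> str:
    """Convert a non-negative number of seconds to an ISO 8601 duration."""
    minutes, seconds = divmod(total_seconds, 60)
    hours, minutes = divmod(minutes, 60)
    result = "PT"
    if hours:
        result += f"{hours}H"
    if minutes:
        result += f"{minutes}M"
    # Always emit at least one component, so zero becomes "PT0S".
    if seconds or result == "PT":
        result += f"{seconds}S"
    return result


# Hypothetical video; every URL and date is a placeholder.
video = {
    "@context": "https://schema.org",
    "@type": "VideoObject",
    "name": "How to Add Structured Data",
    "description": "A short walkthrough of adding JSON-LD to a page.",
    "thumbnailUrl": "https://example.com/thumbs/structured-data.jpg",
    "uploadDate": "2025-01-15T08:00:00+00:00",
    "duration": iso8601_duration(150),
    "contentUrl": "https://example.com/videos/structured-data.mp4",
}

print(json.dumps(video, indent=2))
```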

Schema nesting

Schema nesting allows for the representation of more complex relationships within structured data.

By nesting one schema type within another – such as a Product schema within an Offer schema, further nested within a LocalBusiness schema – it’s possible to communicate layered information about product availability, pricing and location.

This enables search engines to understand not just individual data points but how they are connected, leading to more contextually rich search results, like specific local availability and offers tied to individual businesses.
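The layering described above can be sketched as follows, assuming schema.org's `makesOffer` and `itemOffered` properties as the links between the three types. The business, product and price are invented, and `nested_types` is a hypothetical helper that simply lists each typed object with its nesting depth.

```python
import json

# Hypothetical shop: LocalBusiness -> Offer -> Product, all values are placeholders.
local_business = {
    "@context": "https://schema.org",
    "@type": "LocalBusiness",
    "name": "Example Bike Shop",
    "address": {
        "@type": "PostalAddress",
        "addressLocality": "Portland",
        "addressRegion": "OR",
    },
    "makesOffer": {
        "@type": "Offer",
        "price": "499.00",
        "priceCurrency": "USD",
        "availability": "https://schema.org/InStock",
        "itemOffered": {
            "@type": "Product",
            "name": "Example Commuter Bike",
            "sku": "EX-BIKE-001",
        },
    },
}


def nested_types(node, depth=0):
    """Yield (depth, type name) for every typed object in the markup tree."""
    if isinstance(node, dict):
        if "@type" in node:
            yield depth, node["@type"]
            depth += 1
        for value in node.values():
            yield from nested_types(value, depth)
    elif isinstance(node, list):
        for item in node:
            yield from nested_types(item, depth)


for depth, type_name in nested_types(local_business):
    print("  " * depth + type_name)

print(json.dumps(local_business, indent=2))
```

Listing the types this way is a quick check that the price and availability sit on the Offer and that the Offer hangs off the right business.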

Another example might be a Recipe schema nested within a HowTo schema, further nested within a Person schema.

This communicates that a specific person (author or chef) created the recipe, which in turn contains step-by-step instructions on how to prepare the dish.

Person schema would include the chef’s name, bio and social profiles. HowTo schema would describe the cooking process, including the steps and required materials. Recipe schema provides details like the list of ingredients, preparation time and nutritional information.

This setup would enable search engines to display rich snippets that include the recipe’s creator, ingredients and cooking instructions in a clear and organized manner.
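One way this might be expressed in practice is sketched below. Note the assumption: in the schema.org vocabulary Recipe is itself a subtype of HowTo, so this sketch places Recipe at the top level, attaches the Person through `author`, and carries the steps as HowToStep items in `recipeInstructions`. That is a different arrangement from the nesting order described above. The dish, chef and URLs are placeholders.

```python
import json

# Hypothetical recipe; all names, times and URLs are placeholders.
recipe = {
    "@context": "https://schema.org",
    "@type": "Recipe",
    "name": "Example Tomato Soup",
    # The creator: name, bio and social profiles live on the nested Person.
    "author": {
        "@type": "Person",
        "name": "Chef Example",
        "description": "Home cook and food writer.",
        "sameAs": ["https://www.instagram.com/chef.example"],
    },
    "prepTime": "PT15M",
    "cookTime": "PT30M",
    "recipeIngredient": ["1 kg tomatoes", "1 onion", "2 cloves garlic"],
    "nutrition": {"@type": "NutritionInformation", "calories": "180 calories"},
    # The cooking process: one HowToStep per instruction.
    "recipeInstructions": [
        {"@type": "HowToStep", "text": "Roast the tomatoes, onion and garlic."},
        {"@type": "HowToStep", "text": "Blend until smooth."},
        {"@type": "HowToStep", "text": "Simmer for 10 minutes and season."},
    ],
}

print(json.dumps(recipe, indent=2))
```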

While the primary benefits of schema nesting include improved contextual understanding and enhanced rich results, nesting also adds flexibility for meeting different search intents, allowing search engines to prioritize information based on the query.

Maximize your search visibility with structured data

Structured data is already a critical driver of SEO success for many websites, both large and small.

With hundreds of schema types, dozens of Google SERP features and increasing applications in AI and voice search, its importance will only continue to grow in 2025.

As Google and other search platforms continue to change, carefully and creatively leveraged structured data can provide a significant competitive edge, allowing sites to gain visibility in existing features and prepare for future opportunities.

Dig deeper: How schema markup establishes trust and boosts information gain

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Monday, October 7th, 2024

As artificial intelligence (AI) becomes increasingly integrated into content creation, it’s easy to feel like the human element of writing might be overshadowed.

While AI offers powerful tools that enhance productivity, streamline workflows and even generate content, it’s essential to retain your personal touch to create engaging and authentic material.

This article explores how you can balance AI with your creativity, ensuring your unique voice shines through.

1. Understand AI’s role in content creation

AI tools can help generate ideas, draft content, edit and optimize for search, making content creation faster and more efficient.

However, AI lacks the nuanced understanding of human emotions, context and cultural insights that come naturally to humans.

Recognizing AI’s limitations is the first step in using it as a supportive tool rather than a replacement for human creativity.

Use AI for repetitive tasks, not original thought

AI excels at handling repetitive tasks, but it falls short when it comes to the subtleties of original thought, humor and storytelling.

By delegating routine tasks to AI, you can free up valuable time to focus on the creative elements that rely on human intuition and personal experience.

- Automate research and data analysis: Use AI to gather information, analyze trends or compile statistics, allowing you to spend more time crafting a compelling narrative.

- Draft assistance: Let AI provide you with a starting point or outline for your content, but take the lead in shaping it into something uniquely yours.

Dig deeper: AI content creation: A beginner’s guide

2. Use AI as a creative partner, not your replacement

Think of AI as a co-creator. It’s there to assist, suggest and optimize – not to replace your unique voice.

AI can provide a structural backbone or help refine your content, but your ideas, insights and personal experiences are what will set your work apart.

Refine AI outputs with human insight

AI-generated content often needs a human touch to make it relatable and engaging.

Review and edit AI suggestions to align them with your voice, adding personal anecdotes, humor and insights that reflect your expertise and personality.

- Inject personal experiences: Add stories, examples or perspectives that only you can provide. This personal touch creates a connection with your audience that AI cannot replicate.

- Adjust for tone and style: AI can mimic various tones but often produces content that lacks warmth or emotional depth. Edit to include the subtleties of language that resonate with your readers.

Dig deeper: How to make your AI-generated content sound more human

3. Customize AI to match your voice

Many AI tools offer customization features that allow you to adjust settings to match your preferred tone, style and language. This ensures that AI outputs align more closely with your established brand voice.

Define your voice

To ensure consistency, take time to define your writing style. Are you formal, conversational, witty or authoritative?

Establish guidelines that reflect your voice and regularly tweak AI settings to match these preferences.

- Set clear parameters: Provide the AI with detailed prompts that include your desired tone, target audience and any specific stylistic nuances. The more context you provide, the better the AI can tailor its outputs to your needs.

- Iterate and refine: Use the feedback loop to your advantage. If the AI-generated content feels off, refine the prompts and give feedback to nudge the tool closer to your style.

Dig deeper: 3 ways to add a human touch to AI-generated content

4. Provide clear prompts and feedback to AI

AI relies heavily on the quality of the instructions it receives.

Vague prompts can lead to generic content, while detailed, contextual prompts can produce outputs that better reflect your intentions.

Give context-rich prompts

Instead of generic commands, give AI tools specific guidelines.

Don’t just ask AI to “Write an article introduction.”

Try “Write an engaging introduction for a blog post about sustainable fashion, targeted at eco-conscious millennials, in a friendly and approachable tone.”

- Focus on audience needs: When directing AI, always keep your audience in mind. Prompt AI to address their pain points, preferences and language style to create content that speaks directly to them.

- Refine outputs with detailed feedback: If AI’s first attempt isn’t quite right, refine it by tweaking prompts or adding more detailed instructions. This iterative process helps align AI-generated content with your vision.
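To make the difference between a vague and a context-rich prompt concrete, here is a small sketch that assembles a prompt from explicit fields. The `PromptBrief` class and its field names are hypothetical, invented for this illustration. It only builds a string and does not call any AI tool.

```python
from dataclasses import dataclass


@dataclass
class PromptBrief:
    """Hypothetical container for the context a prompt should carry."""
    task: str
    topic: str
    audience: str
    tone: str

    def render(self) -> str:
        """Combine the fields into a single context-rich instruction."""
        return (
            f"Write {self.task} for a blog post about {self.topic}, "
            f"targeted at {self.audience}, in a {self.tone} tone."
        )


brief = PromptBrief(
    task="an engaging introduction",
    topic="sustainable fashion",
    audience="eco-conscious millennials",
    tone="friendly and approachable",
)

print(brief.render())
```

Keeping the fields separate makes iteration easier: if a draft feels off, you can change only the tone or the audience and regenerate.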

Dig deeper: Advanced AI prompt engineering strategies for SEO

5. Enhance emotional resonance with AI content

AI-generated content can often feel sterile or detached.

While AI is good at generating logical and coherent text, it struggles to evoke emotions. Enhancing content with emotional resonance is where human creativity comes into play.

Add emotion, empathy and authenticity

After the AI generates content, go through it with a fine-tooth comb to add emotional elements that AI might miss. Infuse the text with empathy, humor, passion or urgency to make it more engaging.

- Use relatable language: Replace formal or robotic language with conversational and relatable phrases. Address your readers as if you’re speaking directly to them, making your content feel more personal.

- Connect through storytelling: Share personal stories, case studies or real-world examples that illustrate key points. These elements help bridge the gap between AI’s capabilities and human touch.

6. Personalize content for your audience

AI can analyze data to personalize content suggestions based on user preferences, but it’s your understanding of your audience that will make your content truly resonate. Use AI’s insights as a guide, but rely on your knowledge of your readers to fine-tune the final product.

Blend data-driven insights with human understanding

AI can highlight what topics are trending or what keywords to focus on, but only you can interpret this data in a way that speaks directly to your audience’s needs and values.

- Craft tailored messages: Use AI to segment your audience and suggest tailored content, but ensure the final message reflects your understanding of their motivations and desires.

- Maintain authenticity: Even when personalizing at scale, keep your brand’s authenticity intact. Avoid overly automated responses that can feel impersonal or generic.

Dig deeper: How to build and retain brand trust in the age of AI

7. Keep your creative process transparent

Transparency about your content creation process can actually enhance trust with your audience.

Letting readers know that AI aids in content creation while emphasizing the human touch reinforces the value of your insights and creativity.

Share behind-the-scenes insights

Consider sharing how you blend AI and human creativity in your workflow. This transparency can build a connection with your audience, showing them that while you use advanced tools, your unique voice remains at the core.

- Educate your audience: If AI tools are a part of your content process, explain how they help improve your work. This can position you as a forward-thinking creator who values both innovation and authenticity.

- Highlight human contributions: When presenting AI-assisted content, highlight the areas where your personal input was most significant, whether in crafting the narrative, adding context or injecting personality.

Dig deeper: AI-generated content: To label or not to label?

8. Embrace AI without losing yourself

The ultimate goal of integrating AI into content creation is to enhance your capabilities, not replace your essence. Remember that AI is a tool – an incredibly advanced and helpful one – but your creativity, judgment and personal touch are irreplaceable.

Stay true to your voice

Regularly revisit your content to ensure it aligns with your personal or brand voice. AI should amplify what makes your writing special, not dilute it.

By staying true to your style, you ensure that your audience connects with the real you, even in an AI-enhanced world.

- Continual learning and adaptation: Stay curious about how AI can improve your process, but remain committed to your core principles as a creator. Adapt your use of AI as it evolves, but always keep your voice at the forefront.

Crafting authentic content in the AI era

Balancing AI with human creativity requires a thoughtful approach that leverages technology’s strengths while preserving your authentic voice.

You can achieve the best of both worlds by treating AI as a creative partner, offering clear guidance and incorporating personal insights.

AI can enhance efficiency, improve accuracy, and spark new ideas, but it’s your creativity, empathy and unique touch that will truly resonate with your audience.

Use AI strategically, ensuring your authentic voice remains the highlight of your content. After all, while AI is here to stay, it’s human intelligence that drives meaningful connections.

Dig deeper: Future of AI in content marketing: Key trends and 7 predictions

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Saturday, October 5th, 2024

When we were young, we all wanted to sit at the adults’ table. But often we couldn’t, because of our behavior.

Growing up, we often thought we were cool, but we weren’t. Look back at your past. There are probably photos or things you did that you find questionable today.

This is exactly how we SEOs should be looking at our work. SEO has grown up a lot; we SEOs didn’t.

Our mindset is a problem when tackling challenges like building a strong brand or satisfying users better than anyone else.

What we do:

- Faking first-hand experience and looking for things we can leave out “because Google cannot measure that.”

- Buying (terrible) links that no one clicks, a practice that will always be sketchy.

This is the wrong approach.

What we should be doing:

- Proving our experience, expertise, authoritativeness and trustworthiness.