
Thursday, August 4th, 2022

When the acorn that would become the SEO industry started to grow, indexing and ranking at search engines were both based purely on keywords.

The search engine would match keywords in a query against keywords in its index, which in turn mirrored the keywords that appeared on a webpage.

Pages with the highest relevancy score would be ranked in order using one of the three most popular retrieval techniques:

- Boolean Model

- Probabilistic Model

- Vector Space Model

The vector space model became the most relevant for search engines.

I’m going to revisit the basic and somewhat simple explanation of the classic model I used back in the day in this article (because it is still relevant in the search engine mix).

Along the way, we’ll dispel a myth or two – such as the notion of “keyword density” of a webpage. Let’s put that one to bed once and for all.

The keyword: One of the most commonly used words in information science; to marketers – a shrouded mystery

“What’s a keyword?”

You have no idea how many times I heard that question when the SEO industry was emerging. And after I’d given a nutshell of an explanation, the follow-up question would be: “So, what are my keywords, Mike?”

Honestly, it was quite difficult trying to explain to marketers that specific keywords used in a query were what triggered corresponding webpages in search engine results.

And yes, that would almost certainly raise another question: “What’s a query, Mike?”

Today, terms like keyword, query, index, ranking and all the rest are commonplace in the digital marketing lexicon.

However, as an SEO, I believe it’s eminently useful to understand where they’re drawn from and why and how those terms still apply as much now as they did back in the day.

The science of information retrieval (IR) is a subset under the umbrella term “artificial intelligence.” But IR itself also comprises several subsets, including that of library and information science.

And that’s our starting point for this second part of my wander down SEO memory lane. (My first, in case you missed it, was: We’ve crawled the web for 32 years: What’s changed?)

This ongoing series of articles is based on what I wrote in a book about SEO 20 years ago, making observations about the state-of-the-art over the years and comparing it to where we are today.

The little old lady in the library

So, having highlighted that there are elements of library science under the Information Retrieval banner, let me relate where they fit into web search.

Seemingly, librarians are mainly identified as little old ladies. It certainly appeared that way when I interviewed several leading scientists in the emerging new field of “web” Information Retrieval (IR) all those years ago.

Brian Pinkerton, inventor of WebCrawler; Andrei Broder, Vice President Technology and Chief Scientist with Alta Vista, the number one search engine before Google; and Craig Silverstein, Director of Technology at Google (and notably, Google employee number one), all described their work in this new field as trying to get a search engine to emulate “the little old lady in the library.”

Libraries are based on the concept of the index card – the original purpose of which was to attempt to organize and classify every known animal, plant, and mineral in the world.

Index cards formed the backbone of the entire library system, indexing vast and varied amounts of information.

Apart from the name of the author, title of the book, subject matter and notable “index terms” (a.k.a., keywords), etc., the index card would also have the location of the book. Therefore, after a while, when you asked “the little old lady librarian” about a particular book, she would intuitively be able to point not just to the section of the library, but probably even to the shelf the book was on, providing a personalized rapid retrieval method.

However, when I explained the similarity of that type of indexing system at search engines as I did all those years back, I had to add a caveat that’s still important to grasp:

“The largest search engines are index based in a similar manner to that of a library. Having stored a large fraction of the web in massive indices, they then need to quickly return relevant documents against a given keyword or phrase. But the variation of web pages, in terms of composition, quality, and content, is even greater than the scale of the raw data itself. The web as a whole has no unifying structure, with an enormous variant in the style of authoring and content far wider and more complex than in traditional collections of text documents. This makes it almost impossible for a search engine to apply strictly conventional techniques used in libraries, database management systems, and information retrieval.”

Inevitably, what then occurred with keywords and the way we write for the web was the emergence of a new field of communication.

As I explained in the book, HTML could be viewed as a new linguistic genre and should be treated as such in future linguistic studies. There’s much more to a Hypertext document than there is to a “flat text” document. And that gives more of an indication of what a particular web page is about, both when it is being read by humans and when the text is being analyzed, classified, and categorized through text mining and information extraction by search engines.

Sometimes I still hear SEOs referring to search engines “machine reading” web pages, but that term belongs much more to the relatively recent introduction of “structured data” systems.

As I frequently still have to explain, a human reading a web page and search engines text mining and extracting information “about” a page is not the same thing as humans reading a web page and search engines being “fed” structured data.

The best tangible example I’ve found is to make a comparison between a modern HTML web page with inserted “machine readable” structured data and a modern passport. Take a look at the picture page on your passport and you’ll see one main section with your picture and text for humans to read and a separate section at the bottom of the page, which is created specifically for machine reading by swiping or scanning.

Quintessentially, a modern web page is structured kind of like a modern passport. Interestingly, 20 years ago I referenced the man/machine combination with this little factoid:

“In 1747 the French physician and philosopher Julien Offray de La Mettrie published one of the most seminal works in the history of ideas. He entitled it L’HOMME MACHINE, which is best translated as “man, a machine.” Often, you will hear the phrase ‘of men and machines’ and this is the root idea of artificial intelligence.”

I emphasized the importance of structured data in my previous article and do hope to write something for you that I believe will be hugely helpful to understand the balance between humans reading and machine reading. I totally simplified it this way back in 2002 to provide a basic rationalization:

- Data: a representation of facts or ideas in a formalized manner, capable of being communicated or manipulated by some process.

- Information: the meaning that a human assigns to data by means of the known conventions used in its representation.

Therefore:

- Data is related to facts and machines.

- Information is related to meaning and humans.

Let’s talk about the characteristics of text for a minute and then I’ll cover how text can be represented as data in something “somewhat misunderstood” (shall we say) in the SEO industry called the vector space model.

The most important keywords in a search engine index vs. the most popular words

Ever heard of Zipf’s Law?

Named after Harvard linguistics professor George Kingsley Zipf, it predicts the phenomenon that, as we write, we use familiar words with high frequency.

Zipf said his law is based on the main predictor of human behavior: striving to minimize effort. Therefore, Zipf’s law applies to almost any field involving human production.

This means we also have a constrained relationship between rank and frequency in natural language.

Most large collections of text documents have similar statistical characteristics. Knowing about these statistics is helpful because they influence the effectiveness and efficiency of data structures used to index documents. Many retrieval models rely on them.

There are patterns of occurrences in the way we write – we generally look for the easiest, shortest, least involved, quickest method possible. So, the truth is, we just use the same simple words over and over.

As an example, all those years back, I came across some statistics from an experiment where scientists took a 131MB collection (that was big data back then) of 46,500 newspaper articles (19 million term occurrences).

Here is the data for the top 10 words and how many times they were used within this corpus. You’ll get the point pretty quickly, I think:

Word frequency

the 1130021

of 547311

to 516635

a 464736

in 390819

and 387703

that 204351

for 199340

is 152483

said 148302

Remember, all the articles included in the corpus were written by professional journalists. But if you look at the top ten most frequently used words, you could hardly make a single sensible sentence out of them.
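As a rough check on those numbers, the short sketch below takes the ten counts listed above and computes two things: the share of the corpus they cover, and the product of rank and frequency, which Zipf's law suggests should stay in the same ballpark. It assumes the quoted figure of about 19 million term occurrences is close enough for a back-of-envelope estimate; the variable names are illustrative only.

```python
# Top ten words and counts, copied from the list above.
top_words = [
    ("the", 1130021), ("of", 547311), ("to", 516635), ("a", 464736),
    ("in", 390819), ("and", 387703), ("that", 204351), ("for", 199340),
    ("is", 152483), ("said", 148302),
]

# Approximate corpus size quoted in the text (an assumption for this estimate).
TOTAL_TERMS = 19_000_000

top_total = sum(count for _, count in top_words)
share = top_total / TOTAL_TERMS
print(f"Top 10 words: {top_total:,} occurrences, about {share:.0%} of the corpus")

# Zipf's law suggests frequency is roughly proportional to 1 / rank,
# so rank * frequency should stay within the same order of magnitude.
rank_times_freq = [rank * count for rank, (_, count) in enumerate(top_words, start=1)]
for (word, count), product in zip(top_words, rank_times_freq):
    print(f"{word:>5}  freq={count:>9,}  rank*freq={product:>9,}")
```

On these figures, ten words out of the whole vocabulary account for roughly a fifth of everything written in the corpus.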

Because these common words occur so frequently in the English language, search engines will ignore them as “stop words.” If the most popular words we use don’t provide much value to an automated indexing system, which words do?

As already noted, there has been much work in the field of information retrieval (IR) systems. Statistical approaches have been widely applied because of the poor fit of text to data models based on formal logics (e.g., relational databases).

So rather than requiring users to anticipate the exact words and combinations of words that may appear in documents of interest, statistical IR lets users simply enter a string of words that are likely to appear in a document.

The system then takes into account the frequency of these words in a collection of text, and in individual documents, to determine which words are likely to be the best clues of relevance. A score is computed for each document based on the words it contains and the highest scoring documents are retrieved.

I was fortunate enough to interview a leading researcher in the field of IR while doing my own research for the book back in 2001. At that time, Andrei Broder was Chief Scientist with Alta Vista (currently Distinguished Engineer at Google). We were discussing the topic of “term vectors,” and I asked if he could give me a simple explanation of what they are.

He explained to me how, when “weighting” terms for importance in the index, he may note the occurrence of the word “of” millions of times in the corpus. This is a word which is going to get no “weight” at all, he said. But if he sees something like the word “hemoglobin”, which is a much rarer word in the corpus, then this one will get some weight.
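One common way to express that intuition is the inverse document frequency, log(N / df), where N is the number of documents and df is the number of documents containing the term. The sketch below is not Broder's own formula; it uses a tiny made-up corpus (the four documents are hypothetical) simply to show "of" ending up with no weight and "hemoglobin" with a positive one.

```python
import math

# Hypothetical four-document corpus, invented for illustration only.
docs = [
    "the structure of hemoglobin",
    "a history of the web",
    "the science of information retrieval",
    "the art of search",
]

def idf(term, documents):
    """Inverse document frequency: log(N / df). Returns 0.0 for unseen terms."""
    n_docs = len(documents)
    df = sum(1 for doc in documents if term in doc.split())
    return math.log(n_docs / df) if df else 0.0

print("of:", idf("of", docs))                  # appears in every document
print("hemoglobin:", idf("hemoglobin", docs))  # appears in only one document
```

Because "of" occurs in every document, N / df is 1 and its logarithm is zero, which matches the "no weight at all" description.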

I want to take a quick step back here before I explain how the index is created, and dispel another myth that has lingered over the years. And that’s the one where many people believe that Google (and other search engines) are actually downloading your web pages and storing them on a hard drive.

Nope, not at all. We already have a place to do that, it’s called the world wide web.

Yes, Google maintains a “cached” snapshot of the page for rapid retrieval. But when that page content changes, the next time the page is crawled the cached version changes as well.

That’s why you can never find copies of your old web pages at Google. For that, your only real resource is the Internet Archive (a.k.a., The Wayback Machine).

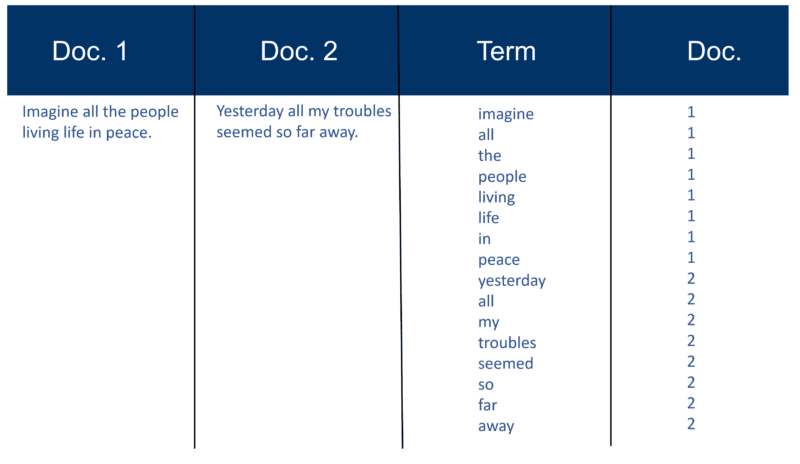

In fact, when your page is crawled it’s basically dismantled. The text is parsed (extracted) from the document.

Each document is given its own identifier along with details of the location (URL) and the “raw data” is forwarded to the indexer module. The words/terms are saved with the associated ID of the document in which they appeared.

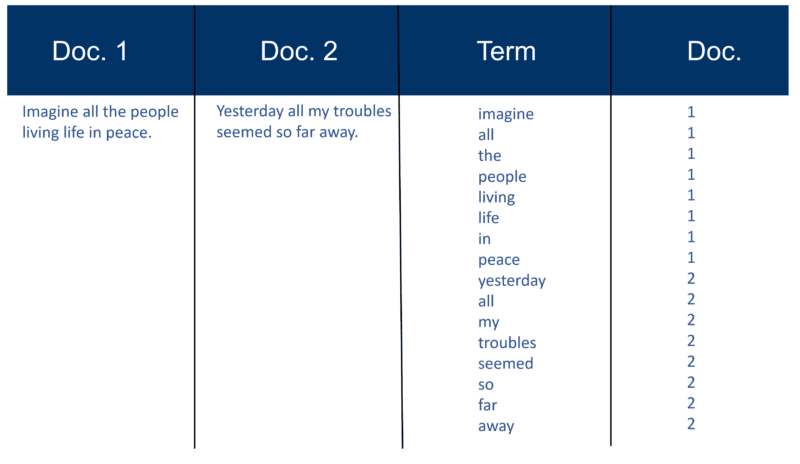

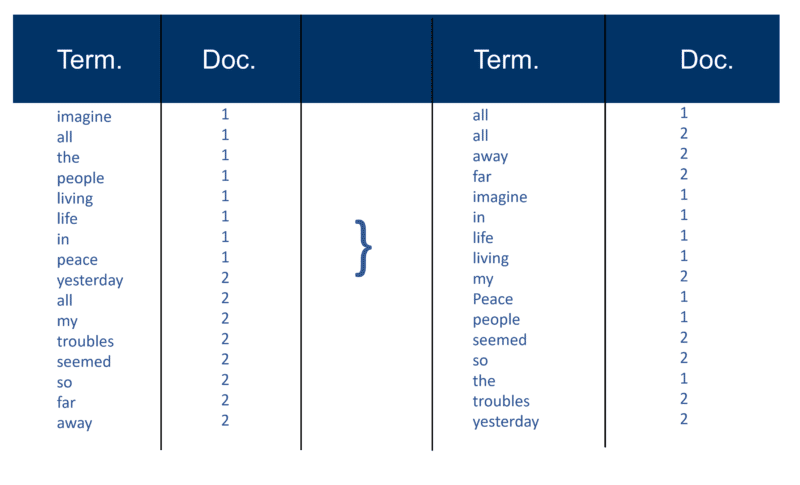

Here’s a very simple example using two Docs and the text they contain that I created 20 years ago.

Recall index construction

After all the documents have been parsed, the inverted file is sorted by terms:

In my example this looks fairly simple at the start of the process, but the postings (as they are known in information retrieval terms) to the index go in one Doc at a time. Again, with millions of Docs, you can imagine the amount of processing power required to turn this into the massive ‘term wise view’ which is simplified above, first by term and then by Doc within each term.
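The process described here can be sketched in a few lines. This is a minimal illustration, not the original two-Doc example: the Doc text below is invented, and the function name `build_inverted_index` is hypothetical.

```python
from collections import defaultdict

# Two hypothetical Docs; the text is invented, not the original example.
docs = {
    1: "search engines index the web",
    2: "the web has no unifying structure",
}

def build_inverted_index(documents):
    """Map each term to a sorted list of the Doc IDs in which it appears."""
    postings = defaultdict(set)
    for doc_id, text in documents.items():  # postings go in one Doc at a time
        for term in text.lower().split():
            postings[term].add(doc_id)
    # The "term wise view": sorted first by term, then by Doc ID within each term.
    return {term: sorted(ids) for term, ids in sorted(postings.items())}

index = build_inverted_index(docs)
for term, doc_ids in index.items():
    print(term, doc_ids)
```

Looking up a query term is then a single dictionary access, which is why the expensive sorting work is done at indexing time rather than at query time.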

You’ll note my reference to “millions of Docs” from all those years ago. Of course, we’re into billions (even trillions) these days. In my basic explanation of how the index is created, I continued with this:

Each search engine creates its own custom dictionary (or lexicon as it is – remember that many web pages are not written in English), which has to include every new ‘term’ discovered after a crawl (think about the way that, when using a word processor like Microsoft Word, you frequently get the option to add a word to your own custom dictionary, i.e. something which does not occur in the standard English dictionary). Once the search engine has its ‘big’ index, some terms will be more important than others. So, each term deserves its own weight (value). A lot of the weighting factor depends on the term itself. Of course, this is fairly straight forward when you think about it, so more weight is given to a word with more occurrences, but this weight is then increased by the ‘rarity’ of the term across the whole corpus. The indexer can also give more ‘weight’ to words which appear in certain places in the Doc. Words which appeared in the title tag <title> are very important. Words which are in <h1> headline tags or those which are in bold <b> on the page may be more relevant. The words which appear in the anchor text of links on HTML pages, or close to them are certainly viewed as very important. Words that appear in <alt> text tags with images are noted as well as words which appear in meta tags.

Apart from the original text “Modern Information Retrieval” written by the scientist Gerard Salton (regarded as the father of modern information retrieval) I had a number of other resources back in the day who verified the above. Both Brian Pinkerton and Michael Mauldin (inventors of the search engines WebCrawler and Lycos respectively) gave me details on how “the classic Salton approach” was used. And both made me aware of the limitations.

Not only that, Larry Page and Sergey Brin highlighted the very same in the original paper they wrote at the launch of the Google prototype. I’m coming back to this as it’s important in helping to dispel another myth.

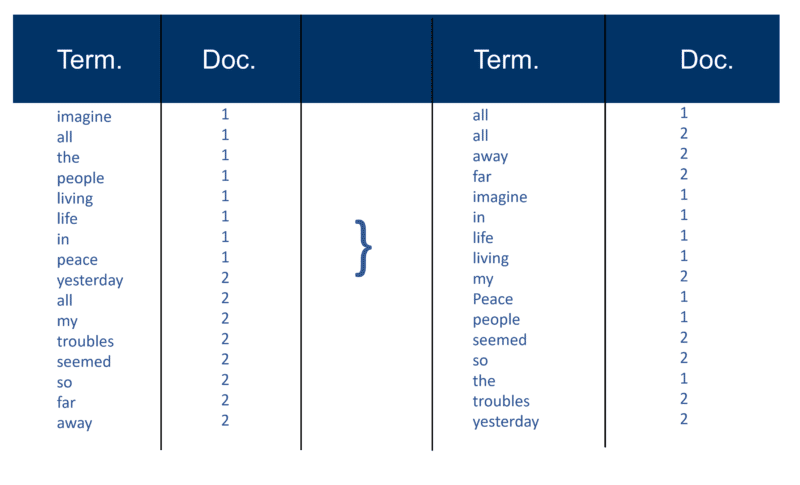

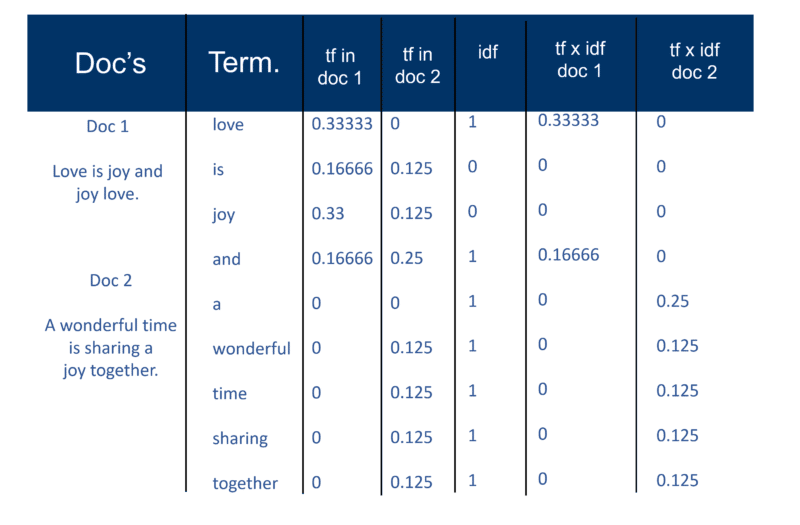

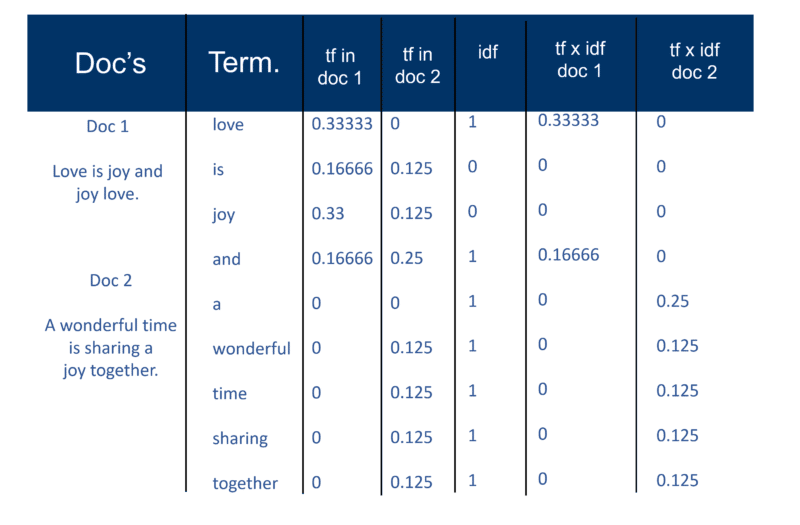

But first, here’s how I explained the “classic Salton approach” back in 2002. Be sure to note the reference to “a term weight pair.”

Once the search engine has created its ‘big index’ the indexer module then measures the ‘term frequency’ (tf) of the word in a Doc to get the ‘term density’ and then measures the ‘inverse document frequency’ (idf) which is a calculation of the frequency of terms in a document; the total number of documents; the number of documents which contain the term. With this further calculation, each Doc can now be viewed as a vector of tf x idf values (binary or numeric values corresponding directly or indirectly to the words of the Doc). What you then have is a term weight pair. You could transpose this as: a document has a weighted list of words; a word has a weighted list of documents (a term weight pair).
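To make the "vector of tf x idf values" concrete, here is a minimal sketch using one common textbook formulation: raw term counts for tf and log(N / df) for idf. Many variants exist, and this is not a claim about what any particular search engine computes. The three Docs are hypothetical.

```python
import math
from collections import Counter

# Hypothetical three-Doc corpus, invented for illustration.
docs = {
    "d1": "hemoglobin carries oxygen in the blood",
    "d2": "the web is a collection of documents",
    "d3": "the blood test measures hemoglobin",
}

def tf_idf_vectors(documents):
    """Return {doc_id: {term: tf * idf}} using raw counts and log(N / df)."""
    tokenized = {doc_id: text.split() for doc_id, text in documents.items()}
    n_docs = len(tokenized)
    # Document frequency: the number of Docs that contain each term.
    df = Counter(term for terms in tokenized.values() for term in set(terms))
    vectors = {}
    for doc_id, terms in tokenized.items():
        tf = Counter(terms)
        vectors[doc_id] = {t: tf[t] * math.log(n_docs / df[t]) for t in tf}
    return vectors

vectors = tf_idf_vectors(docs)
for term, weight in sorted(vectors["d1"].items(), key=lambda kv: -kv[1]):
    print(f"{term:>10}  {weight:.3f}")
```

Each entry in a Doc's dictionary is one term weight pair. Read the same data by term instead of by Doc and you get the transposed view: a word with a weighted list of documents.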

The Vector Space Model

Now that the Docs are vectors with one component for each term, what has been created is a ‘vector space’ where all the Docs live. But what are the benefits of creating this universe of Docs which all now have this magnitude?

In this way, if Doc ‘d’ (as an example) is a vector then it’s easy to find others like it and also to find vectors near it.

Intuitively, you can then determine that documents, which are close together in vector space, talk about the same things. By doing this a search engine can then create clustering of words or Docs and add various other weighting methods.

However, the main benefit of using term vectors for search engines is that the query engine can regard a query itself as being a very short Doc. In this way, the query becomes a vector in the same vector space and the query engine can measure each Doc’s proximity to it.
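A minimal sketch of that idea follows, using cosine similarity as the proximity measure. To keep it short, the vectors hold raw term counts as a stand-in for tf x idf weights, and the Docs are hypothetical.

```python
import math
from collections import Counter

def to_vector(text):
    """Represent a text as raw term counts (a simple stand-in for tf x idf weights)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine of the angle between two sparse term vectors."""
    dot = sum(weight * b[term] for term, weight in a.items() if term in b)
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hypothetical Docs, invented for illustration.
docs = {
    "d1": "beethoven fifth symphony recording review",
    "d2": "symphony orchestra concert schedule",
    "d3": "search engine indexing basics",
}

# The query is treated as just another (very short) Doc in the same space.
query = to_vector("beethoven fifth symphony")
ranked = sorted(docs, key=lambda d: cosine(query, to_vector(docs[d])), reverse=True)
print(ranked)
```

The Doc sharing all three query terms ranks first, the one sharing a single term second, and the unrelated Doc last.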

The Vector Space Model allows the user to query the search engine for “concepts” rather than a pure “lexical” search. As you can see here, even 20 years ago the notion of concepts and topics as opposed to just keywords was very much in play.

OK, let’s tackle this “keyword density” thing. The word “density” does appear in the explanation of how the vector space model works, but only as it applies to the calculation across the entire corpus of documents – not to a single page. Perhaps it’s that reference that made so many SEOs start using keyword density analyzers on single pages.

I’ve also noticed over the years that many SEOs, who do discover the vector space model, tend to try and apply the classic tf x idf term weighting. But that’s much less likely to work, particularly at Google, as founders Larry Page and Sergey Brin stated in their original paper on how Google works – they emphasize the poor quality of results when applying the classic model alone:

“For example, the standard vector space model tries to return the document that most closely approximates the query, given that both query and document are vectors defined by their word occurrence. On the web, this strategy often returns very short documents that are only the query plus a few words.”
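The effect described in that quote is easy to reproduce in a toy setting. The sketch below again uses raw term counts rather than tf x idf, and both documents are invented; it shows only the direction of the bias, not what any real engine would score.

```python
import math
from collections import Counter

def cosine(text_a, text_b):
    """Cosine similarity between two texts using raw term counts."""
    a, b = Counter(text_a.split()), Counter(text_b.split())
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(c * c for c in a.values())) * math.sqrt(sum(c * c for c in b.values()))
    return dot / norm if norm else 0.0

query = "beethoven fifth symphony"

# Hypothetical pages: one is little more than the query, the other is substantive.
short_doc = "beethoven fifth symphony here"
long_doc = (
    "an analysis of beethoven fifth symphony covering the history of its "
    "composition the structure of each movement and notable recordings by "
    "major orchestras"
)

short_score = cosine(query, short_doc)
long_score = cosine(query, long_doc)
print(f"short page: {short_score:.3f}  long page: {long_score:.3f}")
```

Every extra word in the longer page pulls its vector away from the query, so the near-empty page scores higher even though it says almost nothing.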

There have been many variants to attempt to get around the ‘rigidity’ of the Vector Space Model. And over the years with advances in artificial intelligence and machine learning, there are many variations to the approach which can calculate the weighting of specific words and documents in the index.

You could spend years trying to figure out what formulae any search engine is using, let alone Google (although you can be sure which one they’re not using as I’ve just pointed out). So, bearing this in mind, it should be clear that trying to manipulate the keyword density of web pages when you create them is a somewhat wasted effort.

Solving the abundance problem

The first generation of search engines relied heavily on on-page factors for ranking.

But the problem with using purely keyword-based ranking techniques (beyond what I just mentioned about Google from day one) is something known as “the abundance problem”: as the web grows exponentially, so does the number of documents containing the same keywords.

And that poses the question on this slide which I’ve been using since 2002:

If a music student has a web page about Beethoven’s Fifth Symphony and so does a world-famous orchestra conductor (such as Andre Previn), who would you expect to have the most authoritative page?


You can assume that the orchestra conductor, who has been arranging and playing the piece for many years with many orchestras, would be the most authoritative. But working on keyword ranking techniques alone, it’s just as likely that the music student could be the number one result.

How do you solve that problem?

Well, the answer is hyperlink analysis (a.k.a., backlinks).

In my next installment, I’ll explain how the word “authority” entered the IR and SEO lexicon. And I’ll also explain the original source of what is now referred to as E-A-T and what it’s actually based on.

Until then – be well, stay safe and remember what joy there is in discussing the inner workings of search engines!

The post Indexing and keyword ranking techniques revisited: 20 years later appeared first on Search Engine Land.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Thursday, August 4th, 2022

You’re invited!

Join Martin Salle, enterprise sales leader from Algolia and AWS, for a special conversation and hands-on solution demonstration.

In this session:

Leaders in every industry use Algolia Search and Discovery to create dynamic digital experiences that drive business results.

Algolia’s flexible search-as-a-service and full suite of APIs allow teams to easily develop tailored, fast search and discovery experiences that delight and convert. To date, Algolia has over 10,000 customers that perform over 10 billion search queries per month.

For marketers, Algolia offers secure, reliable, and scalable tools to easily manage every step of the omnichannel user experience without the need for IT.

You will:

- Learn about major challenges marketers are facing today

- Hear how Algolia can help your organization

- See an on-demand demo of the solution in practice

Join the session here.

Speaker bio:

Martin joined Algolia from Google over five years ago as one of its first employees. He has helped scale the customer success and sales teams ever since. Today, Martin looks after Algolia’s enterprise customers focusing on the Fortune 100.

About Algolia:

Algolia provides an API platform for dynamic experiences that enable organizations to predict intent and deliver results. Algolia achieves this with an API-first approach that allows developers and business teams to surface relevant content when wanted — satisfying the demand for instant gratification — and building and optimizing online experiences that enhance online engagement, increase conversion rates, and enrich lifetime value to generate profitable growth. More than 10,000 companies, including Under Armour, Lacoste, Birchbox, Stripe, Slack, Medium and Zendesk, rely on Algolia to manage over 1.5 trillion search queries a year. Algolia is headquartered in San Francisco with offices in New York, Atlanta, Paris, London and Bucharest. To learn more, visit www.algolia.com.

The post Personalize each digital customer experience appeared first on Search Engine Land.


Thursday, August 4th, 2022

Meta has announced that it is shifting its focus to Reels, and its live shopping feature will be sunset on October 1.

What it means. After October 1 users will still be able to use Facebook Live to broadcast events, but you’ll no longer be able to host new or scheduled live shopping events. The feature was created two years ago as a way for creators and brands to connect with shoppers, find new buyers, and connect with viewers.

Facebook says. “As consumers’ viewing behaviors are shifting to short-form video, we are shifting our focus to Reels on Facebook and Instagram, Meta’s short-form video product,” the company said in the blog post. “If you want to reach and engage people through video, try experimenting with Reels and Reels ads on Facebook and Instagram. You can also tag products in Reels on Instagram to enable deeper discovery and consideration. If you have a shop with checkout and want to host Live Shopping events on Instagram, you can set up Live Shopping on Instagram.”

Following in TikTok’s footsteps. Last month TikTok announced it was abandoning plans to bring a live QVC-style shopping video feature to the US. The announcement came after a disastrous UK launch, although the format is popular in Asia.

Read the blog post. You can read more details about the announcement on Facebook’s blog post.

Why we care. Brands and creators that used Facebook live shopping to expand their reach and promote products will have to find another way. However, it seemed like live shopping served a different purpose and demographic, so swapping it for Reels doesn’t make much sense at the moment.

Since you can tag products in Reels, we suggest that brands and advertisers who use videos to promote products shift their focus to Reels and cross their fingers.

The post The Facebook live shopping feature is going away appeared first on Search Engine Land.


Wednesday, August 3rd, 2022

Google has just announced the Google tag – a simple, centralized, reusable tag that’s built to improve data quality and adopt new features without having to modify and install more code. The Google tag is an upgrade and rebrand of the global site tag.

What is the Google tag. The new Google tag is a single, reusable tag built on top of your existing gtag.js implementations. Google says the tag will roll out in the next week and should be placed on all pages of your website. They claim the tag will “help you confidently measure impact and preserve user trust.”

New codeless features. According to Christophe Combette, Director, Ads Measurement Products, Google, the new site tag will have more codeless features such as analyzing events, managing the tags from one central screen, and using a single line of code to enable more products, accounts and features from the interface. There will also be more privacy-safe measurement capabilities, better data quality, and increased ease of use. Users will also be able to use their existing Google tag installation when setting up another Google product or account or creating new conversion actions, instead of configuring additional code each time.

Will the global site tag be sunset. While Combette says the global site tag branding will be sunset, the tag itself will not. Sites with the global site tag installed will continue to operate as normal: the tag will continue to work and implementations will not change. The global site tag will also gain new capabilities if you want to adjust settings or create new accounts.

Advertisers with multiple instances of gtag.js can now combine those tags and centrally manage their settings in the Google tag screens in Google Ads and Google Analytics. Since it’ll be easier to set up sitewide tagging and combine or reuse tags, you can easily increase the number of tagged pages with consistent configuration. This helps improve measurement, leading to better-quality customer insights. You can also now manage user access to your tag settings across products in one dedicated place, giving you more control over who has access to critical measurement settings.

What products will have the new Google tag. Google says, “The Google tag already works with many Google products such as Google Ads, Campaign Manager, Display & Video 360, Search Ads 360, Google Analytics, and more. The new codeless tagging capabilities of the Google tag will initially be available in Google Ads and Google Analytics, and will expand to other products over time.”

What Google says. You can read about the new Google tag, its capabilities, and best practices here.

Why we care. The release of a new tag may be confusing for advertisers. It’s unclear whether advertisers who are using the global site tag should leave theirs installed, or replace it with the new Google tag. It’s also unclear what happens if users decide not to install the new Google tag. If the global site tag will feature the new capabilities, what incentive do advertisers have to use the new one? However, the new Google tag seems more intuitive and easier to manage. If Google can make the transition smooth and uncomplicated, then it could be a win.

Q&A from Christophe Combette, Director, Ads Measurement Products, Google

What is tagging and why is it so important?

Understanding the effectiveness of online advertising and on-site behavior with digital marketing and measurement tools requires advertisers to measure various interactions on their websites. It is the foundation of measurement and also is essential in enabling businesses to implement privacy-centric measurement tools, such as Consent Mode or the Privacy Sandbox in the coming months. Tagging is critical to an enduring and effective measurement strategy.

Tagging is the underlying code that powers the sitewide measurement in Google Ads and Google Analytics, so advertisers, businesses, and marketers can better understand customer activity and glean actionable insights. To get a bit more technical, tags are the code snippets that make this online measurement possible. Tagging typically involves a marketer or developer adding a base or global tag for each measurement tool that they use to all pages of their website, as well as sometimes adding event tags to measure key interactions such as submitting a contact form or completing an online purchase.

What is the new Google tag?

Google tag is an expansion and rebrand of the existing global site tag. In a time where measurement is more essential than ever, it’s critical that businesses are able to set up a strong foundation. To help advertisers, marketers, and businesses continue to measure conversions while adapting to ecosystem changes and respecting users’ privacy, it’s essential for them to have high quality, site-wide tagging.

Google tag is – at its core – one tag. For example, if an advertiser has two Google Ads accounts, they only need to tag once, where previously multiple tags would have been needed. This works the same for an advertiser who uses both Google Ads and Google Analytics.

The new Google tag includes codeless features and experiences for customers that will allow them to take advantage of more privacy-safe measurement capabilities, better data quality, and increased ease of use.

Why is Google tag replacing the global site tag?

The Google tag is an evolution of the global site tag and built upon its existing capabilities. It is a more efficient and seamless experience for customers and it will enable businesses, both big and small, to more effectively manage tags and more easily leverage privacy-safe measurement solutions.

Is there a deprecation date for the global site tag?

Advertisers, marketers and businesses who are currently using the global site tag, will not need to make any changes. While the global site tag branding will be sunset, tag implementations do not change and existing tag implementations will continue to work. Those with global site tag will now have new capabilities available to them should they need to adjust tag settings, create new accounts within existing products, or begin using new products (i.e. Google Ads or Google Analytics).

How is the new Google tag different from the current global site tag?

The new Google tag will incorporate more codeless features where previously in-page code changes may have been required. For example, prior to Google tag, each event you wanted to understand would need to be set up through in-page coding – something that takes both expertise and bandwidth. With the new codeless capabilities, advertisers and businesses can do that all through the UI.

Additionally, Google Analytics and Google Ads customers will be able to combine and manage their tags from one central location in newly introduced “Google tag” screens. For advertisers without deep technical knowledge (especially those with multiple accounts or using multiple Google products), this makes it easier and faster to manage your tags and improves consistency.

For example, prior to the Google tag, when getting started with Google Analytics or Google Ads, an advertiser was instructed to install the global site tag (gtag.js). If they wanted to set up a second product or account, they would be required to add an additional line of code to the gtag.js implementation to begin measuring with that second product. With the new Google tag experience, customers will be able to use a single installation of gtag.js to enable more products, accounts and features from the product interface.

Is the implementation of the new tag the same, or how will it change?

Generally, the first implementation of the Google tag on a website is the same as with the global site tag. The key difference is that the tag now only needs to be installed once. Setting up a second or third product or account will not require additional tag implementation. Additionally, we’re working with some of the top Content Management Systems and web platforms to make simpler integrations available – so, if an advertiser or business uses a popular CMS, they may not need to implement any code at all.

Will the Google tag be used for all Google products, or just Google Ads? If not all products, which Google products can the tag be used for?

The Google tag already works with many Google products such as Google Ads, Campaign Manager, Display & Video 360, Search Ads 360, Google Analytics, and more. The new codeless tagging capabilities of the Google tag will initially be available in Google Ads and Google Analytics, and will expand to other products over time.

How does it work (per the same support documentation)? What’s different, and does anything stay the same?

When it comes to what’s different, it’s all about simplification, ease of use, flexibility, and improved features. Customers who are currently set up and are not looking to make any expansions will not see any changes, though more features and flexibility will be immediately available to them.

For new customers, or customers who are looking to set up a second product or account, they will now be able to use the one existing tag to expand, eliminating a number of steps along the way.

What are best practices for marketers using the Google tag?

First and foremost, the Google tag should be installed on all pages of an advertiser, business, or marketer’s site. Doing this ensures that they get the best possible measurement from their Google tag and enables them to adopt new features as they become available.

Next, if you already have multiple tags installed on your site, consider combining them so that you can manage settings centrally and take advantage of combined page coverage.

Organizations with custom-built websites should think about working with developers to implement events and parameters for key user actions. While more and more measurement will be possible codelessly, working with developers to instrument important events and data that would be otherwise difficult to capture codelessly (such as ecommerce data from custom websites) can help achieve richer measurement.
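As an illustration of what "events and parameters for key user actions" can look like on a custom ecommerce site, here is a hedged sketch that builds the arguments for a purchase event. The `Order` and `OrderLine` types and the `buildPurchaseEvent` helper are hypothetical, and the parameter names (`transaction_id`, `value`, `currency`, `items`) follow Google Analytics' recommended purchase event as I understand it, so check them against the current documentation before relying on them.

```typescript
// Hypothetical shapes for an order coming out of a custom-built store.
interface OrderLine {
  sku: string;
  name: string;
  unitPrice: number;
  quantity: number;
}

interface Order {
  id: string;
  currency: string;
  lines: OrderLine[];
}

// Builds the argument list a developer would pass to gtag(...) for a purchase.
// Parameter names are assumed from GA's recommended ecommerce events.
function buildPurchaseEvent(order: Order): [string, string, { [key: string]: unknown }] {
  const value = order.lines.reduce(function (sum, line) {
    return sum + line.unitPrice * line.quantity;
  }, 0);

  return ["event", "purchase", {
    transaction_id: order.id,
    currency: order.currency,
    value: value,
    items: order.lines.map(function (line) {
      return {
        item_id: line.sku,
        item_name: line.name,
        price: line.unitPrice,
        quantity: line.quantity,
      };
    }),
  }];
}

// Example order with placeholder data.
const purchaseArgs = buildPurchaseEvent({
  id: "T-1001",
  currency: "USD",
  lines: [
    { sku: "BK-1", name: "Custom bike", unitPrice: 500, quantity: 1 },
    { sku: "HL-2", name: "Helmet", unitPrice: 40, quantity: 2 },
  ],
});

console.log(purchaseArgs);
```

On a live page the resulting array would be spread into the site's `gtag` function. This is the kind of data that is hard to capture codelessly, because only the site's own code knows the order contents.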

Are there any things to watch out for? (E.g., configuration? Could having both the global site tag and the new Google tag in your code at the same time cause issues?)

The Google tag will make the tagging experience more seamless for customers. As previously mentioned, customers with multiple existing tags should consider combining them in the new Google tag screen in Google Ads and Analytics to centralize management of settings.

After two Google tags have been combined, for example, using an ID from either original tag will work to load the combined tag. When two instances of a combined tag run on a given page, only the first on-page configuration will take effect. Customers who use many on-page configurations in their global site tags today should be thoughtful about updating tagging settings when combining tags. The new Google tag screens will guide users through these actions.
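The "first on-page configuration wins" rule is easier to see with a toy model. The sketch below is only my reading of the behavior described above, not Google's implementation: the `ConfigCall` type, the `effectiveConfigs` function and all IDs are hypothetical, and `send_page_view` is used simply as an example setting.

```typescript
// One on-page configuration: a tag ID plus whatever settings were passed with it.
interface ConfigCall {
  id: string;
  settings: { [key: string]: unknown };
}

// Toy model: IDs listed together in a group belong to one combined tag.
// For each combined tag, only the first on-page configuration is kept.
function effectiveConfigs(calls: ConfigCall[], combinedGroups: string[][]): ConfigCall[] {
  // Map every ID to a key shared by all IDs in its combined group.
  const groupKeyById: { [id: string]: string } = {};
  for (const group of combinedGroups) {
    for (const id of group) {
      groupKeyById[id] = group.join("+");
    }
  }

  const seen: { [key: string]: boolean } = {};
  const effective: ConfigCall[] = [];
  for (const call of calls) {
    const mapped = groupKeyById[call.id];
    const key = mapped !== undefined ? mapped : call.id;
    if (seen[key] === true) {
      continue; // a later configuration for the same combined tag is ignored
    }
    seen[key] = true;
    effective.push(call);
  }
  return effective;
}

// Placeholder IDs: the first two have been combined, the third stands alone.
const kept = effectiveConfigs(
  [
    { id: "G-EXAMPLE1", settings: { send_page_view: false } },
    { id: "AW-EXAMPLE2", settings: { send_page_view: true } },
    { id: "DC-EXAMPLE3", settings: {} },
  ],
  [["G-EXAMPLE1", "AW-EXAMPLE2"]]
);

console.log(kept.map(function (call) { return call.id; }));
```

In this model the settings passed with `AW-EXAMPLE2` are dropped because its combined tag was already configured through `G-EXAMPLE1`, which is why sites with many on-page configurations should review them before combining tags.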

Is it compatible with Google Tag Manager?

Customers who use Google Tag Manager should not need to make any changes today. We will have more to share about how these Google tag capabilities work with Google Tag Manager and how customers with the Google tag can upgrade to Google Tag Manager in the coming months.

Will businesses/marketers get any new types of data as a result of this change?

The Google tag will make it easier for businesses/marketers to measure various data and interactions on their websites, but the types of data they get will be consistent with what’s possible today.

The post Google releases simple, centralized tag solution appeared first on Search Engine Land.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Wednesday, August 3rd, 2022

Twitter announced last week they were testing a new feature that would allow images, videos and GIFs in one tweet.

How it works. Multimedia tweets cannot be created or viewed on desktop at the moment and are only available on the Twitter app. Additionally, the feature is only available to a handful of users, with no word on when the company plans to make it public.

I wasn’t able to find any examples of a multimedia tweet on my own feed and didn’t have access to create my own, but the screenshot below from Alessandro Paluzzi shows what it looks like.

What Twitter is saying. In a statement reported to TechCrunch, Twitter said:

We’re testing a new feature with select accounts for a limited time that will allow people to mix up to four media assets into a single tweet, regardless of format. We’re seeing people have more visual conversations on Twitter and are using images, GIFS and videos to make these conversations more exciting. With this test we’re hoping to learn how people combine these different media formats to express themselves more creatively on Twitter beyond 280 characters.

Tests continue on the edit feature. Additionally, Twitter continues to test the edit feature on a limited number of accounts. Jane Manchun Wong found that when you edit a Tweet, the original one doesn’t disappear, but shows a notification that says “There’s a new version of this Tweet” just below it, and the new, edited Tweet is then shown above.

Embedded Tweets will show whether it’s been edited, or whether there’s a new version of the Tweet

When a site embeds a Tweet and it gets edited, the embed doesn’t just show the new version (replacing the old one). Instead, it shows an indicator there’s a new version pic.twitter.com/mAz5tOiyOl

— Jane Manchun Wong (@wongmjane) August 1, 2022

Initial testing. Twitter began testing the edit feature back in April with Twitter Blue subscribers only. Of the 4 million voters who responded to an Elon Musk poll, 73% said they wanted an edit option. The day after the poll, Twitter responded that they were in fact testing an edit feature and that it had nothing to do with Musk’s poll.

Why we care. Twitter users have been asking for an edit option for a long time. There is no indication that non-Twitter Blue users will have access, so this could be Twitter’s way of enticing users into paying for the subscription. As for multimedia posts, it could be a fun feature for brands to showcase their products, but likely won’t move the revenue needle much.

The post Twitter continues testing the edit button, allowing multimedia tweets appeared first on Search Engine Land.

Wednesday, August 3rd, 2022

Marketers are being asked to do more with available resources while still delivering against tough targets. Pivoting this from a no-win mission to a job-well-done scenario is a no-brainer.

During this webinar, you’ll learn how Hertz has tackled its ESP sprawl, grown its marketing team and scaled operations through consolidation efforts.

Register today for “Why Finding the Right Platform is the Key to Winning in Email Marketing,” presented by Salesforce.

The post Email marketing webinar: Find the right platform appeared first on Search Engine Land.

Wednesday, August 3rd, 2022

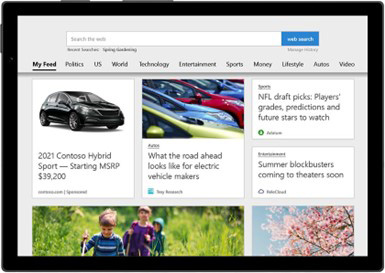

Microsoft has just announced nine (9!) new updates on their blog. The updates include new Automotive Ads, vertical-based ads, and more. Let’s dive in.

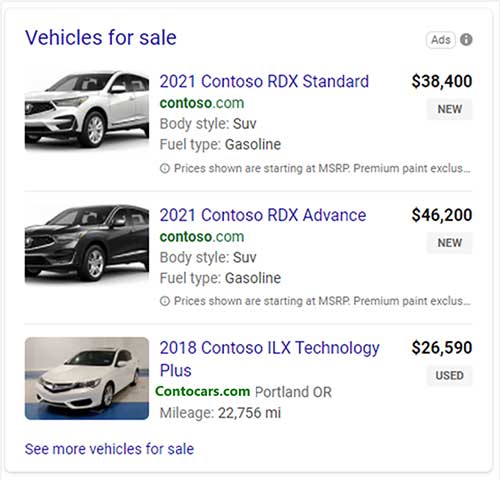

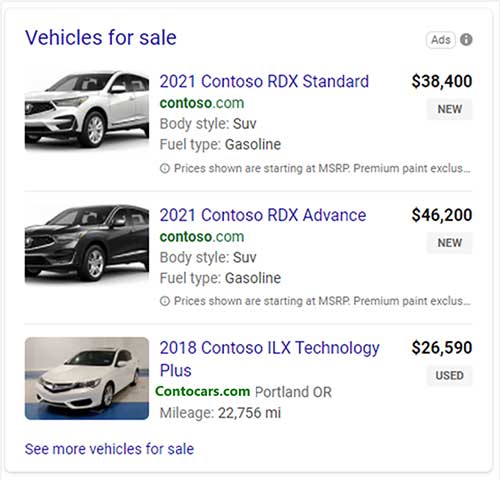

Automotive Ads

The new Automotive Ads are ad formats specific to the auto industry. Each ad will display a photo of the vehicle for sale along with its year, make, model, condition, dealer website, price, and a short description. After tests in North America and Europe, the ads will be rolled out to advertisers in the Asia Pacific and Latin American markets in the coming weeks.

Learn more about Automotive Ads and how to set them up here.

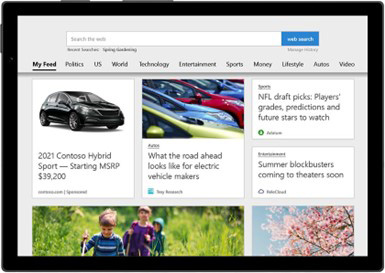

Vertical-based ads

Vertical ads are created from dynamic feeds and appear in different formats depending on what the user is searching for. The ads use intent data and the user’s needs to create the ad. Microsoft AI uses the data feed to source the necessary information to display in the ad. Microsoft claims that the new ad format will minimize the time and resources required to set up and manage campaigns.

Learn more about vertical-based ads and how to set them up here.

Audience network updates

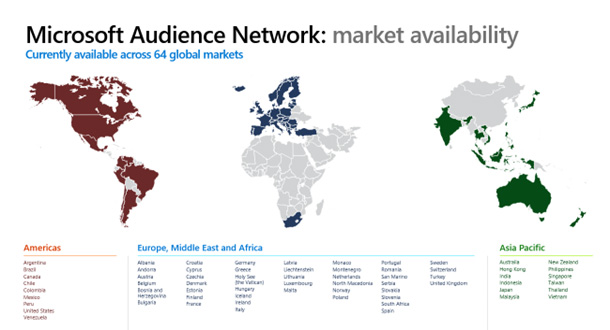

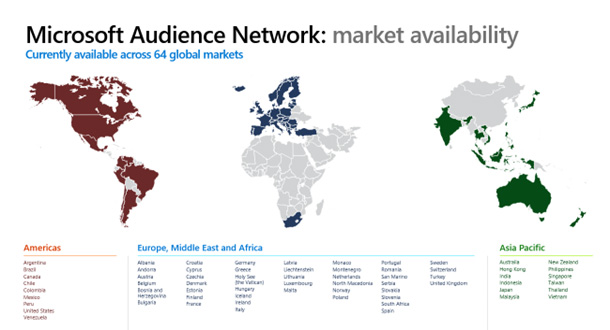

Market expansions

The Microsoft Audience Network connects advertisers to MSN, Outlook, Microsoft Edge, and other publisher partners. Now Microsoft is expanding to 64 markets, with more coming later this year.

New ad formats

Dynamic Remarketing will be expanded to verticals beyond retail. You can now use Dynamic Remarketing for travel, auto, and event ads. Once the dynamic feed is set up, advertisers will have two options for setting up Dynamic Remarketing:

- Standard Universal Event Tracking (UET). Using the UET tag, you can send ads to users who have been on your website. The tag will not need to be updated if the product ID is present in the page URL, since Microsoft can tell that a visitor has been to the website when the product ID shows in the URL.

- UET with additional parameters. Updating your UET tag to pass back additional parameters will give advertisers access to more audiences. With those parameters, you can access:

  - General visitors

  - Product searchers

  - Product viewers

  - Cart abandoners

  - Past buyers
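To show how extra page-level signals could separate those five audiences, here is a rough sketch. It is an assumption about the general logic, not Microsoft's actual rules: the `PageType` values and the `classifyVisitor` function are hypothetical, and the real UET parameter names are defined in Microsoft's documentation and are not reproduced here.

```typescript
// Hypothetical page types a site might report alongside the product ID.
type PageType = "home" | "search" | "product" | "cart" | "purchase" | "other";

type Audience =
  | "General visitors"
  | "Product searchers"
  | "Product viewers"
  | "Cart abandoners"
  | "Past buyers";

// Assigns a visitor to the deepest stage reached, given the page types seen.
// Returns null when the visitor has not been on the site at all.
function classifyVisitor(pagesSeen: PageType[]): Audience | null {
  if (pagesSeen.length === 0) {
    return null;
  }
  if (pagesSeen.indexOf("purchase") !== -1) {
    return "Past buyers";
  }
  if (pagesSeen.indexOf("cart") !== -1) {
    return "Cart abandoners"; // reached the cart but never purchased
  }
  if (pagesSeen.indexOf("product") !== -1) {
    return "Product viewers";
  }
  if (pagesSeen.indexOf("search") !== -1) {
    return "Product searchers";
  }
  return "General visitors";
}

console.log(classifyVisitor(["home", "search", "product"]));
console.log(classifyVisitor(["product", "cart"]));
```

The point of the sketch is that a bare product ID in the URL cannot distinguish a cart abandoner from a product viewer, which is why the second option asks you to pass additional parameters.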

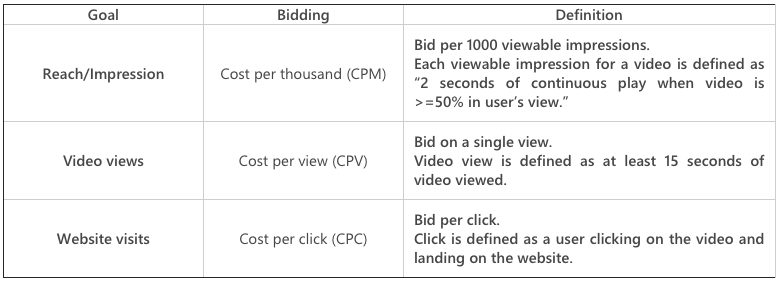

Additional bidding solutions

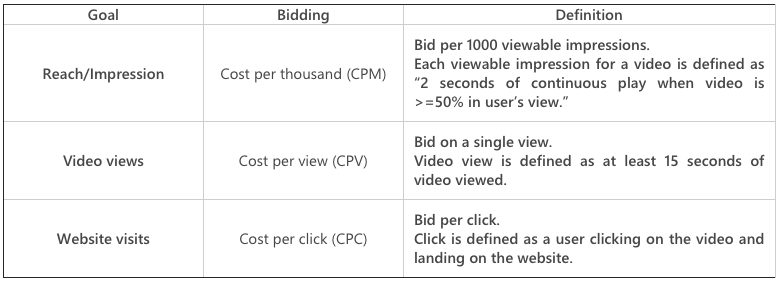

For video ads, there are now three different bidding options.

Additionally, a pilot for automated bidding has just been launched. You can use Enhanced CPC to help maximize conversions on audience campaigns. Microsoft says that more automated bidding solutions will be released soon.

Customer data platform integrations for Customer Match

Last month Microsoft announced that they were expanding Customer Match to new markets. Today they announced that if you use a Customer Data Platform you can connect it to Microsoft Advertising to import your customer lists. Current integrations include Amperity and Adobe Ad Cloud for Search. More integrations will be available soon.

Expanding audience targeting to new markets

In-market Audiences are now available in:

Latin America: Aruba, Bahamas, Bolivia, Cayman Islands, Costa Rica, Dominica, Dominican Republic, Ecuador, El Salvador, French Guiana, Guatemala, Guyana, Haiti, Honduras, Martinique, Montserrat, Panama, Paraguay, Puerto Rico, Trinidad and Tobago, and Uruguay.

Asia Pacific: Bangladesh, Brunei, Fiji, French Polynesia, Guam, Maldives, Mongolia, Nepal, New Caledonia, Papua New Guinea, and Sri Lanka.

Similar Audiences are now available in more markets

Albania, Bosnia and Herzegovina, Hong Kong, Iceland, Japan, Montenegro, North Macedonia, Serbia, South Africa, Taiwan, and Turkey.

Smart Campaigns are expanding to new markets

Microsoft is now piloting Smart Campaigns in new markets: France, Germany, Ireland, Italy, The Netherlands, New Zealand, and Singapore.

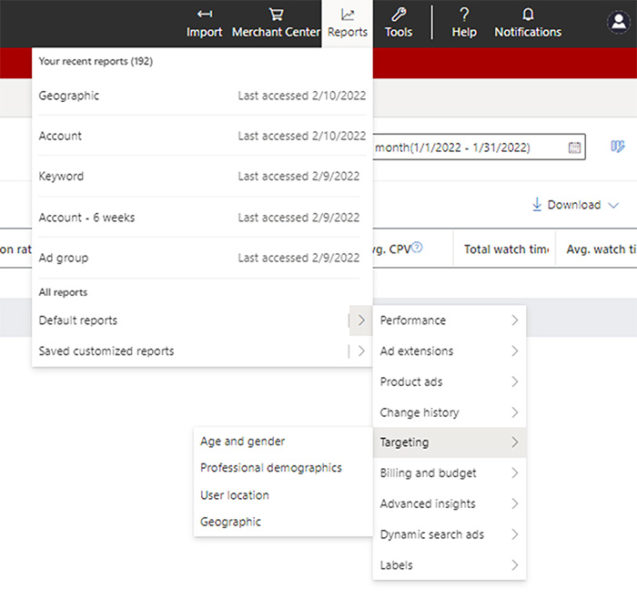

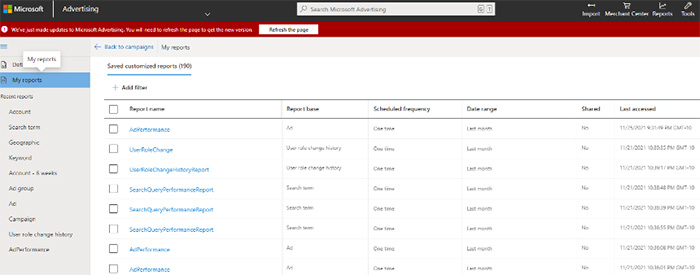

Reporting experience updates

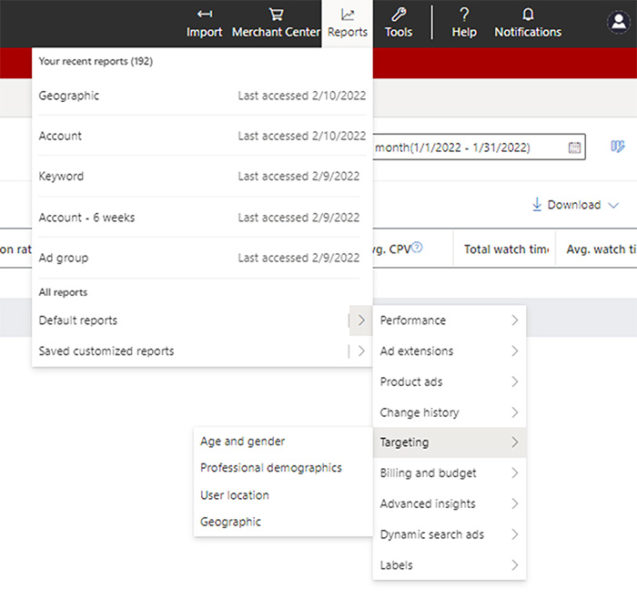

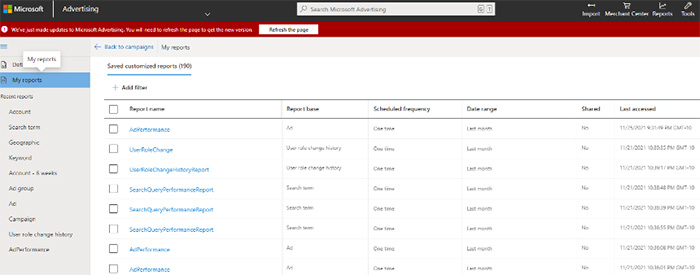

There is a new drop-down menu that will help advertisers access all reports. Those reports can be found on the updated landing page grid. You can also filter the grid to quickly access the reports you need.

RSA reminder!

Microsoft also added a reminder that August is the last month to migrate your Expanded Text Ads (ETAs) to Responsive Search Ads. Starting on August 29, RSAs will be the only search ad type that can be created or edited in standard search campaigns. Existing Expanded Text Ads will still serve, but you won’t be able to edit or add them.

You can read the entire announcement from Microsoft, with all of the updates here.

Why we care. Microsoft continues to release numerous updates and products, which is giving Google a run for its money. Microsoft and their partner networks reach about 724 million users each month and these new features could be good news for advertisers looking for Google alternatives, or to expand their impression share.

The post Microsoft announces Automotive Ads, Audience Network expansion, and 7 other updates appeared first on Search Engine Land.

Monday, August 1st, 2022

Whenever I visit LinkedIn lately, I almost always see an update from Sara Taher, the SEO Team Lead at Flywheel Digital.

And many of her posts tend to get a lot of engagement. Her updates regularly attract hundreds of reactions, comments and shares.

So what’s her secret to posting effectively on LinkedIn and being so visible? Has she figured out the LinkedIn algorithm?

Taher was kind enough to give a little insight into her LinkedIn posting strategy. What follows are highlights of our Q&A. You may notice that much of the advice Taher shares aligns with LinkedIn’s advice for creators.

Pick your LinkedIn goal(s). Taher’s biggest goal on LinkedIn? To build her personal brand.

- “My audience is SEO and digital marketers mainly, but I was able to attract some tech-savvy business owners and some marketing managers looking for SEO consultants,” Taher said.

Keys to a successful LinkedIn post. Taher said she doesn’t use a schedule. She writes based on inspiration. Very often, Taher said she gets an idea for a post from something she is actively working on.

So what formula works for Taher?

- “The formula is four things. Provide 1. relatable and 2. actionable information. 3. Be yourself and 4. be real.”

- “SEOs can understand my posts, I make sure it’s easy and simple to understand. They also find it relevant to everyday SEO tasks.”

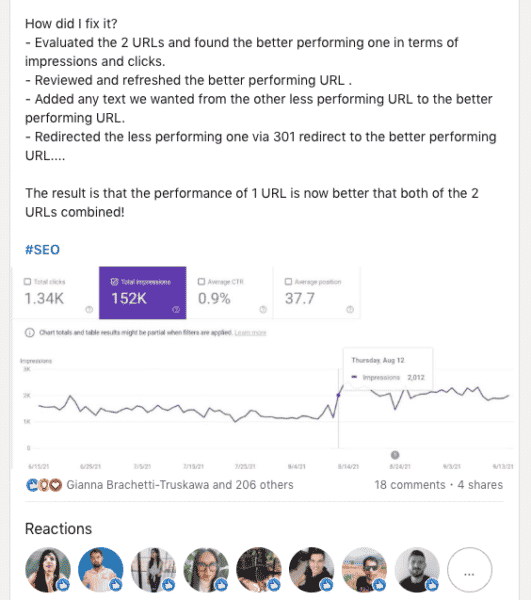

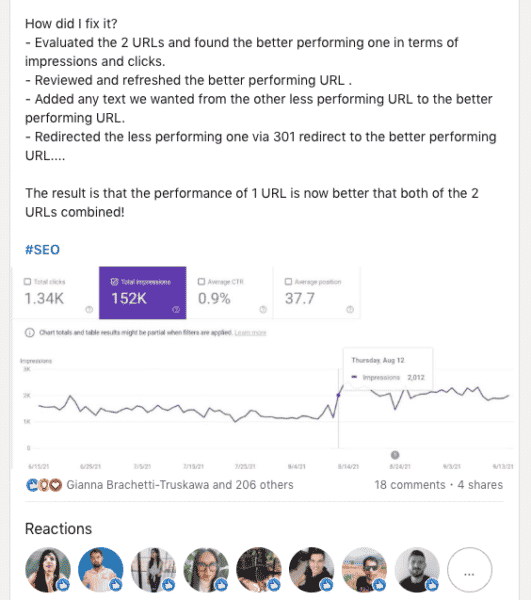

This post is one example of her doing that, when she discussed content audits.

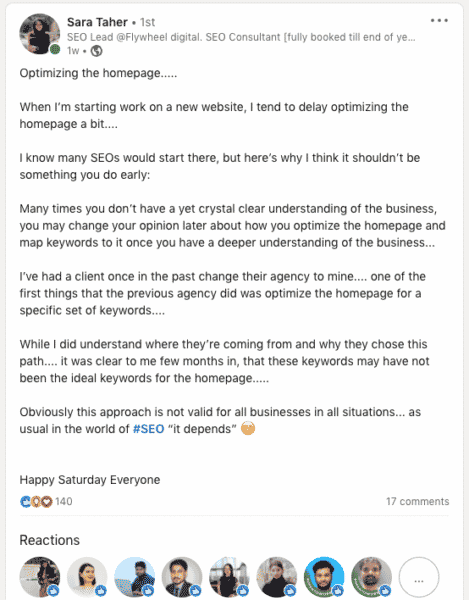

Here’s another, in which she discussed optimizing the homepage:

In addition to sharing SEO insights and tips, Taher opens up about herself.

In this post, she noted how posting something about herself made her feel “seen” as a person for the first time.

And in this post, she discussed confidence, which she admitted she struggles with.

Is there a “best time” to post on LinkedIn? I know, I know. Posting at mid-morning, midweek guarantees nothing in terms of visibility or engagement for your LinkedIn post. But I was curious if Taher had noticed any days or times that worked generally for her. Here’s what she said:

- “My audience is international with different time zones and even sometimes different weekend days (some countries take Friday and Saturday off as weekend). I just found that posting first thing in the morning my time, and generally posting on Sundays, Tuesdays and Wednesdays, would get the most engagement.”

What to avoid. Taher also has some advice on what doesn’t work:

- “Definitely trying to be like someone else. Don’t try to imitate other people’s style,” Taher said.

Taher said she learned a lot by following other SEO experts on LinkedIn and connecting with them. But you can learn from others without copying them.

Defining success. Success will depend on your goals. So how does Taher measure “success” for her LinkedIn posts?

- “Success for me is when I get engagement on a post. And when I get positive feedback from followers.”

One final bit of advice. And this is something more people in our space need to hear:

- “Posting and becoming a public figure in the SEO community is a responsibility, as people actually follow your advice. So don’t post anything you are not 100% sure of.”

The post Tips for how to post effectively on LinkedIn from SEO Sara Taher appeared first on Search Engine Land.

Monday, August 1st, 2022

MarTech recently surveyed nearly 300 marketers from brands across the U.S. to uncover their most significant challenges and strategies for overcoming them.

Join our panel to learn more about how marketers overcome their biggest challenges and the technology they adopt to drive results. Attendees will receive a copy of the report.

Register today for “What Marketers Must Know to Rise Above Economic and Buyer Uncertainty,” presented by Highspot.

The post Webinar: Marketers’ secrets to overcoming challenges appeared first on Search Engine Land.

Monday, August 1st, 2022

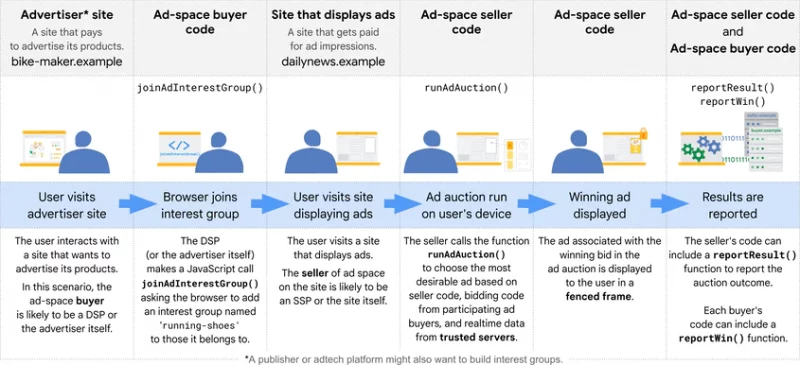

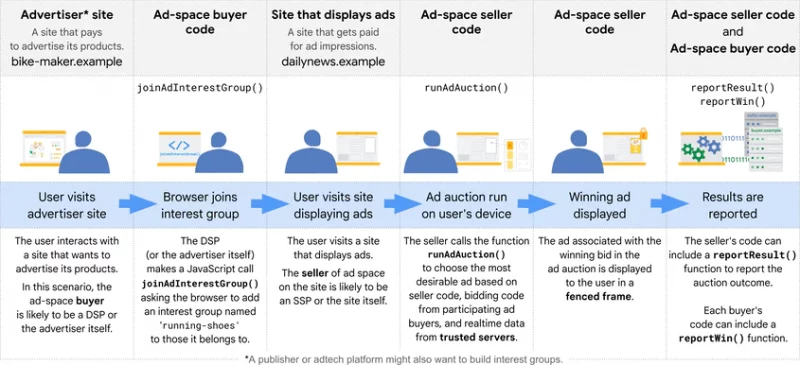

FLEDGE is a Privacy Sandbox proposal for remarketing and audiences. It’s designed so that it can’t be used by third parties to track user browsing behavior across websites. Google will begin testing the API on AdSense accounts on August 28.

What is FLEDGE. The API uses interest groups to enable sites to display ads they believe are relevant to their users. According to Google, when a user visits a website that wants to advertise its products, an interest group owner (such as a DSP working for the site) can ask the user’s browser to add membership for the interest group. The group owner (in this example, the DSP) does this by calling the JavaScript function navigator.joinAdInterestGroup(). If the call is successful, the browser records:

- The name of the interest group: for example, ‘custom-bikes’.

- The owner of the interest group: for example, ‘https://dsp.example’.

- Interest group configuration information to enable the browser to access bidding code, ad code, and real-time data, if the group’s owner is invited to bid in an online ad auction. This information can be updated later by the interest group owner.

Later, when a user visits a website that sells ad space, the seller for the website can use FLEDGE to run an ad auction to select the most appropriate ads to display to the user. Bidding is only run for the interest groups the user is a member of, and whose owners have been invited to bid.
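The two steps above, joining an interest group and then limiting bidding to invited owners, can be sketched roughly as follows. Treat this as an illustration under assumptions: `joinAdInterestGroup` is the function named by Google, but the `biddingLogicUrl` field name reflects the 2022 proposal as I recall it and may have changed, the seven-day duration is just an example, and `InterestGroupApi`, `joinCustomBikes` and `groupsAllowedToBid` are hypothetical names. The browser object is passed in as a parameter so the sketch also runs outside Chrome.

```typescript
// Minimal interest group shape: the name and owner from the article,
// plus one assumed configuration field pointing at the bidding code.
interface InterestGroup {
  owner: string;
  name: string;
  biddingLogicUrl: string; // assumed field name; verify against the current API docs
}

// The part of the browser's navigator object this sketch relies on.
interface InterestGroupApi {
  joinAdInterestGroup?: (group: InterestGroup, durationSeconds: number) => unknown;
}

const SECONDS_PER_DAY = 24 * 60 * 60;

// Asks the browser to add membership for the 'custom-bikes' group.
// Returns false when the API is unavailable (for example, outside the test).
function joinCustomBikes(nav: InterestGroupApi): boolean {
  if (typeof nav.joinAdInterestGroup !== "function") {
    return false;
  }
  const group: InterestGroup = {
    owner: "https://dsp.example",
    name: "custom-bikes",
    biddingLogicUrl: "https://dsp.example/bid.js", // placeholder URL
  };
  nav.joinAdInterestGroup(group, 7 * SECONDS_PER_DAY); // example duration
  return true;
}

// Toy version of the auction rule: only groups the user belongs to AND whose
// owners the seller invited take part in bidding.
function groupsAllowedToBid(memberships: InterestGroup[], invitedOwners: string[]): InterestGroup[] {
  return memberships.filter(function (group) {
    return invitedOwners.indexOf(group.owner) !== -1;
  });
}
```

In a browser that supports the API, the call would look like `joinCustomBikes(navigator as unknown as InterestGroupApi)`. The real auction is run by the browser itself; `groupsAllowedToBid` only illustrates the eligibility rule.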

Learn more about FLEDGE. You can learn more about the API here.

AdSense testing. Testing for FLEDGE will begin on August 28 in AdSense. Google does not predict any revenue change or performance impacts “for now.” You can turn off access to FLEDGE on your Chrome browsers by following the instructions here.

Why we care. Google announced last week that cookies will remain in Chrome until at least mid to late 2024. However, if the FLEDGE API test is successful, audiences and remarketing ads may become less specific and advertisers could have a tougher time converting. On the other hand, as users are added to interest groups like “running shoes” for example, they could possibly be served additional ads whose products are in the same category. If you’re an advertiser, learn how the FLEDGE API could categorize your product or service and how this may impact your remarketing ads.

The post Google begins testing FLEDGE API on AdSense appeared first on Search Engine Land.

If a music student has a web page about Beethoven’s Fifth Symphony and so does a world-famous orchestra conductor (such as Andre Previn), who would you expect to have the most authoritative page?
