Archive for the ‘seo news’ Category

Friday, August 12th, 2022

“What’s the difference between crawling, rendering, indexing and ranking?”

Lily Ray recently shared that she asks this question to prospective employees when hiring for the Amsive Digital SEO team. Google’s Danny Sullivan thinks it’s an excellent one.

As foundational as it may seem, it isn’t uncommon for some practitioners to confuse the basic stages of search and conflate the process entirely.

In this article, we’ll get a refresher on how search engines work and go over each stage of the process.

Why knowing the difference matters

I recently worked as an expert witness on a trademark infringement case where the opposing witness got the stages of search wrong.

Two small companies declared they each had the right to use similar brand names.

The opposition party’s “expert” erroneously concluded that my client conducted improper or hostile SEO to outrank the plaintiff’s website.

He also made several critical mistakes in describing Google’s processes in his expert report, where he asserted that:

- Indexing was web crawling.

- The search bots would instruct the search engine how to rank pages in search results.

- The search bots could also be “trained” to index pages for certain keywords.

An essential defense in litigation is to attempt to exclude a testifying expert’s findings – which can happen if one can demonstrate to the court that they lack the basic qualifications necessary to be taken seriously.

As their expert was clearly not qualified to testify on SEO matters whatsoever, I presented his erroneous descriptions of Google’s process as evidence supporting the contention that he lacked proper qualifications.

This might sound harsh, but this unqualified expert made many elementary and apparent mistakes in presenting information to the court. He falsely presented my client as somehow conducting unfair trade practices via SEO, while ignoring questionable behavior on the part of the plaintiff (who was blatantly using black hat SEO, whereas my client was not).

The opposing expert in my legal case is not alone in this misapprehension of the stages of search used by the leading search engines.

There are prominent search marketers who have likewise conflated the stages of search engine processes leading to incorrect diagnoses of underperformance in the SERPs.

I have heard some state, “I think Google has penalized us, so we can’t be in search results!” – when in fact they had missed a key setting on their web servers that made their site content inaccessible to Google.

Automated penalizations might have been categorized as part of the ranking stage. In reality, these websites had issues in the crawling and rendering stages that made indexing and ranking problematic.

When there are no notifications in the Google Search Console of a manual action, one should first focus on common issues in each of the four stages that determine how search works.

It’s not just semantics

Not everyone agreed with Ray and Sullivan’s emphasis on the importance of understanding the differences between crawling, rendering, indexing and ranking.

I noticed some practitioners consider such concerns to be mere semantics or unnecessary “gatekeeping” by elitist SEOs.

To a degree, some SEO veterans may indeed have very loosely conflated the meanings of these terms. This can happen in all disciplines when those steeped in the knowledge are bandying jargon around with a shared understanding of what they are referring to. There is nothing inherently wrong with that.

We also tend to anthropomorphize search engines and their processes because interpreting things by describing them as having familiar characteristics makes comprehension easier. There is nothing wrong with that either.

But, this imprecision when talking about technical processes can be confusing and makes it more challenging for those trying to learn about the discipline of SEO.

One can use the terms casually and imprecisely only to a degree or as shorthand in conversation. That said, it is always best to know and understand the precise definitions of the stages of search engine technology.

The 4 stages of search

Many different processes are involved in bringing the web’s content into your search results. In some ways, it can be a gross oversimplification to say there are only a handful of discrete stages to make it happen.

Each of the four stages I cover here has several subprocesses that can occur within them.

Even beyond that, there are significant processes that can be asynchronous to these, such as:

- Types of spam policing.

- Incorporation of elements into the Knowledge Graph and updating of knowledge panels with the information.

- Processing of optical character recognition in images.

- Audio-to-text processing in audio and video files.

- Assessing and application of PageSpeed data.

- And more.

What follows are the primary stages of search required for getting webpages to appear in the search results.

Crawling

Crawling occurs when a search engine requests webpages from websites’ servers.

Imagine that Google and Microsoft Bing are sitting at a computer, typing in or clicking on a link to a webpage in their browser window.

Thus, the search engines’ machines visit webpages similar to how you do. Each time the search engine visits a webpage, it collects a copy of that page and notes all the links found on that page. After the search engine collects that webpage, it will visit the next link in its list of links yet to be visited.

This is referred to as “crawling” or “spidering” which is apt since the web is metaphorically a giant, virtual web of interconnected links.

The data-gathering programs used by search engines are called “spiders,” “bots” or “crawlers.”
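The fetch-and-follow loop described above can be sketched in a few lines. This is a minimal illustration, not how Googlebot or Bingbot is implemented: the three-page `FAKE_WEB` dictionary, the `LinkExtractor` class and the `crawl` function are all hypothetical names, and the "fetch" is a dictionary lookup instead of a real HTTP request.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


# Hypothetical three-page site standing in for real HTTP responses.
FAKE_WEB = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/about">About</a> <a href="/missing">Gone</a>',
}


def crawl(seed_url, fetch=FAKE_WEB.get):
    """Visit pages breadth-first and return {url: html} for each page fetched."""
    frontier = deque([seed_url])  # links yet to be visited
    seen = {seed_url}             # links already queued, to avoid repeats
    copies = {}                   # stored copy of each fetched page
    while frontier:
        url = frontier.popleft()
        html = fetch(url)
        if html is None:          # page could not be fetched
            continue
        copies[url] = html
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return copies


pages = crawl("https://example.com/")
print(sorted(pages))
```

The `seen` set is what keeps the loop from revisiting the same link forever on a site whose pages link back to each other.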

Google’s primary crawling program is “Googlebot,” while Microsoft Bing has “Bingbot.” Each has other specialized bots for visiting ads (e.g., AdsBot-Google and AdIdxBot), mobile pages and more.

This stage of the search engines’ processing of webpages seems straightforward, but there is a lot of complexity in what goes on, just in this stage alone.

Think about how many web server systems there can be, running different operating systems of different versions, along with varying content management systems (e.g., WordPress, Wix, Squarespace), and then each website’s unique customizations.

Many issues can keep search engines’ crawlers from crawling pages, which is an excellent reason to study the details involved in this stage.

First, the search engine must find a link to the page at some point before it can request the page and visit it. (Under certain configurations, the search engines have been known to suspect there could be other, undisclosed links, such as one step up in the link hierarchy at a subdirectory level or via some limited website internal search forms.)

Search engines can discover webpages’ links through the following methods:

- When a website operator submits the link directly or discloses a sitemap to the search engine.

- When other websites link to the page.

- Through links to the page from within its own website, assuming the website already has some pages indexed.

- Social media posts.

- Links found in documents.

- URLs found in written text and not hyperlinked.

- Via the metadata of various kinds of files.

- And more.

In some instances, a website will instruct the search engines not to crawl one or more webpages through its robots.txt file, which is located at the base level of the domain and web server.

Robots.txt files can contain multiple directives within them, instructing search engines that the website disallows crawling of specific pages, subdirectories or the entire website.

Instructing search engines not to crawl a page or section of a website does not mean that those pages cannot appear in search results. However, keeping them from being crawled in this way can severely impact their ability to rank well for their keywords.
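As a rough illustration of how a well-behaved crawler might consult these directives, the sketch below uses Python’s standard `urllib.robotparser` module. The robots.txt content and the paths in it are made up for the example, and `allowed` is a hypothetical helper. Note that the check only answers “may this URL be crawled?”; it says nothing about whether the URL can still show up in search results.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are invented for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /cart
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse rules without a network request


def allowed(user_agent, url):
    """Return True if the parsed rules permit this user agent to crawl the URL."""
    return parser.can_fetch(user_agent, url)


print(allowed("Googlebot", "https://example.com/products/widget"))
print(allowed("Googlebot", "https://example.com/private/report.html"))
```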

In yet other cases, search engines can struggle to crawl a website if the site automatically blocks the bots. This can happen when the website’s systems have detected that:

- The bot is requesting more pages within a time period than a human could.

- The bot requests multiple pages simultaneously.

- A bot’s server IP address is geolocated within a zone that the website has been configured to exclude.

- The bot’s requests and/or other users’ requests for pages overwhelm the server’s resources, causing the serving of pages to slow down or error out.

However, search engine bots are programmed to automatically change delay rates between requests when they detect that the server is struggling to keep up with demand.
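One way to picture this kind of self-throttling is a simple back-off rule. The sketch below is purely an assumption about how such logic could look: the function name `next_delay`, the status codes treated as overload signals and every threshold are illustrative, not values published by any search engine.

```python
def next_delay(current_delay, status_code, response_seconds,
               min_delay=1.0, max_delay=60.0, slow_threshold=2.0):
    """Return how many seconds to wait before the next request.

    All thresholds here are illustrative assumptions.
    """
    overloaded = (
        status_code in (429, 500, 503)
        or response_seconds > slow_threshold
    )
    if overloaded:
        # Server appears to be struggling: double the wait, up to a ceiling.
        return min(current_delay * 2, max_delay)
    # Server looks healthy: ease back toward the minimum delay.
    return max(current_delay * 0.75, min_delay)


print(next_delay(4.0, 503, 0.3))  # error response, so the delay grows
print(next_delay(4.0, 200, 0.3))  # fast, successful response, so it shrinks
```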

For larger websites and websites with frequently changing content on their pages, “crawl budget” can become a factor in whether search bots will get around to crawling all of the pages.

Essentially, the web is something of an infinite space of webpages with varying update frequency. The search engines might not get around to visiting every single page out there, so they prioritize the pages they will crawl.

Websites with huge numbers of pages, or that respond slowly, might use up their available crawl budget before all of their pages are crawled if they have relatively lower ranking weight compared with other websites.

It is useful to mention that search engines also request all the files that go into composing the webpage as well, such as images, CSS and JavaScript.

Just as with the webpage itself, if the additional resources that contribute to composing the webpage are inaccessible to the search engine, it can affect how the search engine interprets the webpage.

Rendering

When the search engine crawls a webpage, it will then “render” the page. This involves taking the HTML, JavaScript and cascading stylesheet (CSS) information to generate how the page will appear to desktop and/or mobile users.

This is important in order for the search engine to be able to understand how the webpage content is displayed in context. Processing the JavaScript helps ensure the search engine has all the content that a human user would see when visiting the page.

The search engines categorize the rendering step as a subprocess within the crawling stage. I listed it here as a separate step in the process because fetching a webpage and then parsing the content in order to understand how it would appear composed in a browser are two distinct processes.

Google renders pages with an up-to-date (“evergreen”) version of Chromium, the open-source browser project that the Google Chrome browser is also built on.

Bingbot uses Microsoft Edge as its engine to run JavaScript and render webpages. Edge is also now built upon Chromium, so Bingbot renders webpages in much the same way that Googlebot does.

Google stores copies of the pages in their repository in a compressed format. It seems likely that Microsoft Bing does so as well (but I have not found documentation confirming this). Some search engines may store a shorthand version of webpages in terms of just the visible text, stripped of all the formatting.

Rendering mostly becomes an issue in SEO for pages that have key portions of content dependent upon JavaScript/AJAX.

Both Google and Microsoft Bing will execute JavaScript in order to see all the content on the page, but more complex JavaScript constructs can be challenging for the search engines to execute.

I have seen JavaScript-constructed webpages that were essentially invisible to the search engines, resulting in severely nonoptimal webpages that would not be able to rank for their search terms.

I have also seen instances where infinite-scrolling category pages on ecommerce websites did not perform well on search engines because the search engine could not see as many of the products’ links.

Other conditions can also interfere with rendering. For instance, when one or more JavaScript or CSS files are inaccessible to the search engine bots because they sit in subdirectories disallowed by robots.txt, it will be impossible to fully process the page.

Googlebot and Bingbot largely will not index pages that require cookies. Pages that conditionally deliver some key elements based on cookies might also not get rendered fully or properly.

Indexing

Once a page has been crawled and rendered, the search engines further process the page to determine if it will be stored in the index or not, and to understand what the page is about.

The search engine index is functionally similar to an index of words found at the end of a book.

A book’s index will list all the important words and topics found in the book, listing each word alphabetically, along with a list of the page numbers where the words/topics will be found.

A search engine index contains many keywords and keyword sequences, associated with a list of all the webpages where the keywords are found.

The index bears some conceptual resemblance to a database lookup table, which may have originally been the structure used for search engines. But the major search engines likely now use something a couple of generations more sophisticated to accomplish the purpose of looking up a keyword and returning all the URLs relevant to the word.

The use of functionality to look up all pages associated with a keyword is a time-saving architecture, as it would require unworkable amounts of time to search all webpages for a keyword in real time, each time someone searches for it.
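The book-index analogy maps onto a data structure usually called an inverted index. The sketch below is a toy version under stated assumptions: the three pages in `PAGES` are invented, `tokenize`, `build_index` and `lookup` are hypothetical helper names, and a production search index stores far more than a set of URLs per word.

```python
import re
from collections import defaultdict

# Hypothetical pages; real indexes store far richer data per entry.
PAGES = {
    "https://example.com/tea": "Green tea and black tea brewing guide",
    "https://example.com/coffee": "Coffee brewing guide for beginners",
    "https://example.com/mugs": "Ceramic mugs for tea and coffee",
}


def tokenize(text):
    """Split text into lowercase words."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map each word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def lookup(index, query):
    """Return the URLs that contain every word in the query."""
    words = tokenize(query)
    if not words:
        return set()
    results = set(index.get(words[0], set()))
    for word in words[1:]:
        results &= index.get(word, set())
    return results


index = build_index(PAGES)
print(sorted(lookup(index, "brewing guide")))
```

Building the index is done once, ahead of time; each search is then a handful of dictionary lookups and set intersections instead of a scan of every page.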

Not all crawled pages will be kept in the search index, for various reasons. For instance, if a page includes a robots meta tag with a “noindex” directive, it instructs the search engine to not include the page in the index.

Similarly, a webpage may include an X-Robots-Tag in its HTTP header that instructs the search engines not to index the page.
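When diagnosing why a page is missing from the index, both of these signals can be checked programmatically. The following is a simplified sketch: `RobotsMetaParser` and `is_noindex` are hypothetical names, the headers are passed in as a plain dictionary, and the check deliberately ignores bot-specific variants (such as a meta tag named after a single crawler) and other directives.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() == "robots":
            for part in attributes.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def is_noindex(html, headers):
    """Return True if the HTML or the HTTP headers carry a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":  # header names are case-insensitive
            header_value = value
    header_directives = {part.strip().lower() for part in header_value.split(",")}
    return "noindex" in parser.directives or "noindex" in header_directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_noindex(page, {"Content-Type": "text/html"}))
```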

In yet other instances, a webpage’s canonical tag may instruct a search engine that a different page from the present one is to be considered the main version of the page, resulting in other, non-canonical versions of the page to be dropped from the index.

Google has also stated that webpages may not be kept in the index if they are of low quality (duplicate content pages, thin content pages, and pages containing all or too much irrelevant content).

There has also been a long history that suggests that websites with insufficient collective PageRank may not have all of their webpages indexed – suggesting that larger websites with insufficient external links may not get indexed thoroughly.

Insufficient crawl budget may also result in a website not having all of its pages indexed.

A major component of SEO is diagnosing and correcting when pages do not get indexed. Because of this, it is a good idea to thoroughly study all the various issues that can impair the indexing of webpages.

Ranking

Ranking of webpages is the stage of search engine processing that is probably the most focused upon.

Once a search engine has a list of all the webpages associated with a particular keyword or keyword phrase, it then must determine how it will order those pages when a search is conducted for the keyword.

If you work in the SEO industry, you likely will already be pretty familiar with some of what the ranking process involves. The search engine’s ranking process is also referred to as an “algorithm”.

The complexity involved with the ranking stage of search is so huge that it alone merits multiple articles and books to describe.

There are a great many criteria that can affect a webpage’s rank in the search results. Google has said there are more than 200 ranking factors used by its algorithm.

Within many of those factors, there can also be up to 50 “vectors” – things that can influence a single ranking signal’s impact on rankings.

PageRank is Google’s earliest version of its ranking algorithm invented in 1996. It was built off a concept that links to a webpage – and the relative importance of the sources of the links pointing to that webpage – could be calculated to determine the page’s ranking strength relative to all other pages.

A metaphor for this is that links are somewhat treated as votes, and pages with the most votes will win out in ranking higher than other pages with fewer links/votes.

Fast forward to 2022 and a lot of the old PageRank algorithm’s DNA is still embedded in Google’s ranking algorithm. That link analysis algorithm also influenced many other search engines that developed similar types of methods.

The old Google algorithm method had to process over the links of the web iteratively, passing the PageRank value around among pages dozens of times before the ranking process was complete. This iterative calculation sequence across many millions of pages could take nearly a month to complete.
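The iterative passing-around of value can be illustrated with the textbook formulation of PageRank. This is a sketch of the published idea only, not Google’s production system: the four-page `LINKS` graph is invented, the damping value of 0.85 is the commonly cited default, and the function assumes every linked-to page also appears as a key in the graph.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Textbook PageRank; links maps each page to the pages it links to.

    Assumes every link target also appears as a key in links.
    """
    pages = list(links)
    n = len(pages)
    rank = {page: 1.0 / n for page in pages}  # start with equal rank
    for _ in range(iterations):
        # Every page receives a small baseline share on each pass.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page, outlinks in links.items():
            if outlinks:
                # Split this page's rank evenly among the pages it links to.
                share = damping * rank[page] / len(outlinks)
                for target in outlinks:
                    new_rank[target] += share
            else:
                # A page with no outbound links spreads its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank


# Hypothetical link graph: "c" is linked to by three pages, "d" by none.
LINKS = {
    "a": ["b", "c"],
    "b": ["c"],
    "c": ["a"],
    "d": ["c"],
}

ranks = pagerank(LINKS)
for page in sorted(ranks, key=ranks.get, reverse=True):
    print(page, round(ranks[page], 3))
```

In this toy graph the most linked-to page ends up with the highest score and the page with no inbound links ends up with the lowest, which is the “links as votes” intuition in miniature.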

Nowadays, new page links are introduced every day, and Google calculates rankings in a sort of drip method – allowing for pages and changes to be factored in much more rapidly without necessitating a month-long link calculation process.

Additionally, links are assessed in a sophisticated manner – revoking or reducing the ranking power of paid links, traded links, spammed links, non-editorially endorsed links and more.

Broad categories of factors beyond links influence the rankings as well, including:

Conclusion

Understanding the key stages of search is a table-stakes item for becoming a professional in the SEO industry.

Some personalities in social media think that not hiring a candidate just because they don’t know the differences between crawling, rendering, indexing and ranking is “going too far” or “gate-keeping”.

It’s a good idea to know the distinctions between these processes. However, I would not consider having a blurry understanding of such terms to be a deal-breaker.

SEO professionals come from a variety of backgrounds and experience levels. What’s important is that they are trainable enough to learn and reach a foundational level of understanding.

The post The 4 stages of search all SEOs need to know appeared first on Search Engine Land.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Wednesday, August 10th, 2022

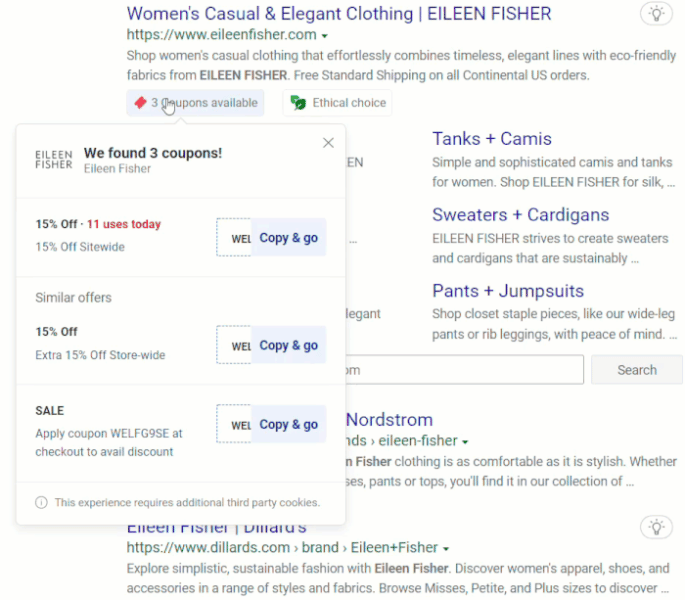

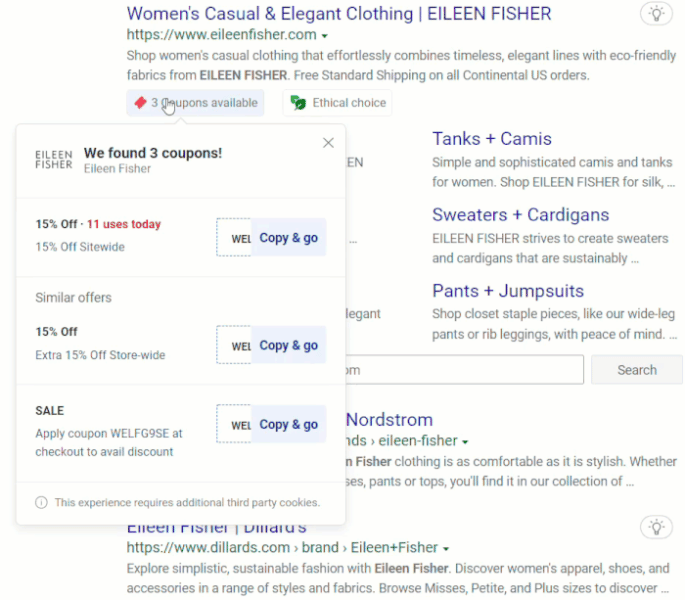

Microsoft Bing has recently added three annotations to its search results pages that show price history, available coupons and ethical choice ratings.

Price history. Bing displays a graph showing whether a product price has increased, decreased or is stable. It highlights the high, median and low prices. Here’s what it looks like:

Microsoft Bing’s Price history annotation


This annotation is available in the U.S., Canada, France, Germany, Great Britain, Australia and India.

Available coupons. Bing shows searchers directly in the search results when coupons are available for a website. The coupon will automatically be copied and applied to the purchase for shoppers without needing to do a separate search for a code. Here’s what it looks like:

Microsoft Bing’s Coupons available annotation


This annotation is now available in the U.S., UK, Canada, Australia, Germany and France.

Ethical choice. This annotation indicates whether a brand sells eco-friendly, upcycled or fair trade fashion. Microsoft Bing recently expanded its Ethical Shopping Hub, which assigns a rating (powered by Good on You) based on a brand’s impact on people, the planet and animals.

Official announcement. You can read more on Microsoft Bing’s announcement: Shopping Searches are Now Smarter on Microsoft Bing.

Why we care. What’s good for searchers can also be good for websites. All of these annotations seem designed to help reduce friction in the shopping process and could lead to additional sales for brands and businesses.

The post Microsoft Bing adds 3 new shopping annotations appeared first on Search Engine Land.


Wednesday, August 10th, 2022

Google has announced several new enhancements to Google Search today that focus on improving the overall quality of the search results, while at the same time helping searchers evaluate the search quality of the results presented to them.

Google has made improvements to featured snippets, its content advisories and the About This Result feature.

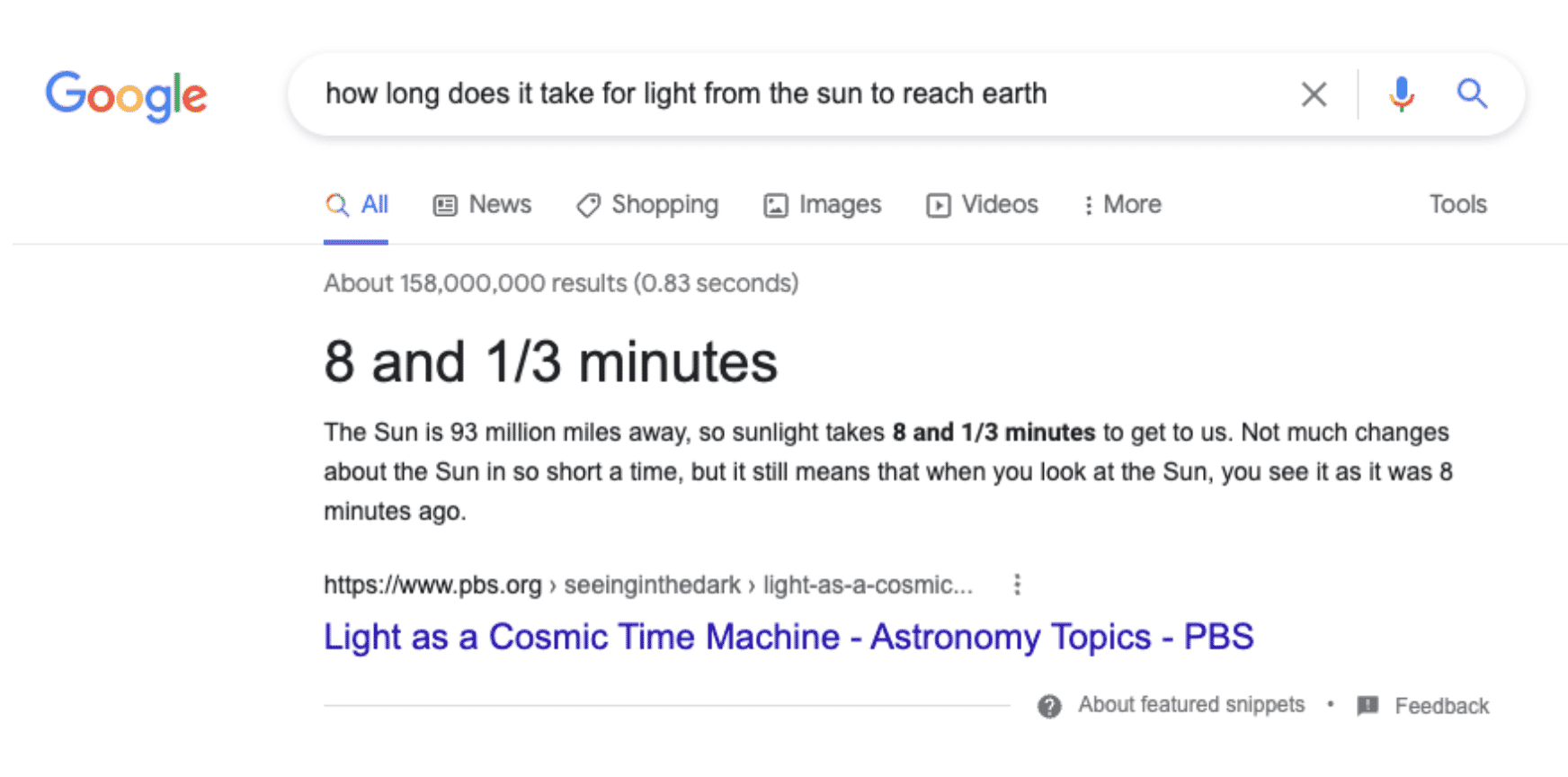

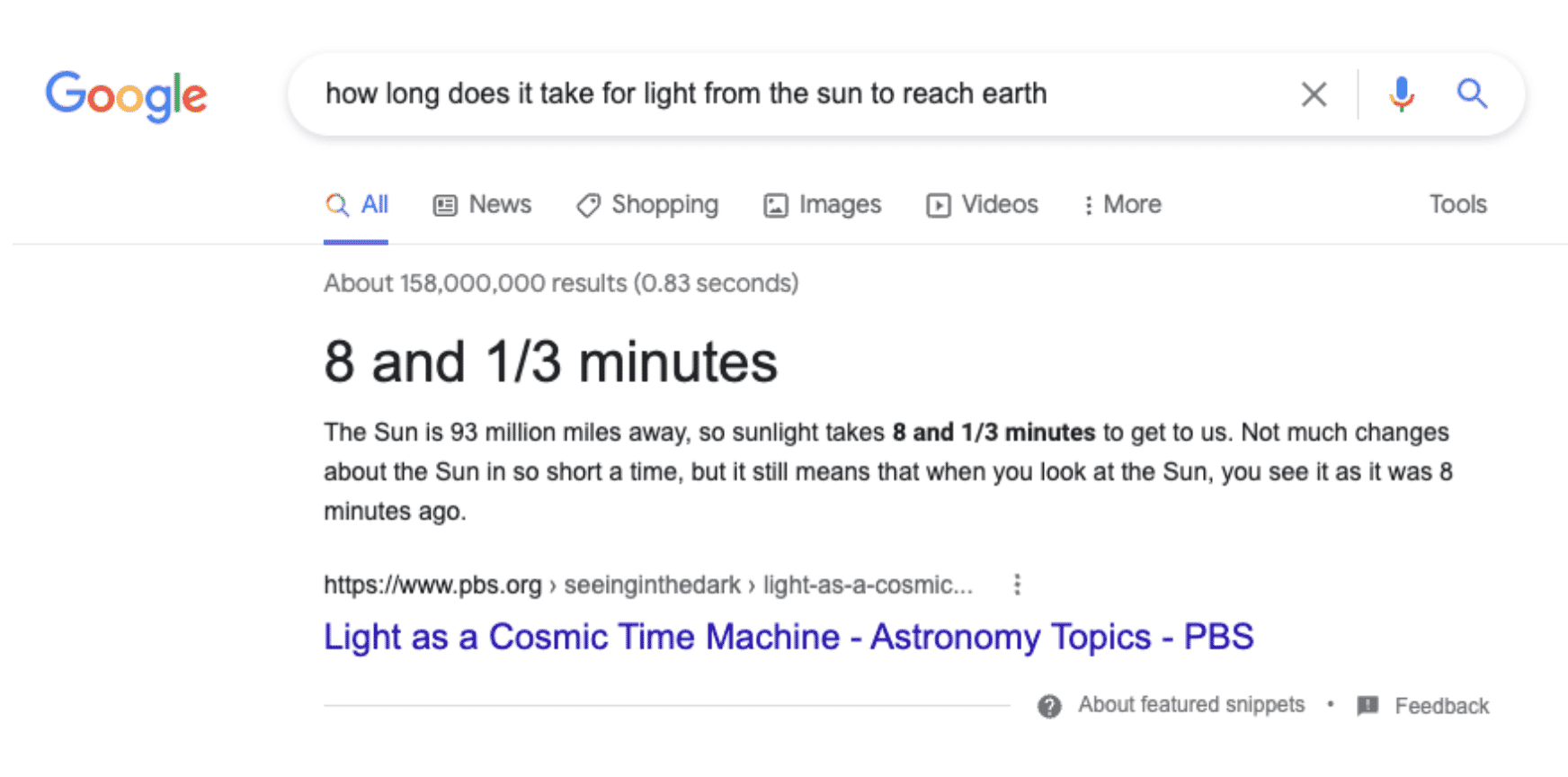

Featured snippets callouts now use MUM and general consensus

Featured snippets in Google Search will now use MUM to help understand if there is a general consensus for the information Google shows as callouts in these featured snippets. Google said that its “systems can now understand the notion of consensus” by using MUM, Multitask Unified Model.

To date, MUM has been used in only a few applications within Google Search: COVID vaccine names, Google Lens features, some other applications and now featured snippets.

Now, with the help of MUM, Google can understand if there is a consensus across the web to help highlight the callout portions of the featured snippets. Consensus-based techniques, according to Pandu Nayak, Vice President of Search and Fellow, Google, have meaningfully improved the quality of the featured snippet callouts. It is important to note that this does not mean featured snippets will always show facts, but it does help improve the overall quality of featured snippet callouts.

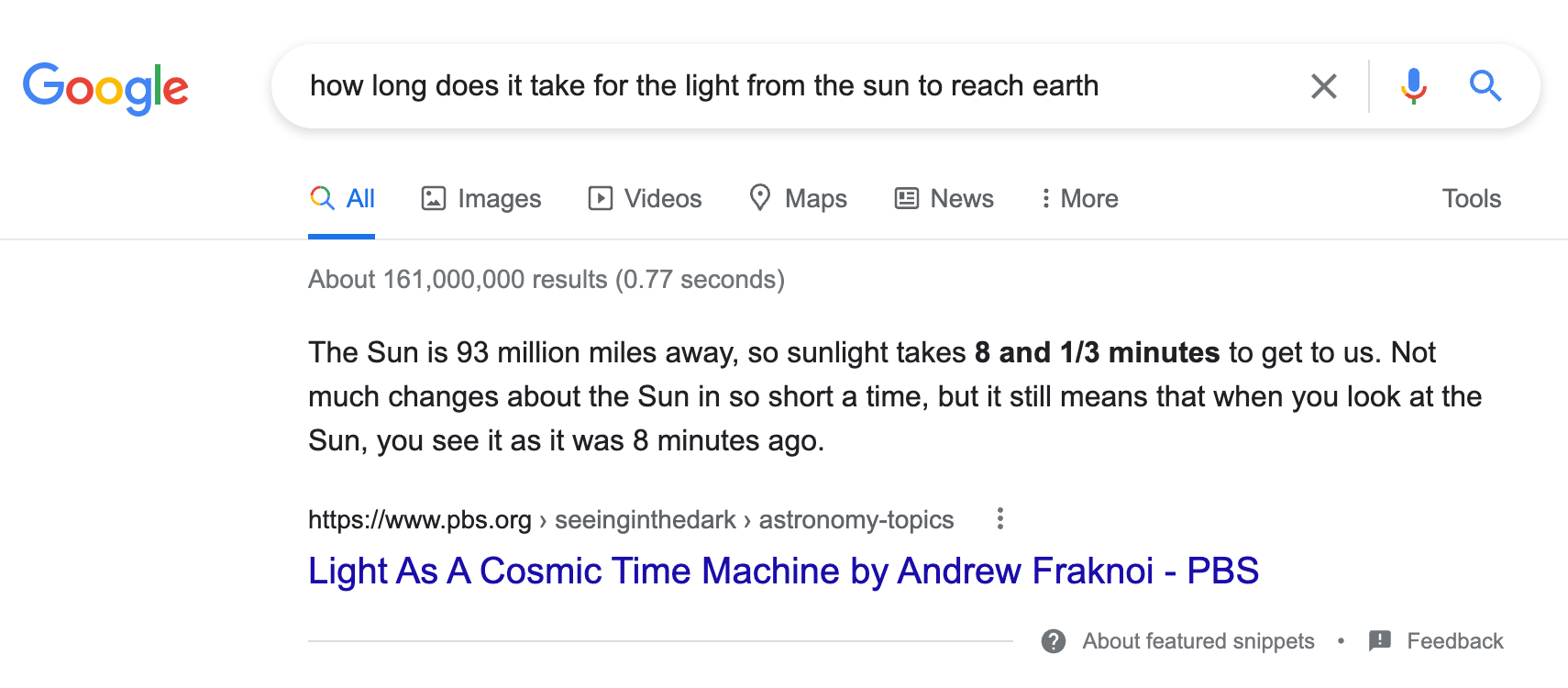

Here is an example where Google’s featured snippet callouts are improved. In the screenshot below, Google now highlights the callout (the word or words shown in a larger font above the featured snippet) to provide a better answer for the searcher.

Here is what this looks like without this feature:

Can consensus be spammed? Pandu Nayak explained these featured snippets are generally taken from the top-ranked results, so he is hopeful that those top-ranked results in Google Search are not spammy. It is important to note that consensus is not being used as a ranking factor but rather being used for callouts for featured snippets.

False premise queries reduced by 40%

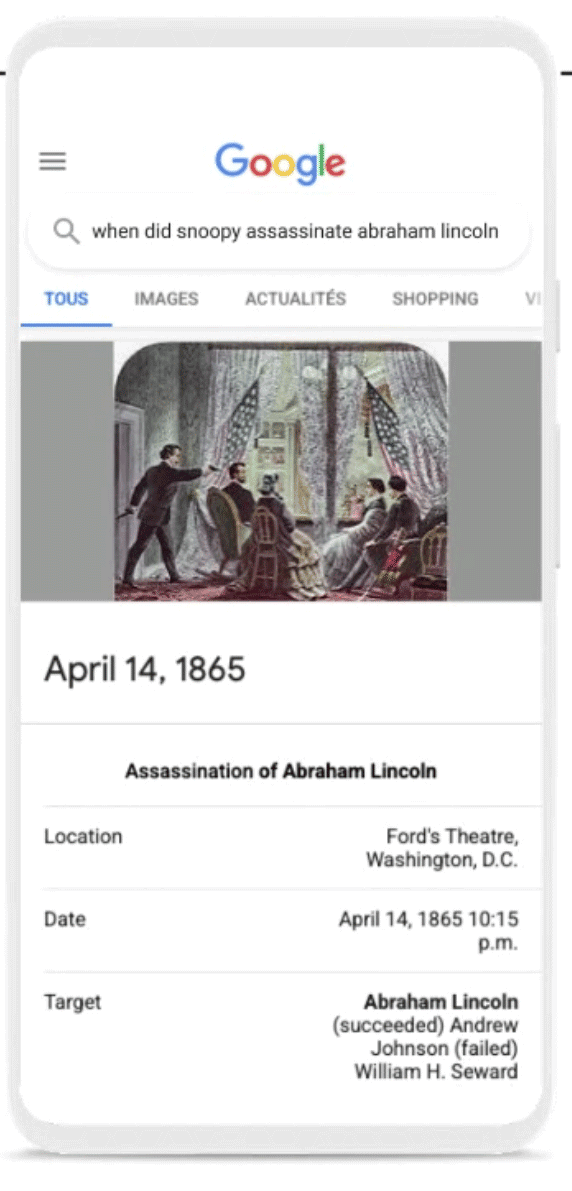

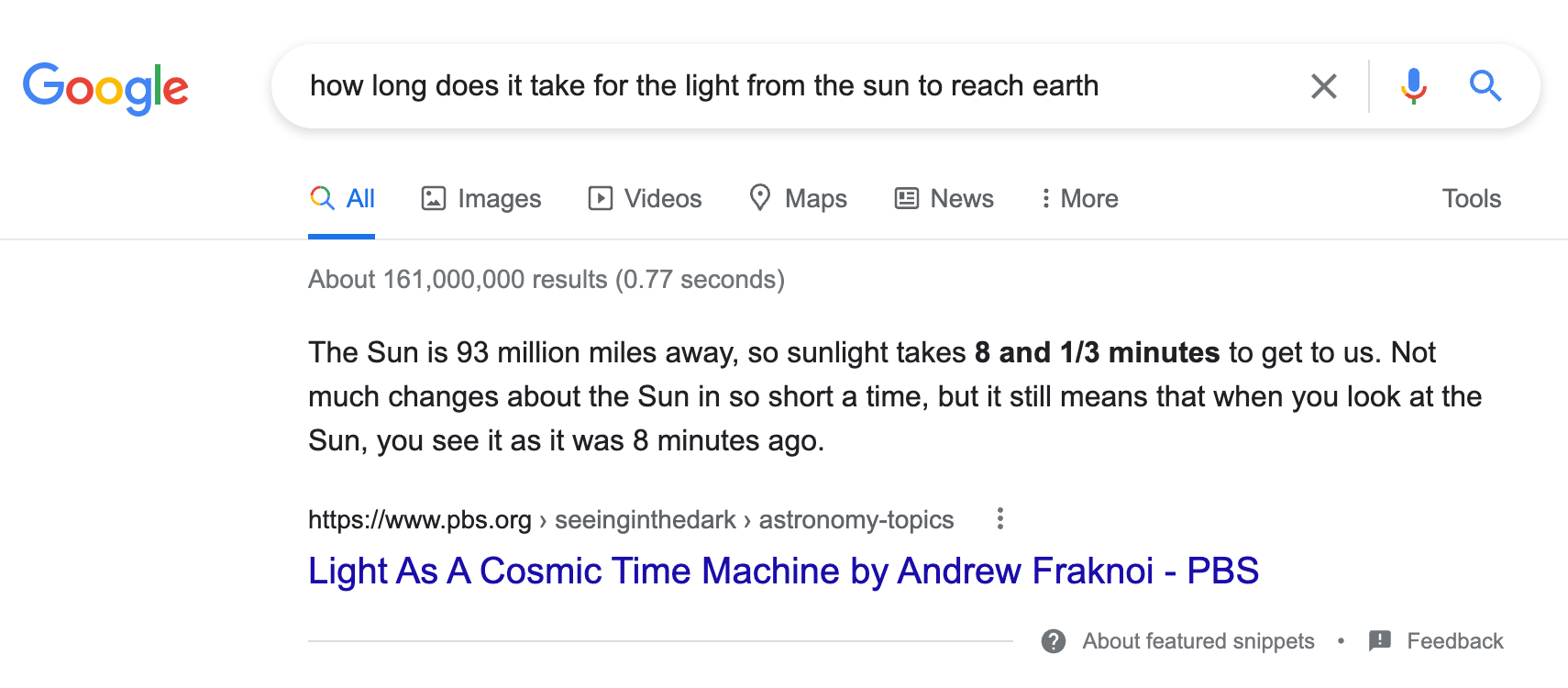

Another advancement with featured snippets is around what Google calls “false premise” queries: queries that may be inaccurate or factually incorrect but are nevertheless used by some searchers in Google Search. Google said it has improved which featured snippets it shows for queries that contain information about things that did not happen.

Google will now show you information that is accurate and remove the false part. Google said it will show fewer featured snippets that may show false or inaccurate information. Google said it reduced these occurrences for triggering featured snippets by about 40% in Google Search.

An example Google provided was for a search on [when did snoopy assassinate Abraham Lincoln]. Now, instead of showing information about Snoopy, who obviously did not assassinate Abraham Lincoln, Google will ignore the Snoopy part and show you the consensus on the web around this answer.

Pandu Nayak added that this also helps with the People Also Ask results, since those are powered by featured snippets.

Here is how it might look:

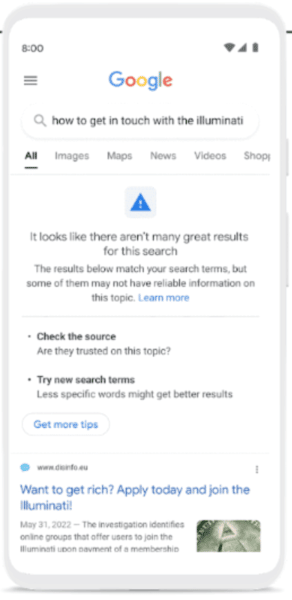

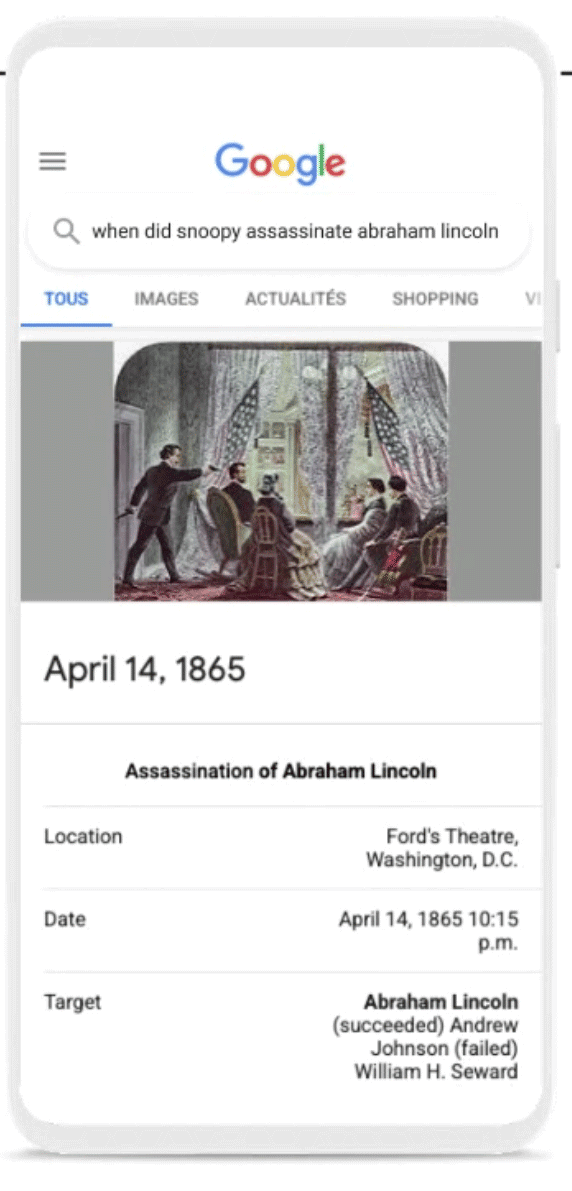

Content advisories expanded to low confidence results

In April 2020, Google launched content advisories in Google Search with the aim of communicating to searchers that the search results are not 100% reliable either because they are new or Google does not have enough information about the topic yet.

Google said it is now expanding content advisories to searches where its systems don’t have high confidence in the overall quality of the results available for the search. Google said this “does not mean that no helpful information is available, or that a particular result is low-quality.” These notices provide context about the whole set of results on the page, and you can always see the results for your query, even when the advisory is present.

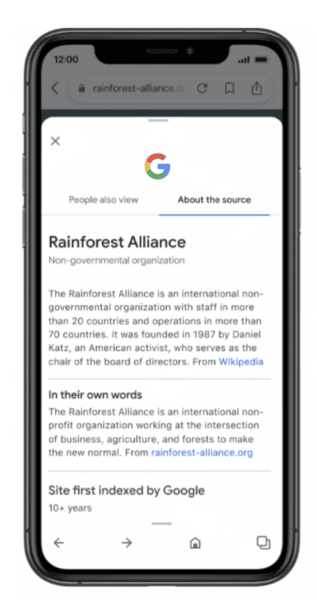

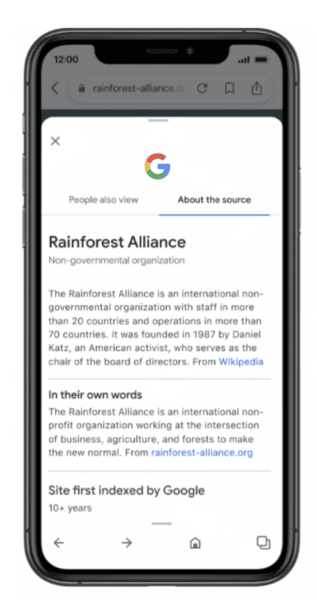

About This Result expanding as well

In February 2021, Google launched the About This Result feature to give searchers, before they click on a result, more information about the search result snippet they are looking at. Google has expanded the feature in terms of showing more details in more areas, as well as why the result is ranking for the query. Google said this feature has been used over 2.4 billion times since it launched.

Later this year, Google is expanding the feature to Portuguese (PT), French (FR), Italian (IT), German (DE), Dutch (NL), Spanish (ES), Japanese (JP) and Indonesian (ID). Google also added About This Result to the Google app.

Google is also expanding what information is shown in About This Result, including how widely a source is circulated, online reviews about a source or company, whether a company is owned by another entity, or even when Google Search can’t find much information about a source.

The post Google steps up featured snippets with MUM; reducing false premise results by 40% appeared first on Search Engine Land.


Wednesday, August 10th, 2022

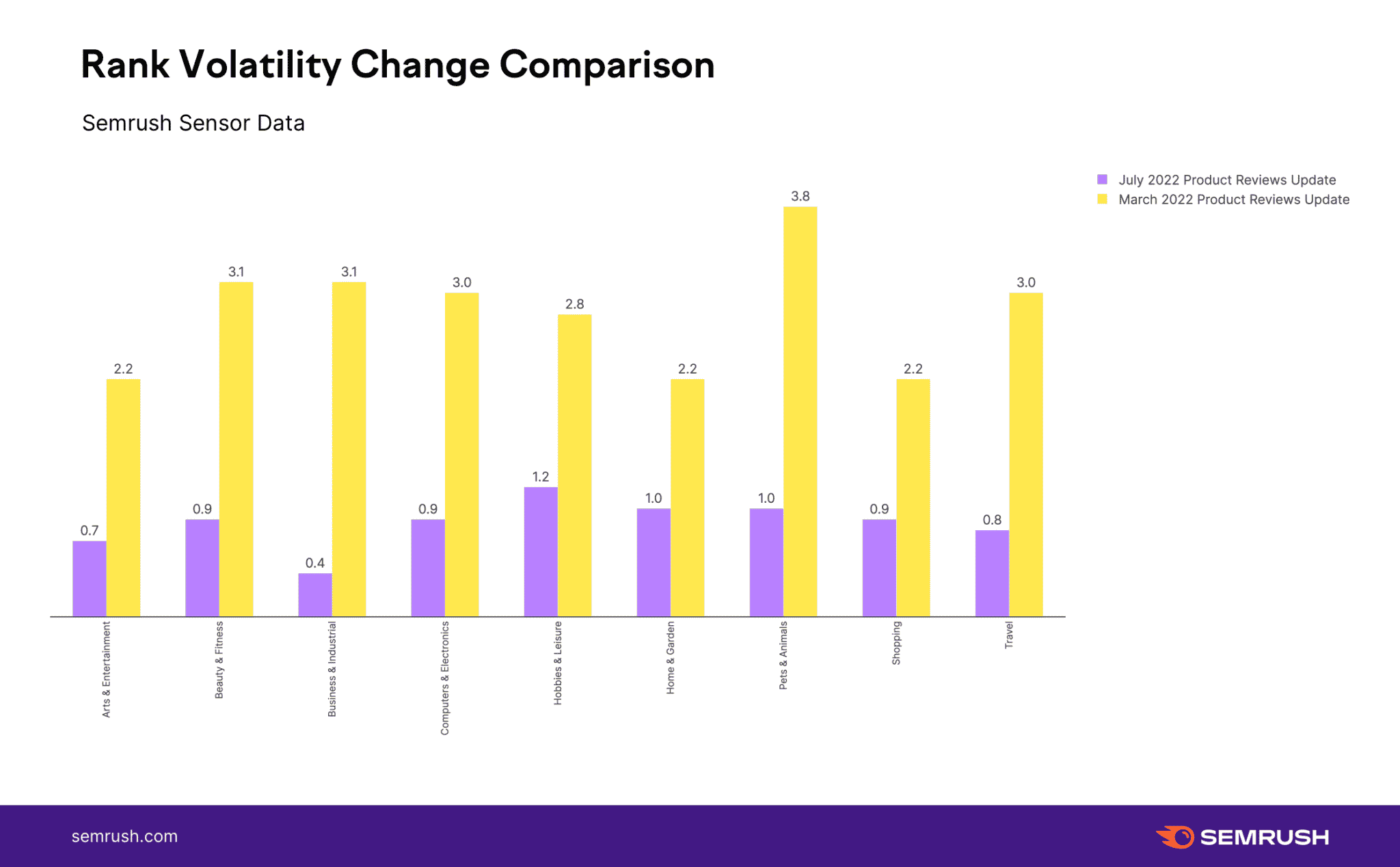

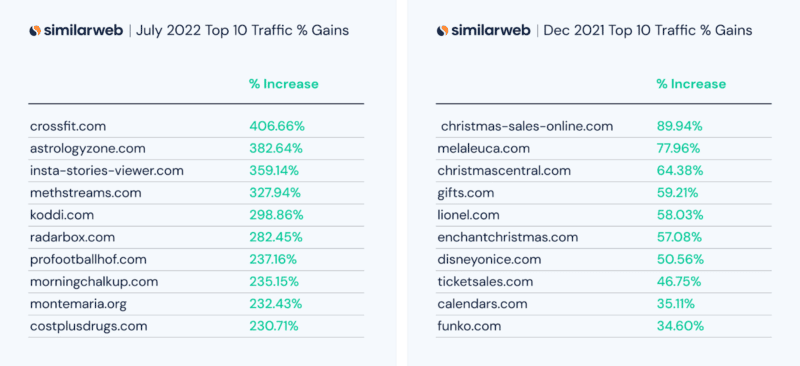

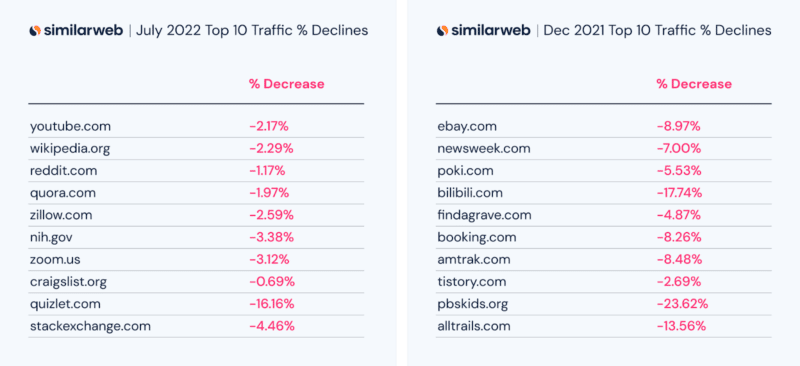

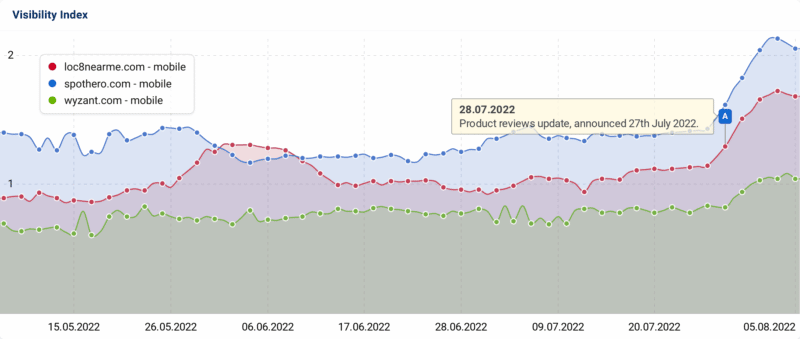

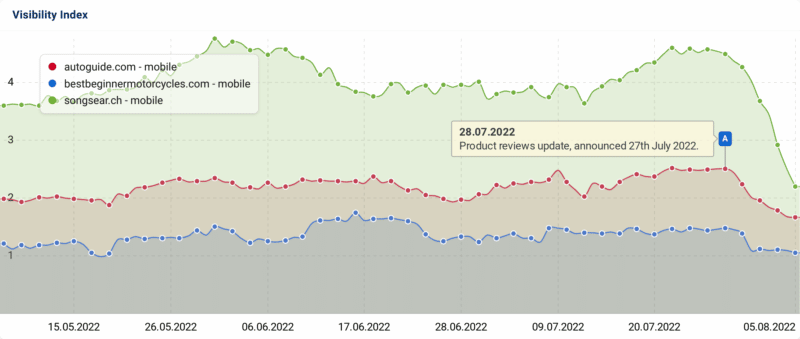

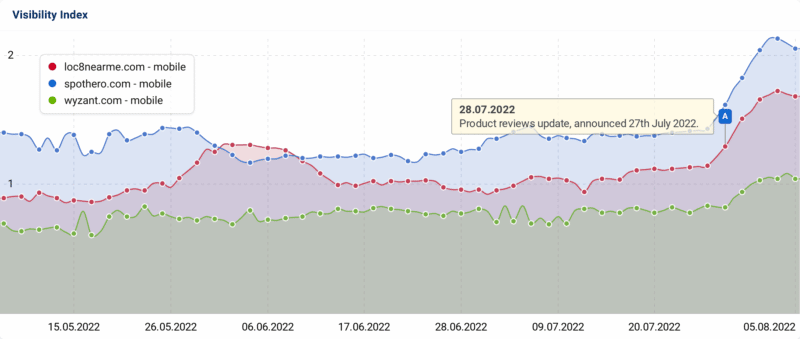

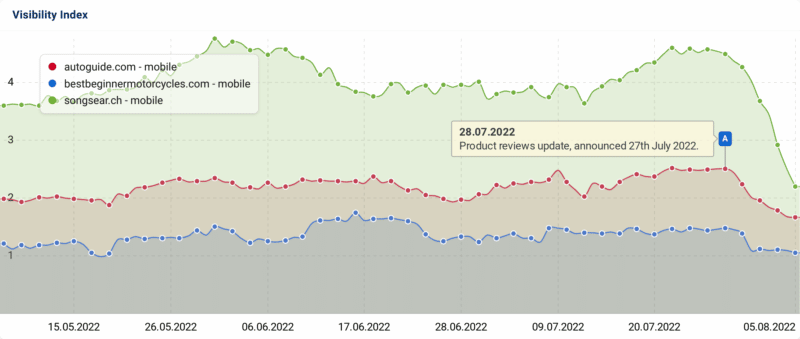

On July 27th, Google released its fourth product reviews update, named the July 2022 product reviews update. That update took a short and sweet six days to roll out and was completed on August 2nd. In short, this July update was almost nonexistent according to most of the data providers we asked; in aggregate, there were very few signs of any real Google update during that six-day period.

Let me add the caveat that if a site was hit by this update, it 100% felt like a major update, where the site can see traffic changes upwards of 80%. Plenty of sites were hit hard and if your site was one of them, this story is not to diminish that in any way. This story just says that it was not as widespread as previous product review updates or core updates. But in the aggregate, it seemed this update was pretty minor across Google’s search results, as a whole.

Data providers show the July update was minor

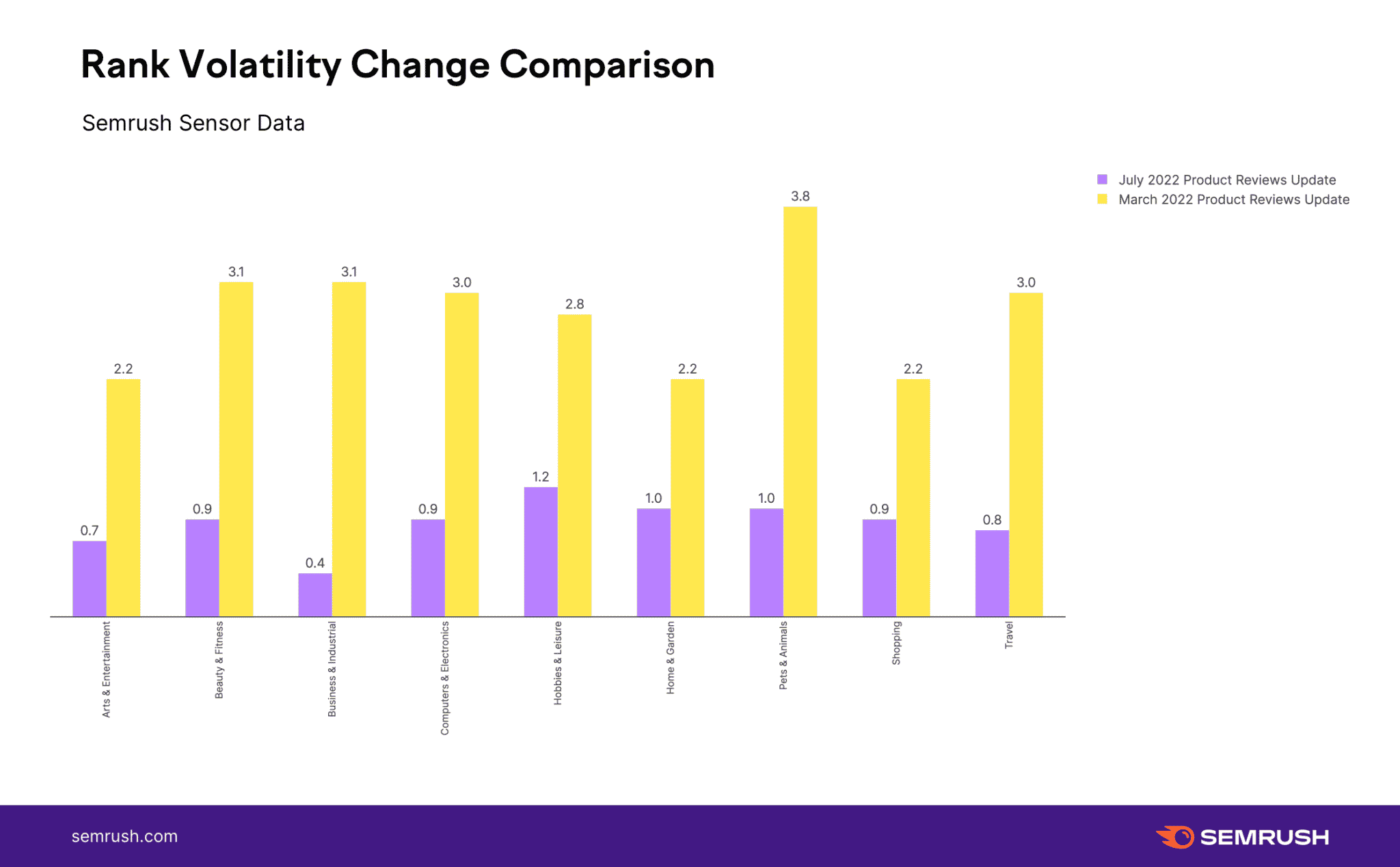

Semrush. Semrush data showed that the July product reviews update was “incredibly mild,” Mordy Oberstein, Semrush’s communications advisor said.

Comparing the change in rank volatility between the July 2022 product reviews update and the March 2022 product reviews update, the data shows that the increases are minimal. Some niches “didn’t even crack a full point increase, the highest fluctuation rate the Semrush Sensor recorded during the update,” Mordy Oberstein added.

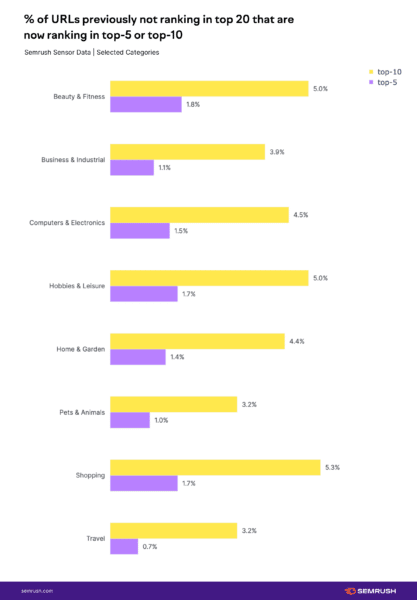

This chart shows a comparison between the July and March 2022 product reviews updates, showing July was almost insignificant compared to March:

This also holds when you isolate just the peak changes, the most volatile snapshot in time during each update: you really cannot compare the two. The July update was nothing compared to the March update.

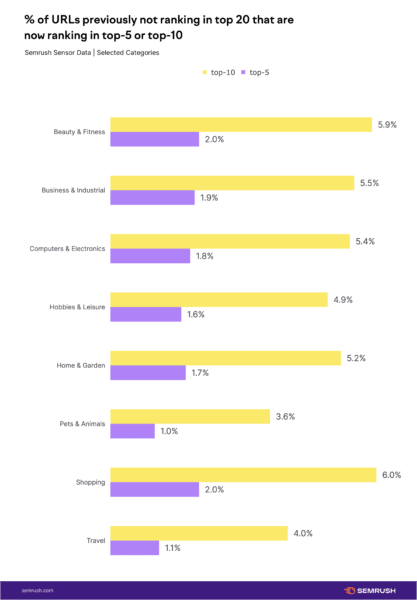

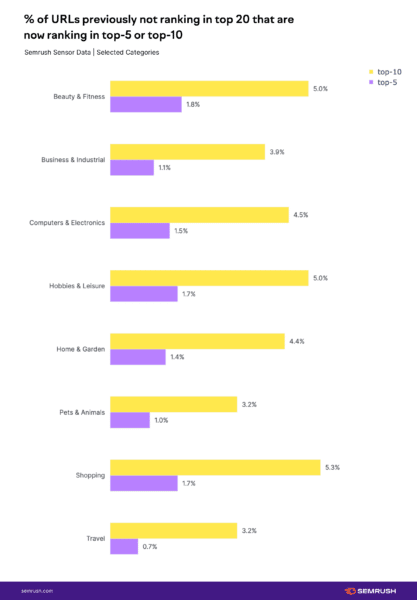

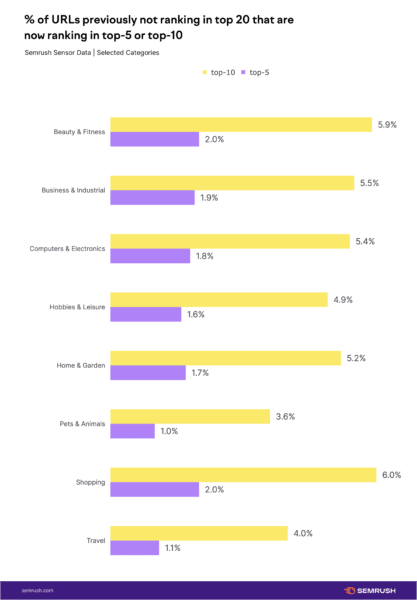

Here is another interesting chart showing the newly ranked URLs after each update and also the URLs not previously in the top 20. Click on each chart to expand:

One note: around June 15th, Semrush lowered the sensitivity levels its Sensor uses. Semrush confirmed this with us today, but it also posted about it on Twitter some time ago, which is why we asked for confirmation for this story. That being said, the other tools showed similar findings: very little volatility related to this update.

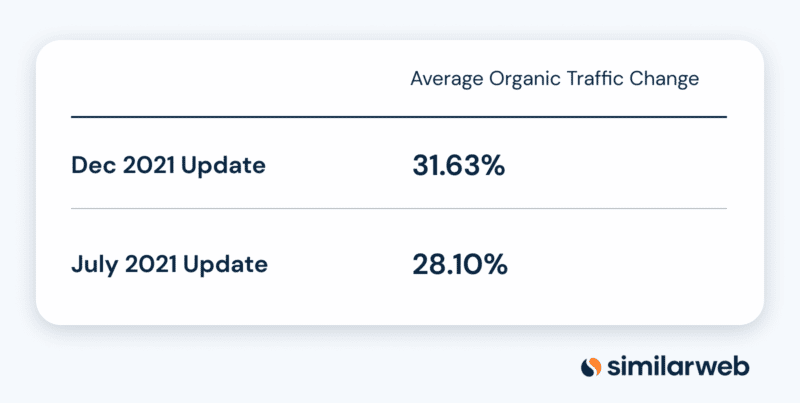

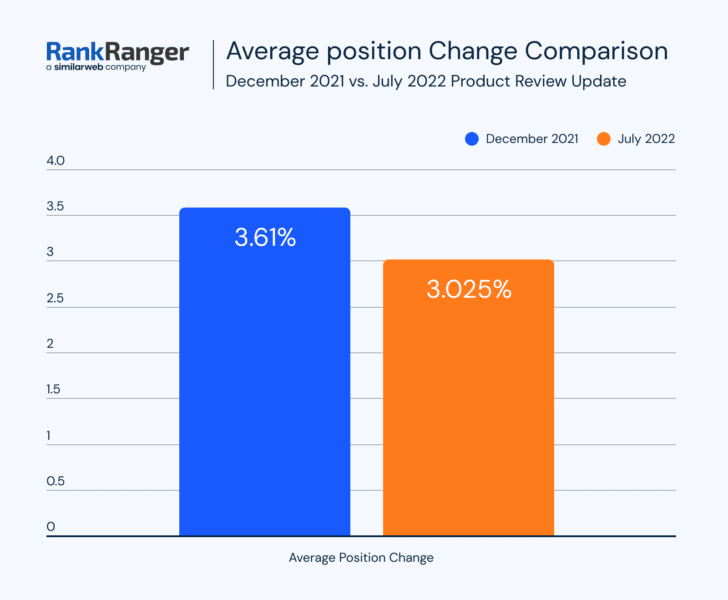

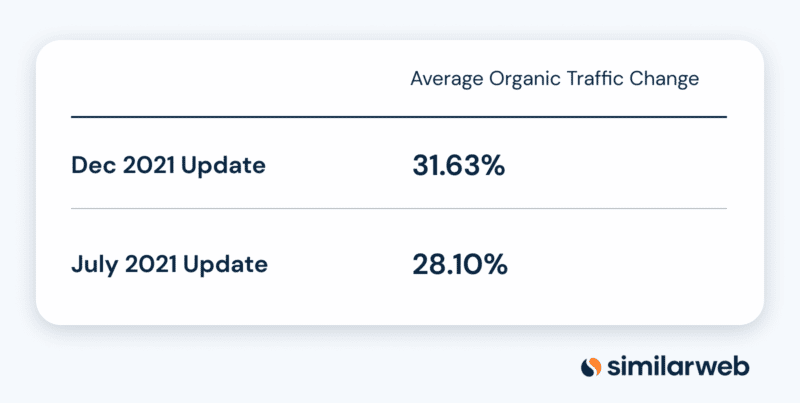

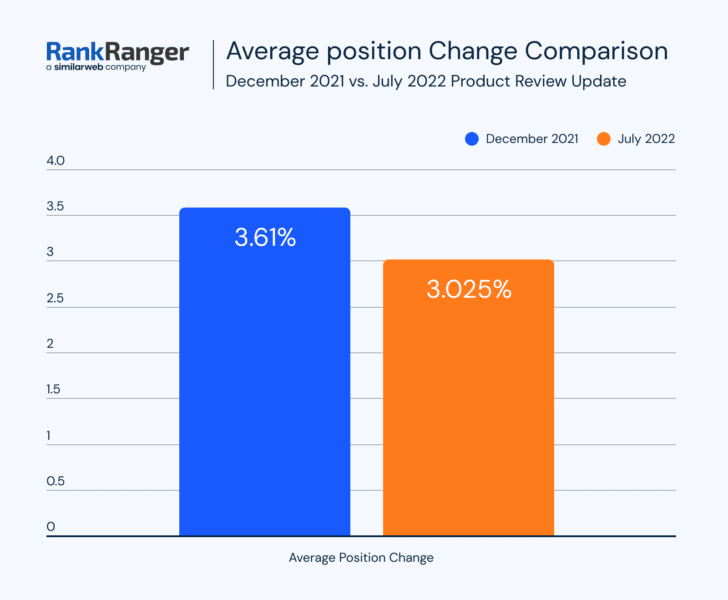

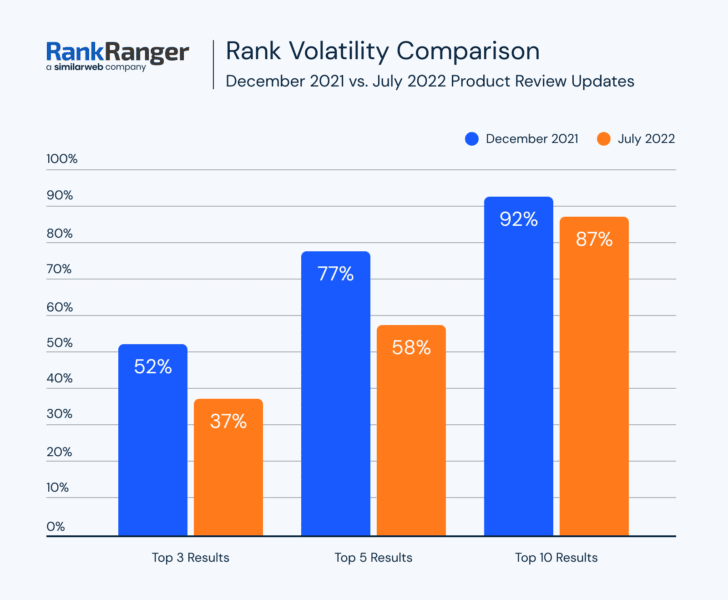

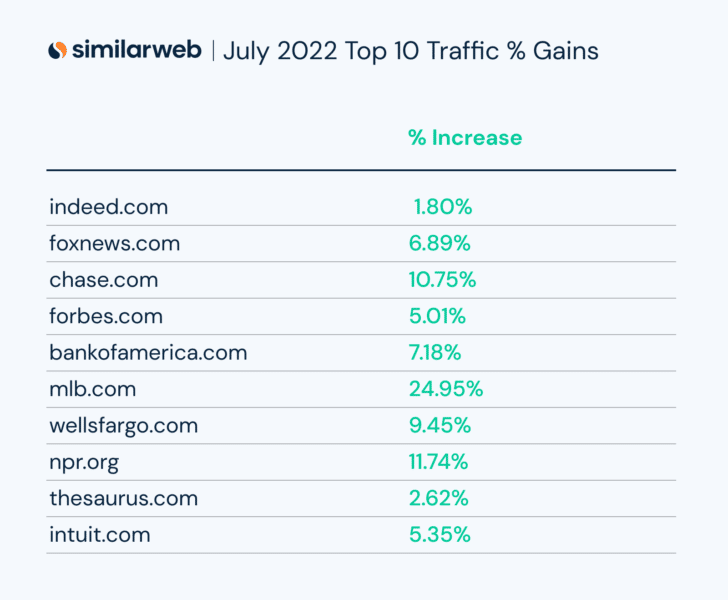

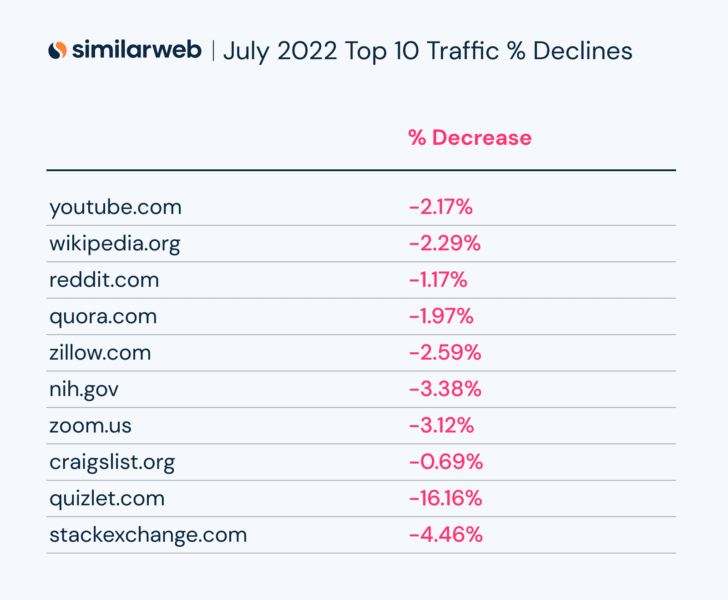

RankRanger/Similarweb. RankRanger, a Similarweb company, showed similar results: RankRanger data “didn’t see any unusual rank fluctuations,” according to Shay Harel from the company. “The latest Product Review update was pretty uneventful,” Shay Harel added. This is based on data from both RankRanger and Similarweb, RankRanger’s new parent company, where both traffic data and rank fluctuation data show lower levels of fluctuations.

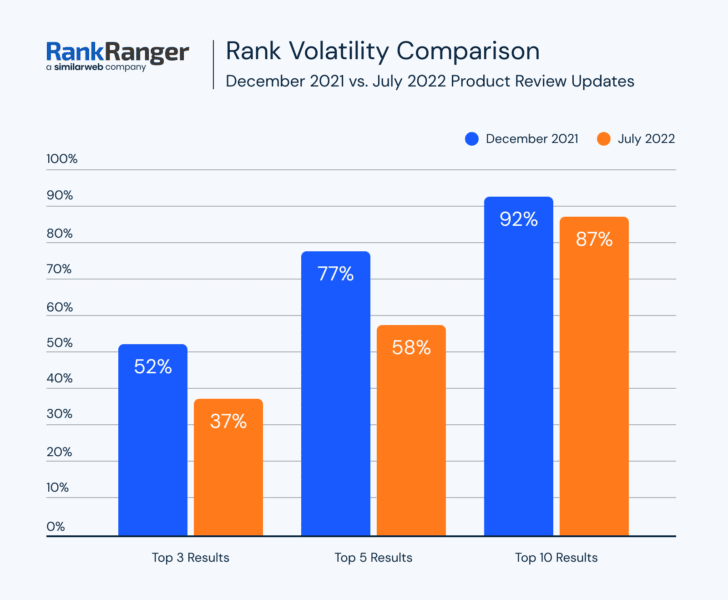

This chart below shows that the fluctuations and volatility during the period of time of the July 2022 product reviews update were limited, to say the least:

Now that RankRanger is part of Similarweb, it can also compare traffic from Google Search. That comparison also showed that the December update was larger than the July update:

RankRanger compared this July update with the December update, not the March one. So keep that in mind, since Semrush compared it to the March update, which was the most recent one we had prior to this July update.

Now broken down by position, you see a similar picture:

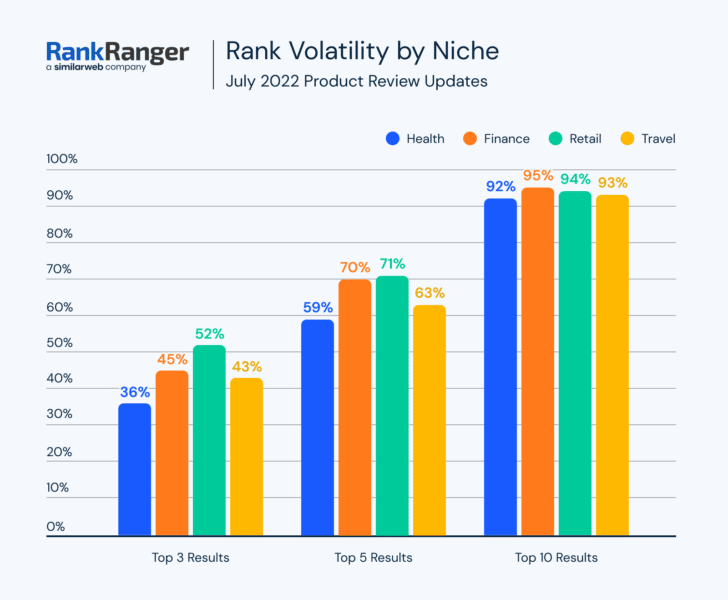

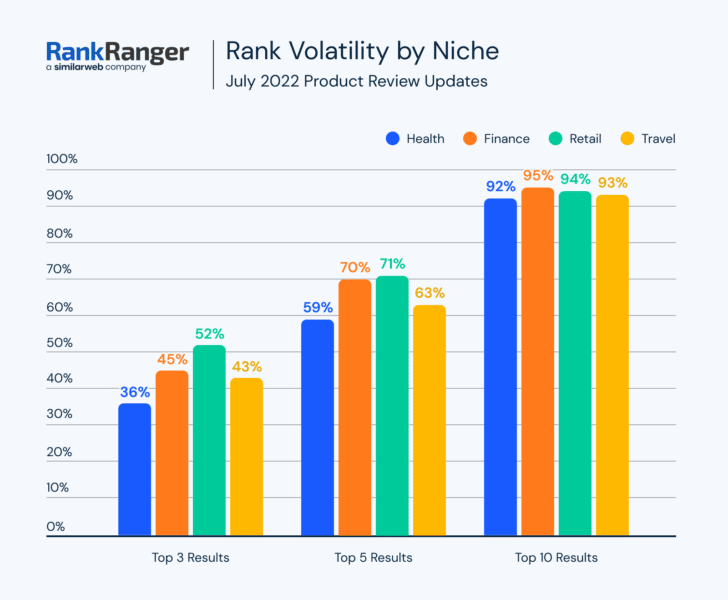

RankRanger also showed the volatility by niche for this last update:

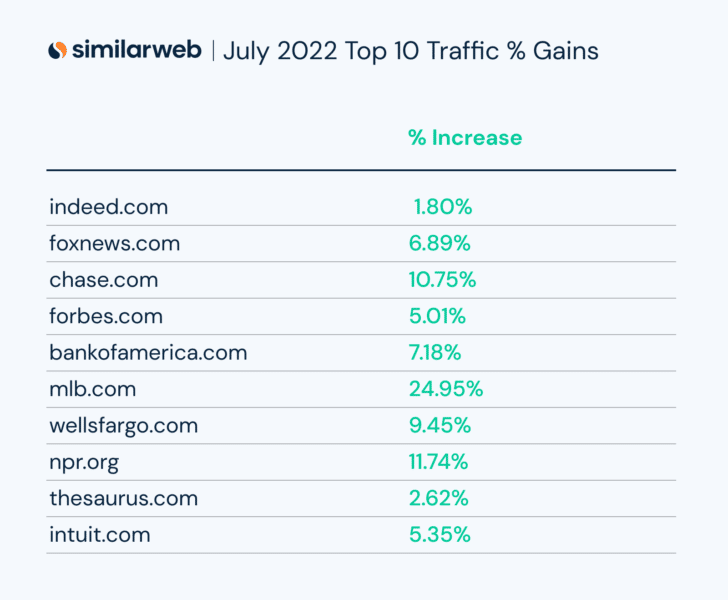

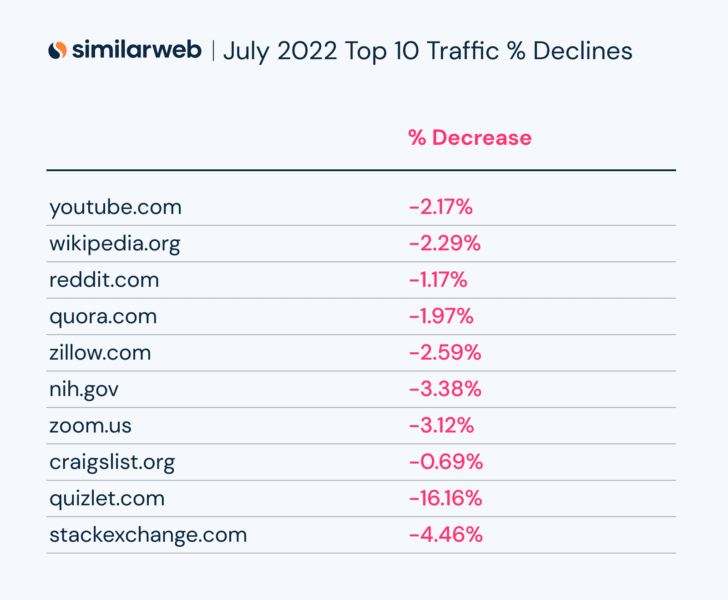

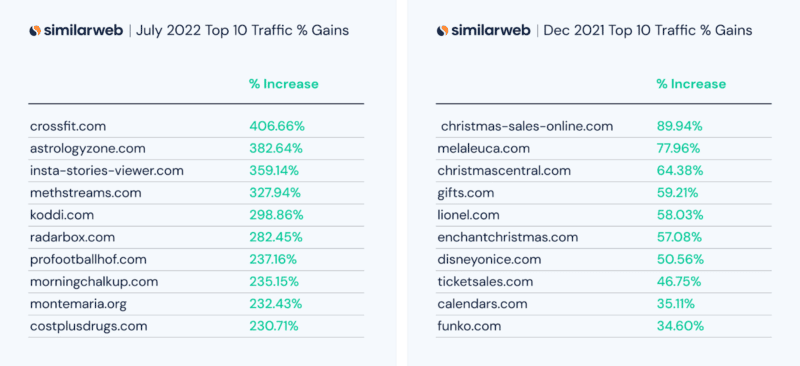

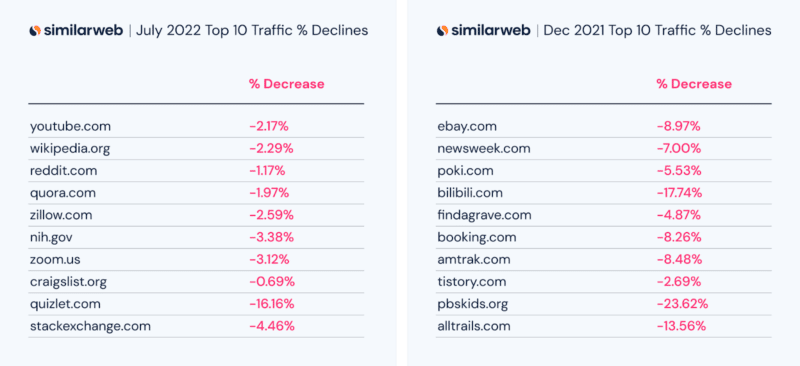

Here are some top winners and losers from Similarweb’s data:

Sistrix. Again, the data shows the same story, “after an initial analysis it’s clear that this update did not have a major impact on SERPs,” Steve Paine from Sistrix told us. Sistrix sent us a few examples of sites that did see movement both up and down after this update:

seoClarity and Moz. Both of those toolset providers told us they saw very little, just like the toolsets above. “Our internal tools are showing minimal changes,” Mitul Gandhi from seoClarity told us. He added that it is “hard to tell in a quick view anything more than standard fluctuations.” Dr. Pete Meyers from Moz also told me that he was unable to pin down a lot on this last update, saying there is not much to see with this update.

More on the July 2022 product reviews update

Google product reviews update. The Google product reviews update aims to promote review content that is above and beyond much of the templated information you see on the web. Google said it will promote these types of product reviews in its search results rankings.

Google is not directly punishing lower-quality product reviews that have “thin content that simply summarizes a bunch of products.” However, if you provide such content and find your rankings demoted because other content is promoted above yours, it will definitely feel like a penalty. Technically, according to Google, this is not a penalty against your content; Google is just rewarding sites with more insightful review content with rankings above yours.

Technically, this update should only impact product review content and not other types of content.

July 27 to August 2. This July 2022 product reviews update took only six days to fully roll out, which, to be honest, is surprising. We saw very limited changes from the tracking tools and, while some sites seemed to get hit hard by this update, there does not seem to have been a lot of SEO community chatter around ranking changes due to it. In fact, we saw a spike on August 3rd, but that was clearly after this update was complete.

What to do if you are hit. Google has given advice on what to consider if you are negatively impacted by this product reviews update. We posted that advice in our original story. In addition, Google provided two new best practices around this update: one is to provide more multimedia around your product reviews, and the second is to provide links to multiple sellers, not just one. Google posted these two items:

- Provide evidence such as visuals, audio, or other links of your own experience with the product, to support your expertise and reinforce the authenticity of your review.

- Include links to multiple sellers to give the reader the option to purchase from their merchant of choice.

Google added the following criteria for what matters with the March 2022 product reviews update:

- Include helpful in-depth details, like the benefits or drawbacks of a certain item, specifics on how a product performs, or how the product differs from previous versions

- Come from people who have actually used the products, and show what the product is physically like or how it’s used

- Include unique information beyond what the manufacturer provides — like visuals, audio or links to other content detailing the reviewer’s experience

- Cover comparable products, or explain what sets a product apart from its competitors

Google added three new points of advice for this third release of the product reviews update:

- Are product review updates relevant to ranked lists and comparison reviews? Yes. Product review updates apply to all forms of review content. The best practices we’ve shared also apply. However, due to the shorter nature of ranked lists, you may want to demonstrate expertise and reinforce authenticity in a more concise way. Citing pertinent results and including original images from tests you performed with the product can be good ways to do this.

- Are there any recommendations for reviews recommending “best” products? If you recommend a product as the best overall or the best for a certain purpose, be sure to share with the reader why you consider that product the best. What sets the product apart from others in the market? Why is the product particularly suited for its recommended purpose? Be sure to include supporting first-hand evidence.

- If I create a review that covers multiple products, should I still create reviews for the products individually? It can be effective to write a high-quality ranked list of related products in combination with in-depth single-product reviews for each recommended product. If you write both, make sure there is enough useful content in the ranked list for it to stand on its own.

Why we care. If your website offers product review content, you will want to check your rankings to see if you were impacted. Did your Google organic traffic improve, decline, or stay the same? Long term, you will want to put a lot more detail and effort into your product review content so that it is unique and stands out from the competition on the web.

We hope you, your company, and your clients did well with this update.

The post Google July 2022 product reviews update had very little ranking volatility, say data providers appeared first on Search Engine Land.

Courtesy of Search Engine Land: News & Info About SEO, PPC, SEM, Search Engines & Search Marketing

Wednesday, August 10th, 2022

Effective digital transformation can accelerate organic marketing growth—and maintain that growth despite future economic or regulatory challenges.

Join search experts from Conductor in an upcoming webinar and learn:

- The crucial role organic and SEO play in enterprise digital transformation.

- The importance of diversifying marketing efforts and what that looks like.

- How to apply data-driven insights to elevate the customer experience.

Register today for “Beyond the Buzzword: Transform Digitally to Drive Organic & SEO Growth,” presented by Conductor.

The post Webinar: The crucial role SEO plays in digital transformation appeared first on Search Engine Land.


Tuesday, August 9th, 2022

Technical SEO issues are more common than most users expect, but you do not have to hire a team of specialists to resolve them.

Developers and web designers want to ensure everything on their website is up and running at optimal levels. Just as you monitor your website’s overall health, monitoring its SEO is important to keep your ranking growing.

In this article, we will discuss some of the issues that affect your website’s technical SEO and how to find and solve them. We will also cover tools that you can use to better manage and monitor your website’s SEO rank and Domain Authority score.

Domain Authority

Your Domain Authority (DA) is a score for your website created by Moz. It rates your site from 1 to 100 to predict how likely it is to appear higher in search engine results.

The score is calculated on different factors; therefore, your domain’s score will fluctuate with time. It uses an algorithm to compare how often Google search uses your domain over a different domain within that same search.

Therefore, if your domain ranks higher than a competitor’s domain, it regards it as having more authority. For example, Amazon will have a higher domain authority than a regular eCommerce website.

Why does Domain Authority matter?

Domain Authority is not a Google ranking factor in and of itself. However, it does provide some insight into your website, its links, and how it compares to your competitors.

You do not have to strive for a DA of 100. That is the highest the score can go, and it is likely very hard to compete with large companies such as Facebook or, as previously mentioned, Amazon.

For comparison, you can review Moz’s Top 500 Websites.

Webmasters should therefore focus on their links, keywords, and other SEO aspects that will affect their Google ranking.

Backlinks

Sometimes referred to as inbound or incoming links, backlinks occur when one website is linked to another.

When your website receives a backlink from an authoritative website, you gain what is known as a “vote of confidence.”

If multiple sources of information link to your website or the same content, search engines regard your content as high quality.

However, what happens when links to your website are deemed as “bad” or low-quality backlinks, and what does this mean?

Toxic backlinks

Google defines bad backlinks as:

Any links intended to manipulate PageRank or a site’s ranking in Google search results may be considered part of a link scheme and a violation of Google’s Webmaster Guidelines. This includes any behavior that manipulates links to your site or outgoing links from your site.

This means if any of the backlinks to your website come from a malicious or manipulative source, your SEO will be negatively affected, as those links reflect on your site as bad content.

Google Search Central has further examples and information on what can cause your backlinks to be flagged as toxic or bad content.

How to find toxic backlinks and how to get rid of them

A link analysis is the best way to identify where your backlinks are coming from. You can use Google Search Console to see your entire linking profile; however, for a more in-depth look at your backlinks and their sources, you can use other resources.

Semrush has a Backlink Analysis tool that lets you review your links before making any changes. It is to be noted that Semrush is a paid resource.

In order to address toxic backlinks, you can submit a disavow request in Google Search Console. This lets Google know to ignore the links and not to count them against your site.

Disavowing backlinks is a measure that should only be used if the links to your website are actively targeting your site and bringing your rank down.
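For illustration, a disavow list is a plain-text file with one entry per line: either a full URL or a `domain:` entry, with `#` marking comment lines. The following is a minimal Python sketch that builds such a file from links flagged in a backlink audit. The function name, its parameters and the sample URLs are hypothetical, and the result would still need to be reviewed and uploaded manually.

```python
from urllib.parse import urlparse


def build_disavow_file(toxic_urls, whole_domains=()):
    """Return disavow-file text: one URL or `domain:` entry per line.

    toxic_urls: individual linking pages to disavow (hypothetical input).
    whole_domains: hostnames to disavow entirely (hypothetical input).
    """
    domains = sorted({d.lower() for d in whole_domains})
    lines = ["# Disavow list generated from a backlink audit"]
    lines += [f"domain:{d}" for d in domains]
    for url in sorted(set(toxic_urls)):
        # Skip single URLs already covered by a domain-level entry.
        if urlparse(url).hostname not in domains:
            lines.append(url)
    return "\n".join(lines) + "\n"


print(build_disavow_file(
    ["http://spam.example/links.html", "http://bad.example/page1"],
    whole_domains=["bad.example"],
))
```

Listing a whole domain is the blunter option, so it makes sense to reserve it for sites where every link is unwanted.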

Valid markup

Although drag and drop builders are amongst the most popular ways to design a website today, all sites are still made out of code, and this will also be reviewed by search engines.

W3C coding standards

The World Wide Web Consortium (W3C) is an international community that works together to develop Web standards.

It offers free access to these standards as its principle is Web for all, Web on everything. This means all sites should be accessible to users across browsers and devices.

What do coding standards have to do with SEO?

These standards help ensure that your content is available everywhere. If your code does not validate against them, there may be cross-compatibility issues and errors that can negatively impact your SEO.

Validating your code per W3C standards does not directly affect your SEO, but it can resolve a couple of issues, including:

- Malformed code

- Content rendering

- Microdata markup

- Heading structure

It is possible to validate your code in a variety of ways, including by URL, file upload and direct input on the W3C website.

Having your code validated will also help ensure that your site is available across the globe and across platforms, reduce code bloat, and create a better user experience overall, all of which will contribute to better SEO management.
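Heading structure, one of the items listed above, is easy to spot-check locally. The sketch below is not the W3C validator. It is a minimal Python illustration (the class and function names are hypothetical) that flags places where a page jumps more than one heading level, which is a common convention rather than a formal validation rule.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Collect heading levels (h1 to h6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def skipped_heading_levels(html):
    """Return notes for headings that skip a level, e.g. h1 then h3."""
    parser = HeadingOutline()
    parser.feed(html)
    notes = []
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            notes.append(f"h{prev} is followed by h{cur}")
    return notes


sample = "<h1>Guide</h1><h3>Details</h3><h2>Summary</h2>"
print(skipped_heading_levels(sample))
```

A check like this only covers one narrow aspect of markup quality; the W3C validator remains the reference for malformed code.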

Other factors that might affect your technical SEO

The following are some further issues you might encounter after auditing your website.

- Resources blocked by robots.txt – If you block CSS or JS here, search engines cannot render your content.

- Broken links – Not only are broken links bad for the user experience but because your website is setting outbound links to pages that do not exist anymore or are misspelled, it reflects as bad content on your website.

- Missing images – Similarly to broken links, missing images occur when the image that was in place on a page was removed, or its link updated. Ensure your images are up to date and optimized for search engines.

- Server errors

- 404 not found – per Google, there are a variety of ways a 404 page could affect your website. Having multiple 404 pages with no 301 redirects or custom 404 pages can reflect bad content or issues with your links as they oftentimes will continue to be crawled.

- 301 redirect loops – this error occurs when one of your links redirects to a new location that, in turn, continues to redirect. As Google Search cannot reach a final destination, it is not able to gather information from that page. Be sure to update your links to point to the correct destination to eliminate the need for a 301.

- Mixed content warnings – Mixed content happens when an HTTPS page contains HTTP elements. Non-secure HTTP elements mean a page is not secure and could be attacked, negatively affecting your SEO.
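Several of the checks above can be approximated with Python’s standard library. The sketch below is a rough illustration only, and all function names are hypothetical. It tests URLs against robots.txt rules, lists `http://` resources referenced by a page, and detects loops in a redirect map you have already collected. Note that `urllib.robotparser` uses simple prefix matching, so its answers may differ from how Google interprets wildcard rules.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def blocked_resources(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that robots_txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


class _InsecureResources(HTMLParser):
    """Collect http:// subresources (src attributes, stylesheet links)."""

    def __init__(self):
        super().__init__()
        self.found = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "link" and "stylesheet" in (attrs.get("rel") or "").lower():
            candidate = attrs.get("href")
        else:
            candidate = attrs.get("src")
        if candidate and candidate.lower().startswith("http://"):
            self.found.append(candidate)


def find_mixed_content(html):
    """Return http:// resources referenced by a page served over HTTPS."""
    parser = _InsecureResources()
    parser.feed(html)
    return parser.found


def follow_redirects(start, redirects, max_hops=10):
    """Walk a {source: target} redirect map; return (chain, looped)."""
    chain = [start]
    while chain[-1] in redirects and len(chain) <= max_hops:
        target = redirects[chain[-1]]
        chain.append(target)
        if target in chain[:-1]:
            return chain, True
    return chain, False


# Hypothetical sample data for a quick local run.
robots = "User-agent: *\nDisallow: /assets/js/\n"
print(blocked_resources(robots, ["https://example.com/assets/js/app.js"]))
print(find_mixed_content('<img src="http://example.com/logo.png">'))
print(follow_redirects("/a", {"/a": "/b", "/b": "/a"}))
```

Ordinary `<a href>` links are deliberately ignored by the mixed content check, since linking to an HTTP page does not load insecure content into your own page.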

Further resources

There are multiple tools to check your SEO standing and how to improve it. These are just some of our recommendations.

- Backlinko is a great resource for SEO training and link-building strategies.

- Google Core Web Vitals is a set of metrics for checking your site speed and what might be affecting it. You can review them in tools such as PageSpeed Insights and Google Search Console.

- Google Search Console is Google’s free tool for link and page checks and much more.

- ScreamingFrog is desktop-based software that crawls your entire website and integrates with data from your Google services. The audit scans for broken links, duplicate content and server errors amongst technical data points.

- SEORCH can give you vital information on your keyword density, heading structure, and code-to-text ratio.

- Semrush is a paid resource with incredible tools that help you understand your links, keywords, ranking, and more. They also offer courses, e-books and community support.

Final words

Technical SEO can be intimidating for some users as it is not as readily available to change as general SEO modifications. Oftentimes it requires changes to code, site architecture and the web server in order to resolve issues.

Keeping an eye out for these issues with consistent monitoring and full site audits helps you catch issues early on. Using tools like Semrush and Google Search Console will help your site grow not only its rank but also its Domain Authority.

If you are interested in learning more about improving your SEO ranking and optimizing your website, amongst other things, check out InMotion Hosting’s blog.

The post Top technical SEO issues every webmaster should master appeared first on Search Engine Land.


Tuesday, August 9th, 2022

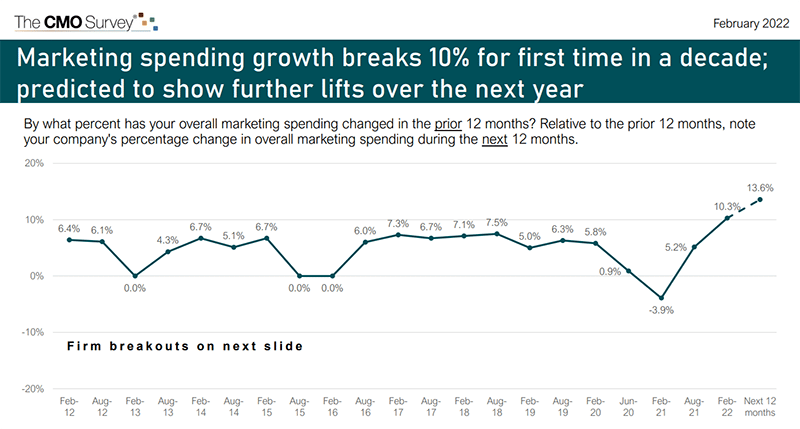

Business investment in marketing these days is soaring, especially in digital marketing.

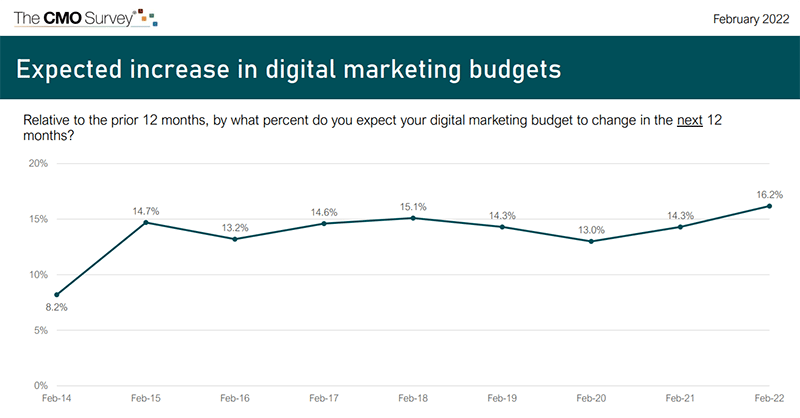

In fact, for the first time in a decade, marketing growth topped 10% from February 2021 to February 2022. According to the latest CMO Survey report, marketing spending grew by 11.8% compared to the previous 12 months. And it’s projected to grow even faster over the next year, to 13.6%.

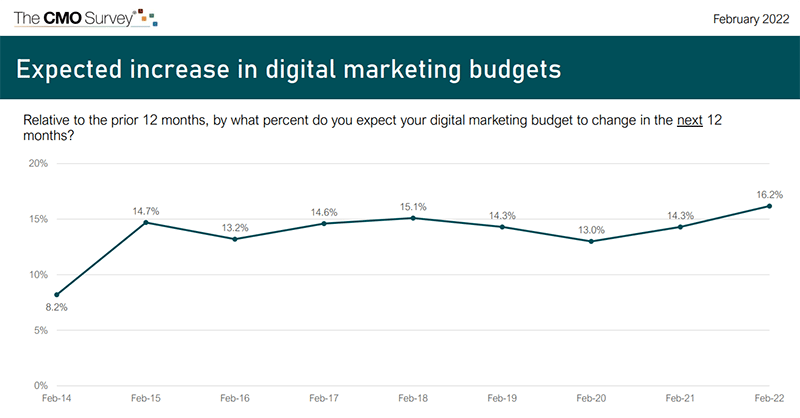

The digital marketing channel specifically accounts for the bulk of that marketing spend, at 57.1%. According to that same report, digital marketing spend is expected to grow by a whopping 16.2% over the next year.

But how much of digital marketing spending goes toward search engine optimization?

In 2019, U.S. companies spent $73.38 billion on SEO out of a total of $776.30 billion for all digital marketing – roughly 9.5%, according to an earlier report by Borrell Associates.

Those who do SEO in-house (at least among local businesses) report higher costs and lower returns than those who hire a consultant or agency, which yields lower costs and higher returns, according to the report.

According to the report:

“Those who use third parties rate the third party’s effectiveness higher than their internal skill. SEO and web design/development particularly skew towards third parties being more effective.”

That said, SEO is an investment in your business’s future revenue. Think about it:

- What drives a business is sales.

- What drives sales is leads.

- Digital leads come in through a website.

- People find a website through impressions in the search results.

SEO allows businesses to own the top of the sales funnel: impressions of your website in the search results. And, some sectors find organic search drives 2x more revenue than other channels.

So what determines your SEO budget? I’ll touch on that next.

Factors that determine your SEO budget

What percentage of your budget should go toward SEO?

It’s not black and white, but the following factors should determine how much you invest:

- Your revenue

- Your competition

1. Your revenue

I recommend that the greater of $8,000 per month or 5% to 10% of your business revenue go toward SEO. In a highly competitive space, you should lean toward the high end. This is what you will see for businesses that are serious about competing.

Spending at least $8,000 a month usually allows for a good starting point with ample expert resources. At the high end, we have clients at six times that each month.

Also, consider how much money you’re putting toward paid advertising. For example, a national brand that runs PPC campaigns to attract new customers should spend approximately 25% as much additionally on organic SEO. The two channels complement each other to help drive website traffic.

I think it’s useful to say 25% of PPC spend, or at least $8,000 a month, is a reasonable estimate of SEO spending for companies that use ads.
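These rules of thumb can be written down as a small calculation. The Python sketch below is only an illustration: the function name is hypothetical, and it assumes revenue and PPC spend are both measured per month, which the figures above do not state explicitly. It returns the revenue-based and PPC-based estimates separately rather than guessing how to combine them.

```python
def seo_budget_estimates(monthly_revenue, monthly_ppc_spend=None,
                         revenue_share=0.05, floor=8_000):
    """Return monthly SEO budget estimates from the rules of thumb above.

    revenue_share: fraction of revenue, between 0.05 and 0.10.
    floor: the $8,000 per month minimum.
    Assumption: all inputs are monthly figures in dollars.
    """
    if not 0.05 <= revenue_share <= 0.10:
        raise ValueError("revenue_share should be between 0.05 and 0.10")
    # Revenue rule: the greater of the floor or a share of revenue.
    estimates = {"revenue_based": max(floor, revenue_share * monthly_revenue)}
    if monthly_ppc_spend is not None:
        # PPC rule: 25% of PPC spend, or at least the floor.
        estimates["ppc_based"] = max(floor, 0.25 * monthly_ppc_spend)
    return estimates


print(seo_budget_estimates(100_000, monthly_ppc_spend=60_000,
                           revenue_share=0.10))
```

For a smaller business the floor dominates: at $50,000 in monthly revenue, 5% is $2,500, so the estimate stays at $8,000.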

2. Your competition

Consider that most search engine queries yield at least a million search results. And you have to be on Page 1 to even matter.

Any business that is competing in organic search has its work cut out for it. But, if you are in a difficult niche or are up against big brands with bigger budgets, you may have to work a little harder and faster.

This often requires a bump in your SEO budget. And you have to be willing to do this or risk being irrelevant in the search results.


How to decide your SEO budget

So you know that two basic factors influence your SEO budget: your revenue and your competition. Let’s put this into perspective.

We know that there is massive competition in the search results. So the question isn’t only: “How much do you want to spend on SEO?” But also: “How fast do you want to beat the competition?”

This is really what determines your budget. At a minimum, you should spend 5% to 10% of your revenue on SEO. But if you want to get ahead faster, you typically spend more.

That does not mean blindly investing in SEO with the promise that more money = better results.

But you do need resources. You need to know who you are hiring, and they need to have an excellent reputation and deep expertise.

If you’re using a third-party SEO agency, make sure you only hire experts. Unfortunately, many businesses settle for inexpensive SEO services. Cheap SEO is a near-death experience, and it will cost you more time and money to crawl out of the grave you’ve dug than if you were to invest in a healthy SEO strategy upfront.

With a nice budget that affords a true expert, you can learn how to make the most impactful SEO moves with the resources you have. And, if you can be more nimble than the competition in making those changes, you have a better chance of getting ahead.

If you are able, take advantage of downturns when possible. Those who do not have the knee-jerk reaction of pulling budget for digital marketing when the outlook is shaky will have the chance to ramp up and pass their competition.

Consider diverting budget to SEO

If your marketing budget is already maxed out on other channels, consider diverting some of your budget to SEO.

For instance, say you are spending a significant amount on PPC ads. Carving out 5% to 10% of that for SEO shouldn’t be an issue.

Especially when you consider how SEO trumps PPC on average conversion rates, you will thank yourself later. And, SEO has staying power for your brand’s presence online. You can’t say that for ads – if you turned off your advertising tomorrow, you’d have no residual value in the search results.

SEO is more cost-effective in the long run because your optimized webpages can continue driving traffic for years.

Yes, you must maintain leads coming in today (be it through PPC or something else), so I’m not suggesting you shut those activities off. But, if you have a good stream of leads coming in now, invest some of your budget into the future – and SEO will get you there.

The post What percentage of your budget should go toward SEO? appeared first on Search Engine Land.


Tuesday, August 9th, 2022

New ad channels pop up seemingly overnight. Headlines tout the popularity of the latest and greatest options, and marketers feel the rush to participate. For those in the B2B space, it can be even more complex trying to decipher whether the newest trend is worth investing the time and effort to help you reach your target audience.

Join experts at MNTN who share performance metrics used to determine the effectiveness of ad channels they’ve tested—whether it’s a new social channel or even Connected TV.

Register today for “Leap or Linger: Determining Which Ad Platforms to Test for Your B2B Brand,” presented by MNTN.

The post Webinar: What ad channels work best for your brand? appeared first on Search Engine Land.


Tuesday, August 9th, 2022

Last night at about 9:30 pm ET, Google had a widespread outage with Google Search. The issue lingered through the night, but restoration efforts seemed to mitigate some of the connectivity issues many have experienced. However, this seemed to result in a cascade of other issues with Google Search around search quality in general.

The Google Search issues. It seems that the outage, although the most noticeable issue, is not the only issue. I’ve seen issues with the overall search results impacting search quality. These issues include:

- Outages and inaccessibility of Google Search

- Old pages dropping out of Google Search

- New pages not being indexed by Google Search

- Google Search results look stale and outdated

- Huge ranking fluctuations

I posted some screenshots of the issues on the Search Engine Roundtable.

What happened? It seems a Google data center in Council Bluffs, Iowa, caught fire; the fire injured some workers and caused these issues in Google Search. The incident occurred at 11:59 a.m. local time on Monday, the Council Bluffs Police Department told SFGATE. A Google spokesperson told them:

“We are aware of an electrical incident that took place today at Google’s data center in Council Bluffs, Iowa, injuring three people onsite who are now being treated. The health and safety of all workers is our absolute top priority, and we are working closely with partners and local authorities to thoroughly investigate the situation and provide assistance as needed.”

Google is aware. Google is aware of the issue, as you can see from the statement above. But Google is also aware of the overall Google Search issues related to this fire. John Mueller from Google commented on Twitter about how things should come back to normal as restoration efforts are underway. Here is what he posted:

I'd keep an eye on it today, and please let me know if it doesn't look like the main URLs are settling down again. (We don't index everything, so I'd focus on the important URLs for things like this.)

— johnmu of switzerland (personal) (@JohnMu) August 9, 2022

Why we care. If you notice ranking changes, whether positive or negative, or big swings in traffic from Google Search, this might be why. Things should return to normal as this issue gets resolved, but it is unclear how long it will take for everything to come back to the state it was in prior to this fire.

Our thoughts and prayers should be with those who were injured in this data center fire.

The post Google Search outage causing major issues with search quality and indexing appeared first on Search Engine Land.


Monday, August 8th, 2022

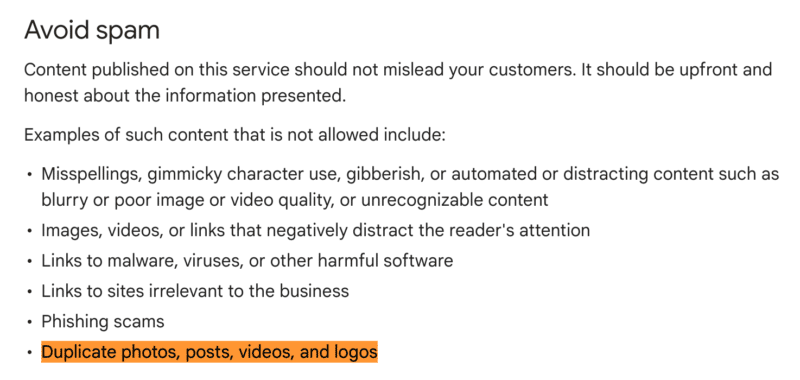

Google has updated what it considers to be spam when it comes to Google Business Profile posts in the Business Profile posts content policies. The new line added under the avoid section says that examples of content that is not allowed include “duplicate photos, posts, videos, and logos.”

Duplicate. Yes, “duplicate photos, posts, videos, and logos” was added as an example of Google posts that would be rejected or removed because they are considered spam. That line was not in the Google document previously and was just recently added.

Screenshot. Here is a screenshot of the addition:

Consequence. What happens if you are caught posting duplicate photos, posts, videos, or logos in your Google Business Profile posts? Well, those posts may be rejected or removed from Google Search and Google Maps.

Joy Hawkins and Colan Nielsen posted this on Friday:

We have been hearing a ton of complaints about rejected posts recently. If you're using logos in your images or stock photos ("duplicate photos"), that could be why. https://t.co/ajVe9VfkZj

— Joy Hawkins (@JoyanneHawkins) August 5, 2022

Why we care. If you do a lot of Google Business Profile posts, make sure not to duplicate photos, posts, videos, or logos in them. If you have seen some of your posts rejected recently, it might be related to the revised guidelines Google added the other day.

The post Google updates Business Profile posts spam policies appeared first on Search Engine Land.

